When a team adopts AI coding assistants, the first gains show up at the individual level: one developer can draft, modify, and refactor code much faster than before.

But delivery speed isn't limited by typing.

When you look at the full delivery lifecycle, from requirements through release, new friction appears:

- Ambiguous requirements become code quickly, and misunderstandings scale with them.
- Reviews have to process more change, and inconsistency becomes easier to introduce.
- More integration and testing issues surface because “generated” doesn't mean “aligned.”
- Production risk is harder to reason about when the volume of change rises.

So yes, local speed improves. But that doesn't automatically translate into system-level throughput. It's like buying a Ferrari and driving it on muddy roads: the engine is powerful, but your arrival time is determined by road conditions and traffic. In our experience, the real question isn't “How do we generate more code?” It's how do we make AI-generated changes governable, reviewable, and reusable, so teams get faster and safer?

That led our Thoughtworks internal IT teams (Global IT Services) to a method and workflow we now call Structured Prompt-Driven Development (SPDD). SPDD aims to turn AI assistance from personal efficiency into an organization-level capability that scales, without trading away quality.

Prompts as First-Class Delivery Artifacts

What is SPDD?

Structured Prompt-Driven Development (SPDD) is an engineering method that treats prompts as first-class delivery artifacts.

Instead of relying on ad hoc chats, SPDD turns prompts into assets that can be version controlled, reviewed, reused, and improved over time.

Teams use structured prompts to capture requirements, domain language, design intent, constraints, and a task breakdown. The LLM then generates code within a defined boundary, so output becomes more predictable and easier to validate.

SPDD has two core components: the REASONS Canvas and the SPDD workflow.

The REASONS Canvas

The REASONS Canvas is a structure for producing prompts. It forces clarity around requirements, domain model, solution approach, system structure, task decomposition, reusable norms, and safeguards, so the LLM is guided by intent, not guesswork.

The canvas has seven parts that guide a prompt from intent → design → execution → governance.

Abstract parts (intent & design)

- R - Requirements: What problem are we solving, and what is the definition of done (DoD)?
- E - Entities: Domain entities and relationships.
- A - Approach: The strategy for meeting the requirements.
- S - Structure: Where the change fits in the system; components and dependencies.

Specific parts (execution)

- O - Operations: Break the abstract strategy into concrete, testable implementation steps.

Common standards parts (governance)

- N - Norms: Cross-cutting engineering norms (naming, observability, defensive coding, etc.).
- S - Safeguards: Non-negotiable boundaries (invariants, performance limits, security rules, etc.).

The canvas aligns intent and boundaries before code is generated, shifting uncertainty to the left.

Because the structured prompt captures the full specification, reviewers reason about a single artifact instead of scattered chat logs and partial diffs.

Because every prompt follows the same structure, every prompt becomes governable in the same way.

And as domain knowledge and design decisions accumulate in each prompt, they compound individual expertise across iterations and reduce variability across the team.
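One way to picture "governable in the same way" is a completeness check over the seven sections. The sketch below is purely illustrative: the class, enum, and method names are assumptions made for this example and do not describe how openspdd stores or validates a canvas.

```java
import java.util.ArrayList;
import java.util.EnumMap;
import java.util.List;
import java.util.Map;

// Hypothetical in-memory model of a REASONS Canvas (not openspdd's format).
class ReasonsCanvasSketch {

    // The seven canvas sections, in canvas order.
    enum Section {
        REQUIREMENTS, ENTITIES, APPROACH, STRUCTURE, OPERATIONS, NORMS, SAFEGUARDS
    }

    private final Map<Section, String> content = new EnumMap<>(Section.class);

    // Records the text of one section and returns this canvas for chaining.
    ReasonsCanvasSketch put(Section section, String text) {
        content.put(section, text);
        return this;
    }

    // Lists sections that are absent or blank, so a review gate could
    // reject a prompt that skips part of the structure.
    List<Section> missingSections() {
        List<Section> missing = new ArrayList<>();
        for (Section section : Section.values()) {
            String text = content.get(section);
            if (text == null || text.trim().isEmpty()) {
                missing.add(section);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        ReasonsCanvasSketch canvas = new ReasonsCanvasSketch()
                .put(Section.REQUIREMENTS, "Bill usage per model and plan")
                .put(Section.ENTITIES, "Customer, PricingPlan, Bill");
        // Prints the five sections that still need content.
        System.out.println(canvas.missingSections());
    }
}
```

A check like this only confirms that each section exists; whether the content is right remains a matter for human review.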

The SPDD workflow

The workflow brings prompts into the same discipline as code: commit history, review, and quality gates. It also enforces a simple but powerful rule:

When reality diverges, fix the prompt first, then update the code.

Over time, this changes how teams work. Reviews move away from “spot the bug” toward “check the intent.” Rework becomes more controlled. And successful patterns naturally accumulate into a reusable prompt library that supports AI-First Software Delivery (AIFSD).

If you are familiar with Spec-Driven Development, you may recognize the same starting point: write the spec clearly first, then let the model implement. SPDD takes a different angle. It treats structured prompts as governed, reusable, versioned team assets (REASONS + workflow) that evolve alongside the code, an approach that Birgitta Böckeler categorizes as spec-anchored.

The goal of the SPDD workflow is to turn business input → abstraction → execution → validation → release into a closed loop, and to make sure prompt assets and code evolve together, not separately.

SPDD workflow

The purpose of this workflow is to anchor collaboration on the prompts, so that developers and product owners can avoid repeated cycles of alignment. The prompt sets an explicit boundary for code generation, which limits the effect of the LLM's non-determinism and makes the output easier to govern. By treating structured prompts as first-class artifacts in version control, we turn successful practices into reusable assets, improving consistency and reducing reinvention.

In practice these steps are carried out by commands provided by openspdd, a command-line tool that implements the SPDD workflow. The table below summarizes each command.

| Command | Type | Purpose |
|---|---|---|
| /spdd-story | Optional | Breaks a large requirement into independent, deliverable user stories following the INVEST principle. |
| /spdd-analysis | Core | Extracts domain keywords from requirements, scans relevant code, and produces a strategic analysis covering domain concepts, risks, and design direction. |
| /spdd-reasons-canvas | Core | Generates the full REASONS Canvas: an executable blueprint from high-level rationale down to method-level operations. |
| /spdd-generate | Core | Reads the Canvas and generates code task by task, strictly following the operations, norms, and safeguards defined in the prompt. |
| /spdd-api-test | Optional | Generates a cURL-based API test script with structured test cases covering normal, boundary, and error scenarios. |
| /spdd-prompt-update | Core | Incrementally updates the Canvas when requirements change (requirements → prompt → code). |
| /spdd-sync | Core | Synchronizes code-side changes (refactoring, fixes) back into the Canvas so the prompt stays an accurate record of the current code (code → prompt). |

Enhancing a billing engine with SPDD

A complicated workflow is hard to understand in the abstract, so we have prepared an example workflow of enhancing an existing software system. This system, and its enhancement, are necessarily small so that they are comprehensible within a tutorial article. That said, the enhancement is a full end-to-end example: from creating initial requirements, to analyzing business requirements, to generating and reviewing a structured prompt, to generating and verifying code, to final cleanup and testing.

You can follow along with this example by installing openspdd in your own environment.

The existing system

The existing system is a simple billing engine that calculates bills for using a large language model. It accepts a record that captures how many tokens are used in a session and calculates a bill.

The complete codebase for this initial version is available on GitHub. The repository includes the initial requirements story and all the SPDD artifacts used to generate it. For brevity, we don't describe that initial generation here, but it follows essentially the same steps as the enhancement. We focus on describing the enhancement because most work on a system is enhancement.

The enhancement

Driven by evolving business requirements and direct user feedback, we are enhancing the billing engine to move from a static pricing model to a more sophisticated, flexible infrastructure. This update aims to support diverse subscription strategies and variable, model-specific pricing through the following key changes:

- API enhancement: update the existing POST /api/usage endpoint to accept a new, required modelId parameter (e.g., “fast-model”, “reasoning-model”).
- Model-aware pricing: shift from a single global rate to dynamic pricing, where costs vary depending on the specific AI model invoked.
- Multi-plan billing logic: introduce distinct billing behaviors based on the customer's subscription tier:
  - Standard plan (optimized): retains the global monthly quota, but any overage usage is now calculated using model-specific rates.
  - Premium plan (new): operates with no quota limit. It introduces split billing, where prompt tokens and completion tokens are charged separately at different rates depending on the model used.
- Architectural scalability: implement an extensible design pattern (such as Strategy or Factory) to cleanly isolate the calculation formulas for different plans, ensuring the system can easily accommodate future pricing models.

Because this enhancement description covers both business requirements and technical details, it is typically worked out collaboratively in a pairing session between a PO (or BA) and a developer.

Step 1: Create initial requirements

To kick off the process quickly, we can use the /spdd-story command to generate a user story directly from the enhancement description. Usually, user stories are provided by the PO or BA. However, in our workflow, we can transform stories of any form into a consistent format and size. As long as there is shared alignment on the final acceptance criteria, this step can be performed by a PO, BA, or developer, depending on how the team divides its work.

Instruction:

How spdd-story works

This command breaks a large requirement into independent, deliverable user stories following the INVEST principle (1 to 5 days of work each). Each story includes acceptance criteria written in business language, ready to serve as input for /spdd-analysis.

Its purpose is to make large requirements manageable and to ensure a standardized, predictable format for the subsequent steps.

The AI analyzed the enhancement description and split it into two user stories:

The auto-generated stories are detailed enough to serve as a baseline for a formal project. For this walkthrough we consolidate them into a single simplified story so the example stays self-contained.

Instruction:

Consolidate the following two user stories into a single, simplified story:

@[User-story-1-1-initial]Multi-Plan-Billing-Foundation-&-Standard-Plan-Model-Aware-Pricing.md

@[User-story-1-2-initial]Premium-Plan-Split-Rate-Billing.md

Requirements:

1. Merge both plans (Standard and Premium) into one coherent story.
2. Keep only the sections: Background, Business Value, Scope In, Scope Out, and Acceptance Criteria.
3. Strip implementation-level detail; focus on what the system should do, not how.
4. Acceptance Criteria must use Given/When/Then format with concrete numeric examples.
5. Keep the result concise: no more than one page.
6. Only keep three high-level ACs.

Instructions of this kind rarely produce identical text on every run, because models and sampling introduce small variations, so we still expect to review and tweak the output before treating it as final. The combined story below is the version we refined for this walkthrough: a deliberately simplified consolidation of the two initial stories.

Step 2: Clarify analysis

Before jumping into implementation, the developer reviews the user story to build a shared understanding of what it means in practice. If there are obvious business-level issues, this is the point to align with the BA or PO. In this case the story is clear enough, so we move straight to breaking it down along three dimensions: core logic, scope boundaries, and definition of done.

Core logic

One new required field on the API: modelId. The client now tells us which AI model they used, and that is the key that unlocks the right price.

- Standard Plan: The customer has a monthly token quota. Usage within quota is free. Overage is charged at a model-specific rate (e.g., fast-model $0.01/1K vs. reasoning-model $0.03/1K). Existing quota logic stays; only the rate lookup changes.
- Premium Plan: No quota. Every token is billed from the first one. Prompt tokens and completion tokens are charged separately, each at a model-specific rate. Bill = prompt charge + completion charge. This plan is entirely new.
- Routing: The system determines the customer's plan and dispatches to the matching billing formula. The design must be easy to extend, because Enterprise plans (Story 2) are next.
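The routing idea can be sketched with the Strategy pattern. This is a minimal illustration under stated assumptions, not the code the workflow generated: every class, method, and plan key below is hypothetical, charges are rounded to cents per component, and rates are passed in rather than looked up by modelId.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;
import java.util.HashMap;
import java.util.Map;

// Illustrative sketch only: all names here are hypothetical.
class PlanRoutingSketch {

    private static final BigDecimal TOKENS_PER_RATE_UNIT = BigDecimal.valueOf(1000);

    // Per-model rates in dollars per 1K tokens; each strategy reads the fields it needs.
    static final class ModelRates {
        final BigDecimal overagePer1K;
        final BigDecimal promptPer1K;
        final BigDecimal completionPer1K;

        ModelRates(String overagePer1K, String promptPer1K, String completionPer1K) {
            this.overagePer1K = new BigDecimal(overagePer1K);
            this.promptPer1K = new BigDecimal(promptPer1K);
            this.completionPer1K = new BigDecimal(completionPer1K);
        }
    }

    // One implementation per subscription plan.
    interface BillingStrategy {
        BigDecimal charge(long promptTokens, long completionTokens, long remainingQuota, ModelRates rates);
    }

    // Converts a token count into dollars at a per-1K rate, rounded to cents.
    static BigDecimal price(long tokens, BigDecimal ratePer1K) {
        return BigDecimal.valueOf(tokens)
                .multiply(ratePer1K)
                .divide(TOKENS_PER_RATE_UNIT, 2, RoundingMode.HALF_UP);
    }

    // Standard plan: tokens within the remaining quota are free; only overage is charged.
    static final BillingStrategy STANDARD = (prompt, completion, remainingQuota, rates) -> {
        long overage = Math.max(0L, prompt + completion - Math.max(0L, remainingQuota));
        return price(overage, rates.overagePer1K);
    };

    // Premium plan: no quota; prompt and completion tokens are priced separately.
    static final BillingStrategy PREMIUM = (prompt, completion, remainingQuota, rates) ->
            price(prompt, rates.promptPer1K).add(price(completion, rates.completionPer1K));

    private static final Map<String, BillingStrategy> STRATEGIES = new HashMap<>();
    static {
        STRATEGIES.put("STANDARD", STANDARD);
        STRATEGIES.put("PREMIUM", PREMIUM);
        // A future plan would be one more entry here, with no change to bill().
    }

    // Routing: look up the strategy for the customer's plan and delegate to it.
    static BigDecimal bill(String plan, long prompt, long completion, long remainingQuota, ModelRates rates) {
        BillingStrategy strategy = STRATEGIES.get(plan);
        if (strategy == null) {
            throw new IllegalArgumentException("Unknown plan: " + plan);
        }
        return strategy.charge(prompt, completion, remainingQuota, rates);
    }
}
```

With the reasoning-model rates quoted in this article, `bill("PREMIUM", 10_000, 20_000, 0, new ModelRates("0.03", "0.03", "0.06"))` gives 1.50, and `bill("STANDARD", 30_000, 0, 10_000, ...)` with the same rates gives 0.60 (the split of the 30K tokens between prompt and completion is arbitrary here, since the Standard plan only looks at the total).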

Scope boundaries

We are only calculating the current bill. We are NOT building customer CRUD, NOT querying historical bills, NOT managing subscriptions, and NOT adding or removing models.

Definition of done

The following scenarios restate the story's acceptance criteria with the implementation detail the team needs to verify. The fourth item (Response format) isn't a new business AC; it captures the non-functional contract the developer adds to make the criteria testable end-to-end.

- Validation: Missing modelId → HTTP 400. Unknown customer → HTTP 404. Negative tokens → HTTP 400. All existing validations remain intact.
- Standard Plan billing: A customer with a 100K quota and 90K already used submits 30K tokens for fast-model ($0.01/1K). Expected result: 10K covered by quota, 20K overage, charge $0.20. The same request with reasoning-model ($0.03/1K) yields $0.60: same quota logic, different rate.
- Premium Plan billing: A customer submits 10K prompt tokens + 20K completion tokens for reasoning-model (prompt $0.03/1K, completion $0.06/1K). Expected result: $0.30 + $1.20 = $1.50. No quota, no overage; prompt and completion are billed separately.
- Response format: HTTP 201 returning bill ID, customer ID, token counts, timestamp, modelId, and a plan-appropriate charge breakdown.

If all these scenarios pass, this story is done.
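The validation scenarios can be restated as a small decision function. The sketch below is hypothetical: the class and method names are invented for illustration, and the order of the checks (request-shape errors before the customer lookup) is an assumption, since the story does not say which status wins when several rules fail at once.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

// Hypothetical sketch of the validation rules; names and check order are assumptions.
class UsageValidationSketch {

    static final int CREATED = 201;
    static final int BAD_REQUEST = 400;
    static final int NOT_FOUND = 404;

    // Returns the HTTP status the endpoint would answer with for a given request.
    static int statusFor(String customerId, String modelId,
                         long promptTokens, long completionTokens,
                         Set<String> knownCustomers) {
        if (modelId == null || modelId.trim().isEmpty()) {
            return BAD_REQUEST;   // missing modelId
        }
        if (promptTokens < 0 || completionTokens < 0) {
            return BAD_REQUEST;   // negative token counts
        }
        if (!knownCustomers.contains(customerId)) {
            return NOT_FOUND;     // unknown customer
        }
        return CREATED;           // request is valid; a bill would be created
    }

    public static void main(String[] args) {
        Set<String> customers = new HashSet<>(Arrays.asList("cust-1"));
        System.out.println(statusFor("cust-1", null, 10, 20, customers));          // 400
        System.out.println(statusFor("cust-1", "fast-model", -1, 20, customers));  // 400
        System.out.println(statusFor("cust-9", "fast-model", 10, 20, customers));  // 404
        System.out.println(statusFor("cust-1", "fast-model", 10, 20, customers));  // 201
    }
}
```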

Step 3: Generate analysis context

With the requirements and scope clarified, we use the /spdd-analysis command. By feeding it the business requirements, we instruct the AI to generate a comprehensive analysis context.

How spdd-analysis works

This command extracts domain keywords from the business requirements (e.g. “billing”, “quota”, “plan”) and uses them to scan only the relevant parts of the codebase, not all of it. It identifies existing concepts, new concepts, key business rules, and technical risks.

The output is a context-rich document covering domain concept identification, strategic direction, and risk analysis. It serves as input for the next step: generating the REASONS Canvas.

Instruction:

/spdd-analysis

@[User-story-1]Multi-Plan-Billing-Foundation-&-Model-Aware-Pricing.md

Generated artifact: the initial analysis context document.

This command produces a strategic-level analysis grounded in actual codebase exploration. The output focuses solely on the “what” and “why,” deliberately avoiding granular implementation details at this stage. It typically covers:

- Domain concepts: existing vs. new, relationships, business rules
- Strategic approach: solution direction, design decisions, trade-offs
- Risks & gaps: ambiguities, edge cases, technical risks, acceptance-criteria coverage

Review and refine the analysis context

With our own understanding of the business requirements in mind, we review the generated analysis document, focusing on the areas highlighted in the alignment skill. This review serves two purposes: confirming that our understanding aligns with the AI's interpretation, and discovering edge cases or boundary scenarios the AI may surface that we hadn't considered.

In this particular instance, the review focused on several critical areas:

- Whether the Strategy pattern was appropriately considered.
- Adherence to the OOP principles established in the existing system, particularly ISP and SRP.
- The validity of the proposed strategy for adding new fields.
- Identifying edge cases not previously anticipated.
- Uncovering potential technical risks.

After the review, we found that the AI's analysis largely aligned with our architectural intent; in fact, its considerations were more comprehensive than ours in certain areas.

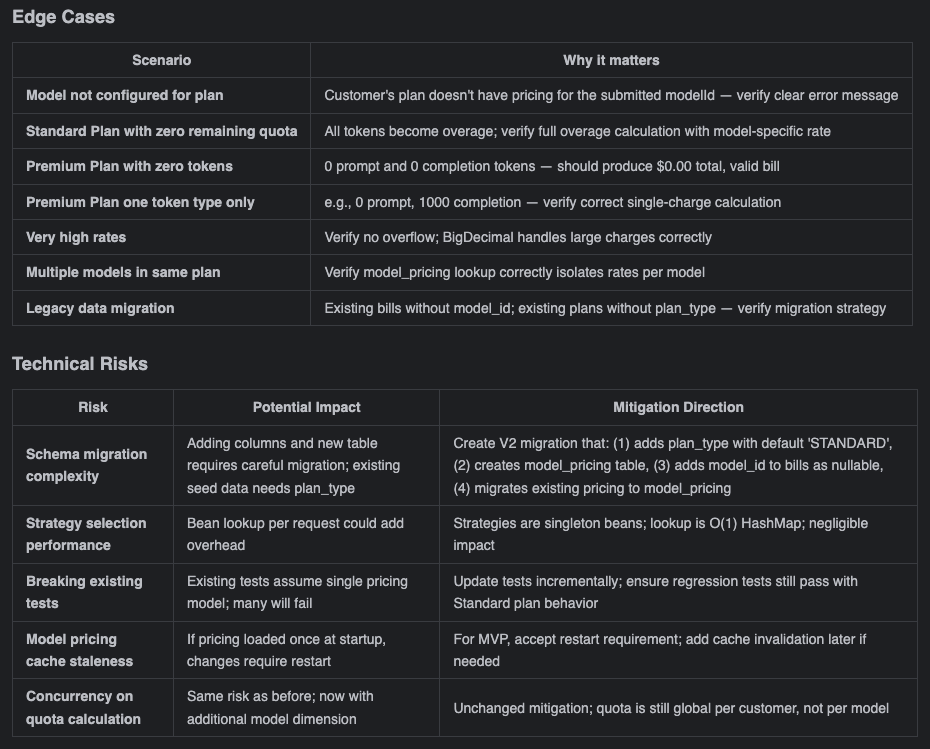

Edge cases and risks from the analysis document

To be clear, at this stage we only have a high-level conceptual alignment. While we can quickly envision the implementation for areas where we have prior experience, we cannot completely map out all the granular technical details for the unfamiliar parts right now.

That is perfectly fine. The overarching direction is aligned. We can proceed to the next step: observing how the AI “simulates” the concrete implementation details within our established framework and context. Once we have these tangible details, we can uncover deeper, hidden issues and make informed trade-offs based on the actual situation, adopting the approaches where the benefits outweigh the drawbacks and discarding the rest.

Decision: accept the analysis as-is and proceed.

Step 4: Generate structured prompt

How spdd-reasons-canvas works

This command reads business context (the output of /spdd-analysis, or a direct requirements description) and combines it with the current state of the codebase. It then generates a design specification across all seven REASONS dimensions, from “why are we doing this” to “what must we not do.”

The output is an executable blueprint. The Operations section is precise down to method signatures, parameter types, and execution steps.

Instruction:

/spdd-reasons-canvas

@GGQPA-001-202603191100-[Analysis]-multi-plan-billing-model-aware-pricing.md

Generated artifact: the initial structured prompt.

By this point, we have already gone through high-level strategy during the analysis phase, so when reviewing the structured prompt we are not starting from scratch. Instead, we are examining how the AI has translated our shared understanding into the REASONS Canvas structure: from strategy to abstraction to concrete details.

Think of it as a progression: the analysis phase gave us strategic clarity; now we are checking whether that clarity has been faithfully carried through into the architectural abstractions and implementation specifics. This is intent alignment at a deeper level, ensuring that before any code is generated, the AI has effectively “simulated” the entire solution within our defined framework. We get to review from a global perspective rather than getting lost in details from the start.

Focus the review on the areas highlighted in the abstraction-first skill. In this case, this foundational context is already embedded in the codebase and the previous structured prompt. Consequently, when generating the structured prompt for this iteration, the AI naturally factors in these architectural guidelines and OO principles. As a result, although the generated content is highly complex, there are remarkably few major issues. We can decide to generate the code from this structured prompt first, and then conduct a deeper review to identify any code-level anomalies later.

So far, we have reached a strong consensus at the intent level, clarifying both the core problem and the resolution path. While there may be slight omissions in the details, this is not a concern; having aligned on the overall scope with the AI makes local optimizations highly controllable. Now we move into the code generation phase.

Step 5: Generate code

This step is more involved, as we are generating the product code and tests, and our reviews have two different outcomes.

Generate product code

Once our structured prompt is locked in, use it to generate the product code.

How spdd-generate works

This command reads the REASONS Canvas and generates code task by task, following the order defined in Operations. It strictly adheres to the coding standards in Norms and the constraints in Safeguards: no improvisation, no features beyond what the spec defines.

The core principle: the prompt captures the intent, and the code is the implementation of that intent. Generated code must correspond one-to-one with this specification.

Instruction:

/spdd-generate

@GGQPA-001-202603191105-[Feat]-multi-plan-billing-model-aware-pricing.md

Generated artifact: code generated based on the structured prompt.

Thanks to the multiple rounds of logical deduction we did earlier using structured prompts, we approach the code review with a clear focus and set of priorities:

- Architecture: does the code strictly follow our expected 3-tier architecture?
- Business logic: does the Service layer implementation align with our initial intent?
- Scope of change: are the modifications strictly confined to the boundaries defined by the structured prompt, avoiding unrelated changes or scope creep?

In this specific case, thanks to the highly precise context, the generated code largely met our expectations, apart from a few potential “magic numbers.” We will clean those up once the functional verification is complete.

The key takeaway here is: don't worry about making mistakes, and don't stress over not catching every single detail on the first try. As long as we keep iterating and advancing through the SPDD workflow, there are plenty of opportunities to course-correct. Minor code smells are fine for now; we verify the core functionality first, then circle back to optimize.

Feature verification

During feature validation, the SPDD workflow provides the /spdd-api-test command to generate functional testing scripts.

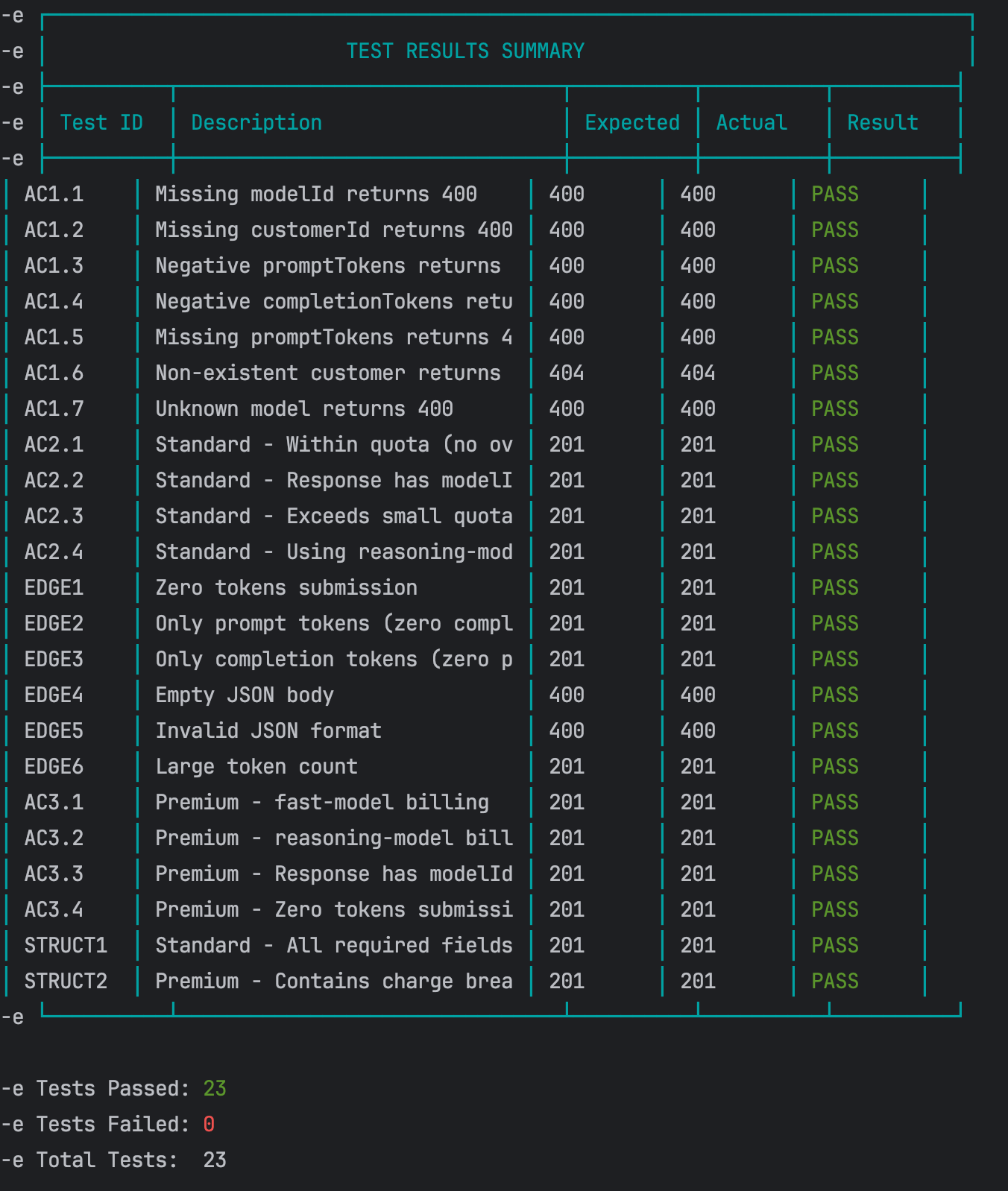

How spdd-api-test works

This command extracts API endpoint information from the code implementation or acceptance criteria and generates a cURL-based test script. The script includes a structured test-case table covering normal scenarios, boundary conditions, and error scenarios. When executed, it outputs expected-vs-actual comparison results.

Instruction:

/spdd-api-test

Generated artifact: the API test script.

Guided by the rules defined in the command, the AI generates a script that formulates the required test scenarios using curl commands.

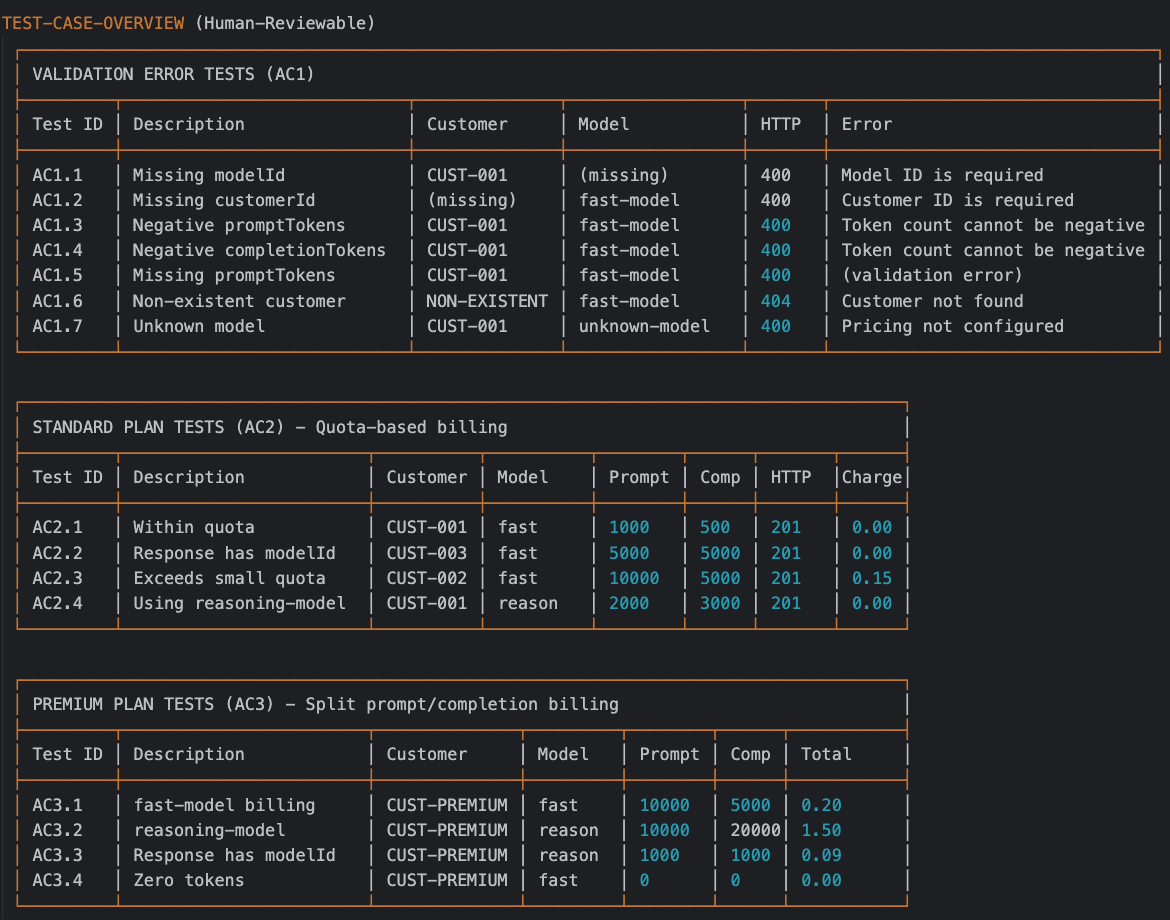

We can review these AI-generated scenarios in the “TEST CASE OVERVIEW” section of the script.

Generated API Test Script

Execution: once the script is generated, run it:

    sh scripts/test-api.sh

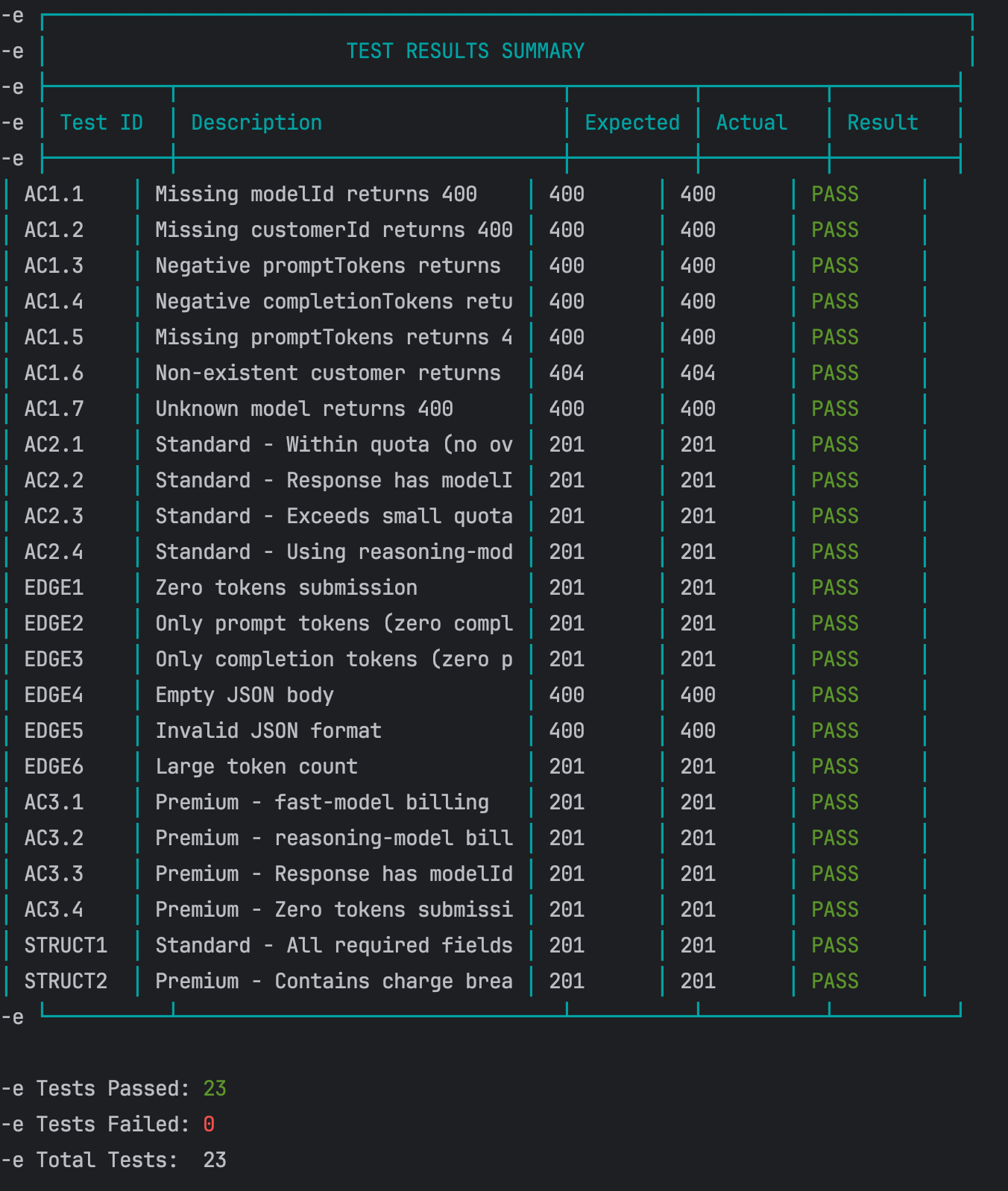

Result: all functional tests passed.

API Test Results

Code review & final adjustments

Thanks to the rigorous intent alignment in the first several steps, the heavy lifting is already done. At this stage, the remaining issues are usually minor logic discrepancies or surface-level code smells.

To maintain precision in our engineering practices, we categorize these remaining adjustments into two distinct types, based on whether they change the system's observable behavior, and handle them using different strategies within the SPDD workflow:

Two responses to code review changes

Logic corrections (behavior changes)

Strategy: update the prompt first, then generate code. For issues related to business rules or logic mismatches (which inherently change the observable behavior of the software), always update the structured prompt to lock in the correct intent before touching the code. This is an update or bug fix, not a refactoring.

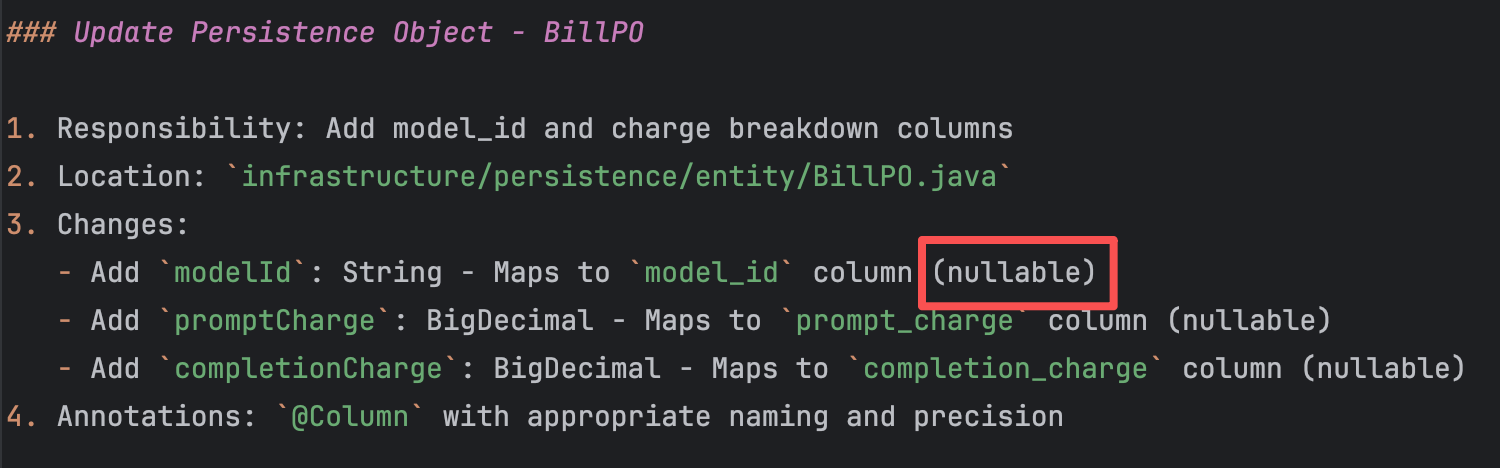

For instance, when persisting modelId in the bill, we currently allow this field to be nullable. The underlying reason is the need to maintain backward compatibility with historical data, which makes this workaround a reasonable architectural decision.

Prompt needs update

However, there is an alternative. If the business stakeholders can confirm what the modelId value should be for bills created before this change, we can unify the system's behavior and eliminate this potential technical debt. Let's assume that, after confirming with the business, the modelId for all historical bills should be set to fast-model.

With this clear intent, we interact with the AI:

How spdd-prompt-update works

This command incrementally updates the existing Canvas. It modifies only the sections affected by the change and preserves everything else. Based on the type of change (new requirement, architectural adjustment, or constraint change), it automatically determines which REASONS dimensions need updating.

This differs from /spdd-sync: sync flows from code to spec when code has changed; prompt-update flows from requirements to spec when requirements have changed.

Instruction:

/spdd-prompt-update @GGQPA-001-202603191105-[Feat]-multi-plan-billing-model-aware-pricing.md

model_id is a required field, and its default value is fast-model.

Based on this decision, update the corresponding parts of the structured prompt.

The AI updates the structured prompt based on this instruction.

Updated artifact: the updated structured prompt.

Once confirmed, use the /spdd-generate command to update the corresponding code based on the newly updated structured prompt:

/spdd-generate

@GGQPA-001-202603191105-[Feat]-multi-plan-billing-model-aware-pricing.md

The AI, guided by the rules defined within the /spdd-generate command, works out the required changes and performs targeted updates only on the affected code.

Updated artifact: the updated code.

Note that we do not regenerate the entire codebase. We continue using the existing structured prompt and the AI handles targeted diffs:

- Identify the mismatch: notice that the behavior of modelId during persistence is inconsistent with the new business requirement (it must be mandatory with a default).
- Target the prompt snippet: copy the specific section from the structured prompt that defines the old logic.
- Update the prompt: paste the extracted snippet into the chat alongside the revised business rule, instructing the AI to update the structured prompt first.
- Generate targeted code updates: once the prompt reflects the new truth, run /spdd-generate pointing to the updated file.

The AI automatically performs targeted diffs only on the affected code, rather than regenerating everything from scratch.

Refactoring (clean code & style)

“A change made to the internal structure of software to make it easier to understand and cheaper to modify without changing its observable behavior.” (Martin Fowler)

Strategy: refactor the code first, then sync back to the prompt. For structural or stylistic issues that do not change observable behavior, instruct the AI to refactor the code directly, and then use a sync command to update the prompt documentation.

For example, the AI-generated BillingServiceImpl class contains some hardcoded magic numbers that should be extracted into meaningful constants.

    private int calculateRemainingQuota(String customerId, PricingPlan plan) {
        if (plan.getMonthlyQuota() == null || plan.getMonthlyQuota() == 0) {
            return 0;
        }
        LocalDate currentDate = LocalDate.now(ZoneOffset.UTC);
        LocalDateTime monthStart = currentDate.withDayOfMonth(1).atStartOfDay();
        LocalDateTime monthEnd = currentDate.plusMonths(1).withDayOfMonth(1).atStartOfDay();
        Integer currentMonthUsage = billRepository.sumIncludedTokensUsedForMonth(customerId, monthStart, monthEnd);
        return plan.getMonthlyQuota() - currentMonthUsage;
    }

Instruction 1:

@BillingServiceImpl.java In the calculateRemainingQuota method, there are some magic numbers that should be extracted into constants

The AI executes the code refactoring based on this instruction (remember the golden rule: always refactor in small, incremental steps). If the output meets our expectations, we use the /spdd-sync command to synchronize these newly updated code details back to their corresponding locations within the structured prompt.
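As a rough idea of what such a refactoring might produce, the sketch below pulls the literals 0 and 1 out into named constants. It is not the AI's actual output: the class name, constant names, and the simplified signatures (plain values instead of the repository and PricingPlan) are assumptions chosen so the example runs on its own. The observable behavior is meant to match the original method.

```java
import java.time.LocalDate;
import java.time.LocalDateTime;

// Hypothetical result of the refactoring; names and signatures are assumptions.
class QuotaWindowSketch {

    private static final int NO_QUOTA = 0;
    private static final int FIRST_DAY_OF_MONTH = 1;
    private static final int ONE_MONTH = 1;

    // Start of the billing month that contains the given date.
    static LocalDateTime monthStart(LocalDate date) {
        return date.withDayOfMonth(FIRST_DAY_OF_MONTH).atStartOfDay();
    }

    // Start of the following month, used as the exclusive end of the window.
    static LocalDateTime nextMonthStart(LocalDate date) {
        return date.plusMonths(ONE_MONTH).withDayOfMonth(FIRST_DAY_OF_MONTH).atStartOfDay();
    }

    // Same rule as the original method: plans without a quota have nothing remaining.
    static int remainingQuota(Integer monthlyQuota, int usedThisMonth) {
        if (monthlyQuota == null || monthlyQuota == NO_QUOTA) {
            return NO_QUOTA;
        }
        return monthlyQuota - usedThisMonth;
    }

    public static void main(String[] args) {
        LocalDate date = LocalDate.of(2026, 3, 19);
        System.out.println(monthStart(date));              // 2026-03-01T00:00
        System.out.println(nextMonthStart(date));          // 2026-04-01T00:00
        System.out.println(remainingQuota(100000, 90000)); // 10000
    }
}
```

Because only names changed and no behavior did, this is the kind of edit that goes code first and is then synced back into the prompt.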

Instruction 2:

How spdd-sync works

This command compares the current code against the Canvas specification, then synchronizes code-side changes (refactoring, bug fixes, new components) back into the Canvas.

The goal is to keep the Canvas as an accurate design document for the current code, rather than an outdated historical record.

/spdd-sync

The AI summarizes the changes based on the rules defined in the /spdd-sync command. It then follows the structural requirements of the REASONS Canvas to write the detailed code description updates back into the corresponding sections of the structured prompt.

Once both instructions are executed, we can see all the prompt and code changes here.

For any deeper or hidden code smells, simply repeat these steps. The golden rule is to always keep the structured prompt synchronized with your latest codebase.

Regression test

Once all optimization is complete, restart the service and run the API test script one more time to ensure no core functionality was broken during the cleanup.

Result: all passed.

Regression Test Results

Step 6: Generate unit tests

Functional testing alone is insufficient for robust validation; it acts primarily as an auxiliary check and isn't factored into code coverage metrics. The final sign-off on core logic requires comprehensive unit tests. Currently, the SPDD workflow does not have dedicated testing commands finalized (these will be introduced in future iterations). As an interim solution, we use a template-driven approach to generate structured prompts for unit testing.

Generate the initial test prompt

We begin by combining the implementation details with our standardized testing template to generate a baseline test prompt.

Instruction:

@GGQPA-001-202603191105-[Feat]-multi-plan-billing-model-aware-pricing.md, combined with the template @TEST-SCENARIOS-TEMPLATE.md, please generate a test prompt file.

Deduplicate and refine scenarios

After generating the initial structured test prompt, some of the proposed test scenarios were duplicates of what we already had. To address this, we continued the conversation, instructing the AI to cross-reference the generated prompt with the existing test suite, identify the genuinely new scenarios, and remove any redundancies.

Instruction:

@GGQPA-001-202603191105-[Test]-multi-plan-billing-model-aware-pricing.md There are tests that are duplicated with existing ones, check the related tests that exist, and then only add tests for new scenarios

Updated artifact: the test structured prompt.
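In the workflow above the AI performs this cross-reference semantically. As a cruder mechanical cross-check that a reviewer could run alongside it, the sketch below compares scenario titles after normalizing case and punctuation. The function names and the idea of matching on titles are assumptions made for illustration, not part of the SPDD commands.

```python
import re


def normalize(title: str) -> str:
    """Lowercase a scenario title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def new_scenarios(proposed: list[str], existing: list[str]) -> list[str]:
    """Keep proposed scenarios whose normalized title is not already covered."""
    seen = {normalize(t) for t in existing}
    result = []
    for title in proposed:
        key = normalize(title)
        if key not in seen:
            seen.add(key)  # also drops duplicates within the proposed list
            result.append(title)
    return result
```

Title matching only catches near-identical wording. Two scenarios that test the same behavior under different names still need the semantic comparison described above.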

Generate the unit test code

Once the refined test scenarios are reviewed and confirmed, use the finalized test prompt to drive the actual code generation.

Instruction:

Based on the generated test prompt @GGQPA-001-202603191105-[Test]-multi-plan-billing-model-aware-pricing.md, please generate the corresponding unit test code.

Result: all tests passed. Commit for tests.

What this example delivered

This marks the conclusion of a complete SPDD workflow. Through this standardized process, we successfully delivered the following key outcomes:

- A business logic implementation with exceptionally high intent alignment (~99%).

- Full engineering transparency, including a clear understanding of the implementation path, technical decisions, and accepted trade-offs.

- A structured prompt asset tightly synchronized with the current codebase, laying a solid foundation for future iterations.

- Compounding human expertise, fostering a continuous accumulation of developer experience and mental models as we iterate collaboratively with the AI.

View the complete code diff for this enhancement on GitHub.

We’ve also prepared a bonus enhancement feature: Enterprise Plan Quantity-Based Tiered Billing. If you’re interested in getting some hands-on practice, we highly encourage you to tackle it using the SPDD workflow outlined above.

Three core skills

SPDD is a material change in how developers build software. In our work we have identified three core skills that they need in order to do their work effectively. These skills reflect where the value of developers is shifting in an AI-assisted world.

Abstraction first

design before you generate

Before generating any code, you need to be clear about what objects exist, how they collaborate, and where the boundaries are. Without that, AI often sprints on implementation details while the structure falls apart. The result is unclear responsibilities, duplicated logic, and inconsistent interfaces, and the cost shows up later in review and rework.

Alignment

lock intent before you write code

Before implementation, you need to make “what we will do / what we won’t do” explicit, and agree on the standards and hard constraints up front. Otherwise you end up with fast output and slow rework.

Iterative Review

turn output into a controlled loop

You want AI assistance to behave like an engineering process, not a one-shot draft. Without a disciplined review-and-iterate loop, teams either keep forcing the model to patch things until the solution drifts, or they restart repeatedly and lose control of cost and time.
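A controlled loop can be sketched in a few lines. In the sketch below, `generate` and `review` are hypothetical stand-ins for the model call and for a human or automated review step; neither is an SPDD command. The two properties it illustrates are that review findings are folded back into the prompt rather than patched into the output, and that a round budget keeps cost and time bounded.

```python
from typing import Callable


def controlled_loop(
    generate: Callable[[str], str],
    review: Callable[[str], list[str]],
    prompt: str,
    max_rounds: int = 3,
) -> tuple[str, list[str], int]:
    """Generate, review, and refine the prompt for at most max_rounds rounds.

    Returns (last_output, open_findings, rounds_used).
    """
    output, findings = "", []
    for round_no in range(1, max_rounds + 1):
        output = generate(prompt)
        findings = review(output)
        if not findings:
            return output, [], round_no
        # Feed findings back into the prompt instead of patching the output.
        prompt += "\n\nReview findings to address:\n" + "\n".join(
            f"- {finding}" for finding in findings
        )
    # Budget exhausted: hand the open findings back to a human.
    return output, findings, max_rounds
```

When the budget runs out with findings still open, that is a signal to revisit the abstraction or alignment steps rather than to keep retrying.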

Where SPDD fits

Fitness assessment

SPDD is an engineering investment. The table below rates how well it pays off by scenario, from highly recommended (5 stars) to not suitable (1 star).

| Rating | Scenario | Notes |
|---|---|---|
| ★★★★★ | Scaled, standardized delivery | High-repeat business logic that needs long-term maintainability (e.g., building many similar APIs, automating core business workflows). |
| ★★★★★ | High compliance and hard constraints | Environments where you must follow regulations, security standards, or strict architectural rules (e.g., financial core systems, multi-channel / multi-client deployments). |
| ★★★★☆ | Team collaboration and auditability | Multi-person delivery where changes need to be fully traceable and reviewable end-to-end. |
| ★★★★☆ | Cross-cutting consistency work | Complex refactors where logic must stay tightly synchronized across multiple microservices or different languages. |
| ★★☆☆☆ | Firefighting hotfixes | “Stop the bleeding” production fixes where speed matters more than architectural discipline. |
| ★★☆☆☆ | Exploratory spikes | When the goal is to validate an idea quickly rather than ship production-quality software, SPDD’s governance overhead won’t pay back. |
| ★★☆☆☆ | One-off scripts | Disposable data cleanup or temporary scripts where SPDD’s upfront cost is too high relative to the value. |
| ★☆☆☆☆ | Context black holes | When the domain is poorly defined and business rules are unclear, you can’t set meaningful boundaries for the model. |
| ★☆☆☆☆ | Pure creative / visual work | Tasks driven by taste and aesthetics rather than logic (e.g., UI visual exploration, marketing copy). |

Trade-offs to consider

Return on investment

| Benefit | Impact | Speed | What you get |
|---|---|---|---|
| Determinism | High | Immediate | Encode logic in a precise spec, which significantly reduces hallucination and “creative” interpretation. |
| Traceability | High | Immediate | Every meaningful change can be traced back to the structured prompt, closing the audit loop. |
| Faster reviews | High | Short-term | Code “arrives” closer to team standards, so reviews focus on logic and design, not formatting and cleanup. |
| Explainability | Medium-High | Gradual | Intent and behavior are visible at the natural-language level, lowering the cognitive load for understanding and maintenance. |
| Safer evolution | High | Long-term | Well-defined boundaries and stepwise implementation make targeted changes lower-risk and easier to iterate. |

Upfront investment

| Area | Barrier | Nature | What it takes |
|---|---|---|---|
| Mindset shift | High | Ongoing training | Teams have to adapt to “design first” rather than “code first.” |
| Senior expertise up front | Medium-High | Per-feature | Engineers who can translate business rules into clear abstractions and design constraints. |
| Automation tooling | Medium | Infrastructure setup | Without automation, SPDD hits a throughput ceiling and struggles to keep prompts consistent. openspdd runs the workflow in this article, from analysis and structured REASONS prompts through code and optional test support, as repeatable CLI steps, so artifacts stay versioned and reviewable instead of trapped in chat. Larger organizations may still layer a knowledge platform on top to manage and reuse assets at scale. |

Closing

By using the REASONS Canvas, clarifying intent, establishing the right abstractions, breaking work into concrete tasks, and locking in boundaries, we give AI a well-defined space to operate. Within that space, SPDD may not be the shortest path to “generate code quickly,” but it is one of the most reliable ways to deliver the right change with confidence.

It’s also fair to say that SPDD shines most in logic-heavy domains. In areas driven by aesthetic judgment, frontend styling, for example, we’re still exploring engineering patterns that can be as stable as purely logical construction.

The framework in this article is only the “moves.” The real advantage comes from sharpening the meta-skills behind it: abstraction and modelling, systematic analysis, and a deep understanding of the business as a whole. These are the human strengths that ultimately determine how much value we can get from AI.

In the AI era, software development is not a contest of model IQ. It’s a contest of engineer cognitive bandwidth: how clearly we can think, frame problems, and make decisions.

We’ll close with a quote that captures the spirit of SPDD:

“In science, if you know what you are doing, you should not be doing it. In engineering, if you do not know what you are doing, you should not be doing it.”

Acknowledgements

We would like to express our sincere thanks to Martin Fowler. Despite a busy schedule, he invested deeply in this article, from sharpening the narrative structure and clarifying key concepts, to elevating the visual storytelling with improved and new diagrams. His keen eye for detail and commitment to precision profoundly shaped the final result.

We’re also deeply grateful to Eric (Ke) Zhou, Wei Sun, Sara Michelazzo, Rebecca Parsons, Matteo Vaccari, May (Ping) Xu, Zhi Wang, Feng Chen and Da Cheng for their thoughtful critique and insights. Your input helped us clarify several key concepts that underpin the methodology.

We also want to acknowledge early practitioners: Jie Wang, Jian Gao, Yixuan Feng, Siyuan Li, Yixuan Li, Biao Tian, Wei Cheng, Qi Huang, and Yulong Li. Thank you for validating SPDD in real projects, and for your patience as the approach matured. Your frontline feedback has been foundational to making SPDD practical and robust.

Finally, in the spirit of practicing what we preach, this article itself was shaped with the assistance of large language models: Claude 4.5 Sonnet, Claude 4.6 Opus, Gemini 3.1 Pro, and ChatGPT 5.4. We relied on them for prose refinement, structural analysis, synthesizing suggestions, and as thought partners for continuous learning throughout the writing process. Their contributions are a fitting testament to the very approach this article describes.