Logging is a core developer responsibility that involves monitoring and recording events that happen in software. It's essential for tracking system behavior and debugging issues. Python's logging system provides a scalable alternative to print-based debugging (where developers use print() statements to inspect behavior) and allows applications to record events with:

- Defined severity.

- Consistent formatting.

- Flexible routing.

By separating message generation from delivery, logging makes it easier to monitor and diagnose application behavior across environments.

In this article, I draw on my 20 years of experience building production systems for global companies such as Hitachi and Alstom to explain the core concepts of Python logging. We'll look at loggers, levels, handlers, and formatters and show how messages flow through the logging pipeline. We'll also cover common configuration patterns, handler types, and best practices for building robust logging setups.

Core Concepts of Python Logging

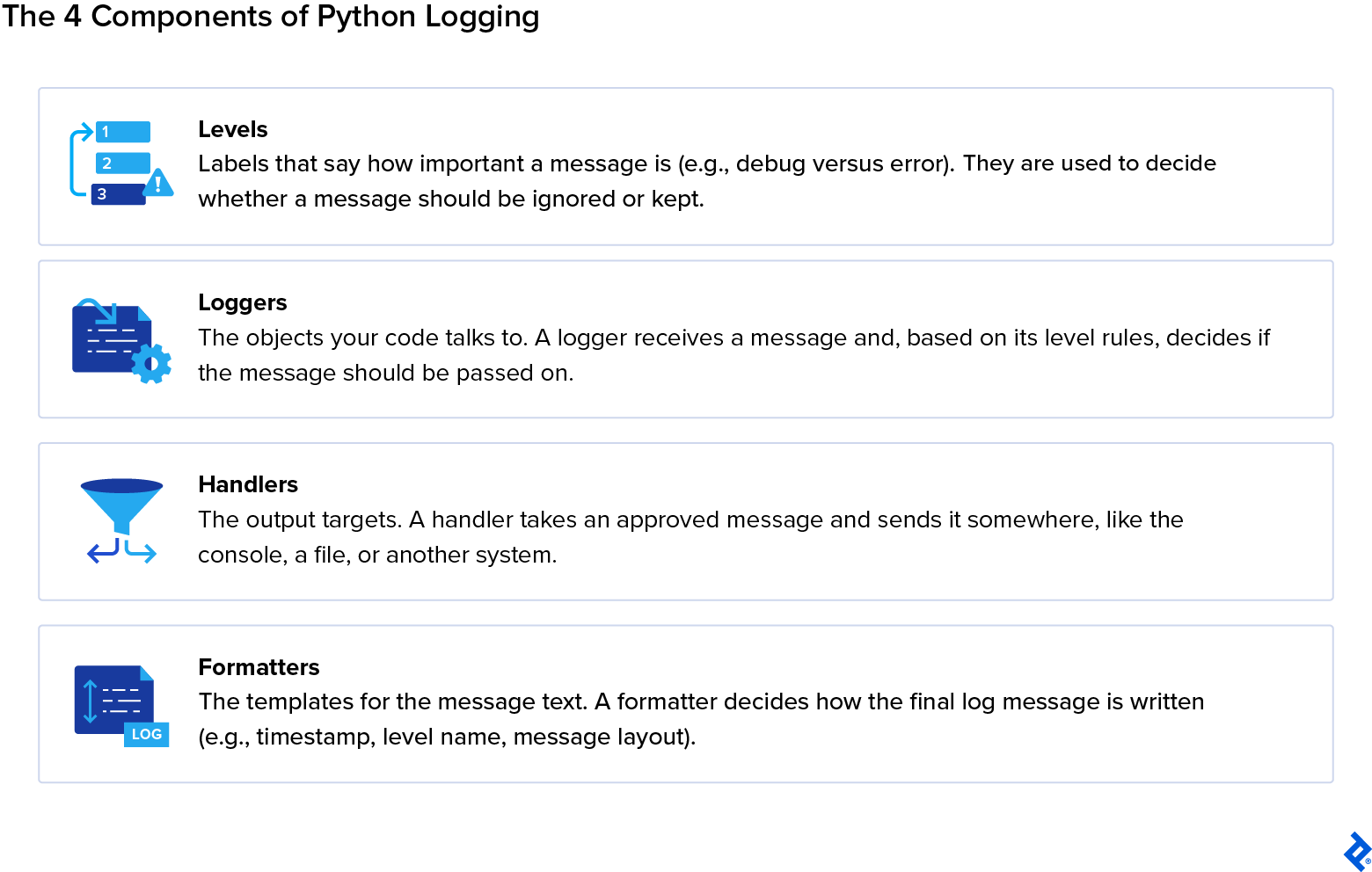

Python's built-in logging system is organized around four main abstractions: loggers, levels, handlers, and formatters. Each of these components has a specific function that determines how messages are classified, routed, and formatted.

- Loggers expose methods (.debug(), .info(), .warning(), .error(), .critical()) to generate logs and apply the logger-level filter. They have hierarchical names (e.g., app.module.submodule) and a level that filters which messages get processed. They're the entry point of the logging system.

- Levels define the severity of a message, from NOTSET up to CRITICAL, and control which messages loggers and handlers accept. A logger ignores messages below its configured level, and each handler can also have its own level filter.

- Handlers determine where a log message goes. Possible destinations include console streams, files, rotating files, syslog, email, network sockets, memory buffers, or queues. Multiple handlers can be attached to the same logger, and each can have its own formatter and severity level.

- Formatters control how log messages are rendered and can include metadata (e.g., timestamp, module, line number, thread or process IDs). They also allow custom string templates or structured formats like JSON. Each handler can use a different formatter to produce multiple output styles for the same message.

A typical logging flow starts with a developer calling a method on a logger (for example, logger.info("some message")). The logger then checks its level. If the logger accepts the message (that is, if the message's level isn't lower than the logger's threshold), it creates a LogRecord containing the message and metadata.

The LogRecord is then passed to all attached handlers. Each handler checks its own level and, if the record passes, applies its formatter. Finally, the handler emits the log to its destination. If propagation is enabled, the LogRecord then moves up to the parent loggers, and their handlers repeat the process. At this stage only the ancestors' handlers are consulted: the parent loggers' own levels and filters are not rechecked.
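The flow above can be sketched with two loggers that write into in-memory buffers. This is a minimal illustration, not a production setup: io.StringIO stands in for real destinations, and the logger names demo and demo.worker are arbitrary. It also shows the subtle point that a propagated record reaches the parent's handler even though the parent logger's own level is CRITICAL.

```python
import io
import logging

# In-memory buffers stand in for real destinations so the flow is easy to inspect.
parent_out = io.StringIO()
child_out = io.StringIO()

parent = logging.getLogger("demo")
parent.setLevel(logging.CRITICAL)              # the parent *logger* is very strict...
parent.addHandler(logging.StreamHandler(parent_out))

child = logging.getLogger("demo.worker")
child.setLevel(logging.INFO)                   # ...but only the child's level gates this call
child.addHandler(logging.StreamHandler(child_out))

child.debug("below INFO: rejected, no LogRecord is created")
child.info("accepted by the child")            # child's handler emits, then the record propagates

print("child handler: ", child_out.getvalue().strip())
print("parent handler:", parent_out.getvalue().strip())
```

Both buffers end up containing the INFO message. Once propagation starts, the record goes straight to the parent's handlers, whose own levels (NOTSET here) are the only remaining filter.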

Logging Levels

Python defines six ordered levels: NOTSET, DEBUG, INFO, WARNING, ERROR, and CRITICAL. Each has a specific use case and indicates how important a message is, which determines whether the message will be processed (a mechanism known as selective filtering).

Levels follow a severity hierarchy used to classify log messages. In ascending order of severity, they are:

- NOTSET (0): This level is essentially a placeholder indicating that no level has been explicitly set, so its numeric value is 0. If a logger's level is NOTSET, it inherits its effective level from its parent logger. Developers rarely use this level directly in code; its importance lies in deferring logging decisions to the embedding application.

- DEBUG (10): This level is useful for troubleshooting internal state, control flow, and variable values. It's also frequently used during development to visualize flow and data. For example, a developer may want to inspect the contents of a data structure to determine the best approach while building the application.

- INFO (20): This level is typically used for routine operational messages that describe application behavior, such as "User logged in," "Task completed successfully," or "Background job started." Administrators often rely on INFO logs to monitor system performance and activity.

- WARNING (30): It warns developers that something unexpected has happened, or that an existing condition could lead to future errors. Despite a WARNING, however, the application can continue running. An example of such a message could be: "Configuration file missing optional parameter, using default."

- ERROR (40): An ERROR points out a serious problem that prevents a program, or part of it, from running as intended. Typically, these are failures or exceptions that affect the program's logic or other critical operations, such as a missing required file or a database connection failure.

- CRITICAL (50): The highest level in the hierarchy, a CRITICAL message flags severe failures that could compromise the entire system. It usually requires immediate attention and is often used for failures such as data corruption or system crashes.

Filtering happens at two points in the logging pipeline:

- The logger-level threshold determines which messages (by severity) enter the logging system, discarding those below the logger's configured level.

- The handler-level threshold is more granular: each handler attached to a logger filters messages independently and emits only those that meet or exceed its configured level.
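The two filtering points can be shown with one logger and two handlers. This is a minimal sketch: the logger name filtering_demo is arbitrary, and two io.StringIO buffers stand in for a terminal and a detailed log file.

```python
import io
import logging

console_out = io.StringIO()   # stands in for the terminal
detail_out = io.StringIO()    # stands in for a detailed log file

logger = logging.getLogger("filtering_demo")
logger.setLevel(logging.INFO)                 # point 1: logger-level threshold

console = logging.StreamHandler(console_out)
console.setLevel(logging.WARNING)             # point 2: a stricter handler
detail = logging.StreamHandler(detail_out)    # NOTSET: emits everything the logger lets through
logger.addHandler(console)
logger.addHandler(detail)

logger.debug("cache miss")         # 10 < 20: dropped by the logger itself
logger.info("user logged in")      # passes the logger, filtered out by the console handler
logger.error("payment failed")     # passes both thresholds

# Levels are plain integers, so every check is a numeric comparison.
print(logging.DEBUG, logging.INFO, logging.WARNING, logging.ERROR, logging.CRITICAL)  # 10 20 30 40 50
```

The console buffer receives only "payment failed", while the detailed buffer receives both the INFO and the ERROR messages.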

Log Formatters

Log formatters define how log records are presented and enrich them with metadata. This metadata provides diagnostic clarity that helps developers and operators understand what happened and why. Useful metadata can include the following:

- Timestamps (%(asctime)s) show when the event occurred.

- Logger names (%(name)s) identify which part of the application generated the message.

- Modules or functions (%(module)s, %(funcName)s) identify specifically which module or function originated the message.

- Line numbers (%(lineno)d) flag the line number within the source file.

- Thread or process IDs (%(thread)d, %(process)d) are useful for identifying threads or processes in multithreaded or multiprocess applications.

- Severity levels (%(levelname)s) indicate the importance of the message at hand.
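A formatter that combines all of these fields might look like the sketch below. The field layout and the logger name app.billing are illustrative choices, and io.StringIO stands in for a real output stream.

```python
import io
import logging

# Every placeholder below is a standard LogRecord attribute.
FORMAT = (
    "%(asctime)s | %(name)s | %(levelname)s | "
    "%(module)s.%(funcName)s:%(lineno)d | pid=%(process)d tid=%(thread)d | %(message)s"
)

buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter(FORMAT, datefmt="%Y-%m-%d %H:%M:%S"))

logger = logging.getLogger("app.billing")
logger.setLevel(logging.DEBUG)
logger.addHandler(handler)

def charge_customer():
    logger.warning("Card declined, retrying")   # funcName/lineno point at this call

charge_customer()
print(buffer.getvalue(), end="")
```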

Customizing Log Output

Unlike logging levels, formatters are highly customizable for developers who want to tailor their log output to be as specific as possible.

There are several ways to configure log output. Most developers choose template string formatting, which relies on placeholders in a format string:

"%(asctime)s - %(name)s - %(levelname)s - %(message)s"

When log output needs to be machine-parsable and integrate with log collectors or other automated tooling, structured formats may be a better choice. In such cases, JSON or key-value pairs are used:

'{"time": "%(asctime)s", "logger": "%(name)s", "level": "%(levelname)s", "message": "%(message)s"}'

Note that a template like this doesn't escape quotes or newlines inside the message, so it produces JSON-like text rather than guaranteed-valid JSON.

Different handlers can use different formatters for the same event (handler-specific formatting), for example, readable text on the console and JSON for centralized ingestion. This makes logging to multiple destinations possible without duplicating log-generation code.

```python
import logging

logger = logging.getLogger("app")
logger.setLevel(logging.INFO)

# Console handler with a simple format
ch = logging.StreamHandler()
ch.setFormatter(logging.Formatter("%(levelname)s: %(message)s"))

# File handler with a JSON-like format (message text is not escaped,
# so quotes inside a message would break strict JSON parsing)
fh = logging.FileHandler("app.log")
fh.setFormatter(logging.Formatter('{"time": "%(asctime)s", "msg": "%(message)s"}'))

logger.addHandler(ch)
logger.addHandler(fh)

logger.info("Process started")
```

Handlers and Their Roles

Within the logging pipeline, handlers act as a routing layer between the logger and the output. Their core function is to dispatch log messages to their final destination. The same log record can be processed by multiple handlers at once, and each handler can apply independent formatting and severity filters.

Handlers support multiple simultaneous outputs, along with rotation policies, buffering, and remote delivery. Typical destinations include:

- Console: For real-time diagnostics and debugging.

- File: For a single, persistent log on disk without automatic rotation.

- Rotating files: For automatically managing log file growth by size or time.

- System logs: For system monitoring (via Unix syslog or the Windows Event Log).

- Email: For notifying stakeholders in case of system crashes or failures.

- Network: For logging over TCP or UDP to remote servers or collectors.

- Memory or queue: For buffering messages in memory or queues for nonblocking delivery.

Logger Behavior and Propagation

When dealing with logs, I've learned that it's important to have an organized system, especially in applications that generate numerous log files. A well-structured setup makes it easier to find your logs in case of failure and to see where problems originate.

Loggers use a hierarchical naming structure in which names are dot-separated and inherit their configuration from parent loggers. This allows centralized control in multimodule and, more generally, more complex applications.

Here is an example of a hierarchical naming structure for loggers:

app

app.database

app.database.connection

app.api

app.api.auth

While this hierarchy often mirrors the package or module structure for readability's sake, it's based strictly on string naming conventions rather than on the file system itself.

Each logger's parent is identified by stripping the rightmost dotted component. For example, for app.database, the parent is app, and the parent of app is the root logger.

Logging in Multimodule Applications

When an application is more complex and contains multiple modules, the value of this hierarchical naming structure becomes more apparent. Unless explicitly overridden, loggers inherit their effective level from their ancestors, and their records propagate to the ancestors' handlers, along with those handlers' formatters and filters. With shared parent loggers, it becomes easier to control logging behavior for an entire project.

In practice, the approach looks like this:

- The developer configures one main parent logger (e.g., app).

- All child loggers (app.api, app.db, etc.) automatically have their records delivered to the same handlers, producing logs with the same formatting and following the same severity rules.

- When needed, individual modules can override specific behavior. For example, app.database can attach a file handler with a higher severity level to capture only database errors without affecting the rest of the application logs.
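The pattern above can be sketched as follows. It's a minimal illustration under simple assumptions: the logger names come from the text, and two io.StringIO buffers stand in for the shared output and for a dedicated database-errors file.

```python
import io
import logging

app_out = io.StringIO()        # stands in for the shared console/file output
db_errors_out = io.StringIO()  # stands in for a dedicated database-errors file

# Configure the top-level "app" logger once.
app_logger = logging.getLogger("app")
app_logger.setLevel(logging.INFO)
shared = logging.StreamHandler(app_out)
shared.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
app_logger.addHandler(shared)

# Modules only ask for a logger (in a package, usually logging.getLogger(__name__)).
api_logger = logging.getLogger("app.api")
db_logger = logging.getLogger("app.database")

# Override for one subtree: an extra handler that keeps only database errors.
db_errors = logging.StreamHandler(db_errors_out)
db_errors.setLevel(logging.ERROR)
db_logger.addHandler(db_errors)

api_logger.info("request served")
db_logger.info("pool warmed up")
db_logger.error("connection refused")

print(db_logger.parent.name)  # app
```

The database error appears in both buffers: the extra handler adds a destination for that subtree without removing the shared one.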

Effective Log Level and the Role of NOTSET

While every logger has a level, what actually controls filtering is the effective log level. If the logger has an explicitly set level, that level is used (it is the effective level). If, on the other hand, the logger's level is set to NOTSET, Python walks up the hierarchy to find the first ancestor with a non-NOTSET level and uses it as the logger's effective level.

In code, the difference between a logger's level and its effective level looks like this:

- logger.level is the explicit level.

- logger.getEffectiveLevel() is what actually governs filtering.
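A quick way to see the difference is to compare both values on a small hierarchy. In this sketch, the names shop, shop.cart, and shop.cart.items are arbitrary examples.

```python
import logging

parent = logging.getLogger("shop")
parent.setLevel(logging.WARNING)

child = logging.getLogger("shop.cart")   # no level set, so it stays NOTSET

print(child.level)                 # 0  (NOTSET: nothing explicit)
print(child.getEffectiveLevel())   # 30 (inherited from "shop")

child.setLevel(logging.DEBUG)      # an explicit level takes precedence
print(child.getEffectiveLevel())   # 10

grandchild = logging.getLogger("shop.cart.items")
print(grandchild.getEffectiveLevel())  # 10: the nearest ancestor with a level is "shop.cart"
```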

Propagation: Tracking a Log's Journey

In Python logging, propagation determines whether a LogRecord, once processed by the logger's own handlers, continues up the logger hierarchy.

It can be set to True, which is the default (propagate=True), or False (propagate=False). When it's True, the log record is handled by the current logger's handlers, then passed to the parent logger's handlers, and so on through the grandparent all the way up to the root. If it's False, the record stops at the current logger and isn't received by the parent's handlers.
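Turning propagation off is a common way to keep a dedicated stream, such as an audit trail, out of the main log. The sketch below assumes hypothetical logger names (svc, svc.audit, svc.orders) and uses io.StringIO buffers in place of real files.

```python
import io
import logging

svc_out = io.StringIO()     # stands in for the application's main log
audit_out = io.StringIO()   # stands in for a separate audit trail

svc = logging.getLogger("svc")
svc.setLevel(logging.INFO)
svc.addHandler(logging.StreamHandler(svc_out))

audit = logging.getLogger("svc.audit")
audit.addHandler(logging.StreamHandler(audit_out))
audit.propagate = False     # records stop here and never reach "svc" or root

logging.getLogger("svc.orders").info("order created")   # propagates up to "svc"
audit.info("user 42 exported data")                     # stays in the audit trail
```

The propagate flag only controls where records go. svc.audit still inherits its effective level (INFO) from svc.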

Sometimes propagation causes duplicate logs. This happens when both the child and parent loggers have handlers and propagate is set to True, so the same LogRecord is emitted at every level that has a handler.

This is a common mistake in Python logging, but it's easily avoided by configuring handlers on one top-level application logger and letting child loggers inherit its behavior instead of adding handlers to every module.
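The duplication and its fix can be reproduced in a few lines. This is a minimal sketch with arbitrary logger names (dup, dup.module) and a single io.StringIO buffer shared by both handlers, so each emitted copy is easy to count.

```python
import io
import logging

out = io.StringIO()

parent = logging.getLogger("dup")
parent.setLevel(logging.INFO)
parent.addHandler(logging.StreamHandler(out))

child = logging.getLogger("dup.module")
child.addHandler(logging.StreamHandler(out))   # the mistake: a per-module handler

child.info("hello")                             # emitted by the child's handler AND the parent's
copies_with_child_handler = out.getvalue().count("hello")

# The fix: drop per-module handlers and let propagation route records upward.
for handler in list(child.handlers):
    child.removeHandler(handler)

out.seek(0)
out.truncate()
child.info("hello")
copies_after_fix = out.getvalue().count("hello")
print(copies_with_child_handler, copies_after_fix)  # 2 1
```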

Another cause of duplicate logs that I often encounter is multiple threads trying to log the same event at once. The simplest way to prevent this is to have a central logging system outside the threads, so each event is recorded just once.

Python Logging Handlers Explained

In Python's logging system, handlers form the delivery and routing layer. They define where log messages will be delivered, including console streams, files, rotating archives, network sockets, system logs, email systems, or in-memory buffers.

Every handler is responsible for emitting a LogRecord to a specific destination while applying its own filtering policy (severity, custom filters), formatting (plaintext, structured JSON, machine-readable fields), and I/O semantics (synchronous, asynchronous, buffered, rotating, networked). A single logger can have multiple handlers.

StreamHandler and FileHandler

StreamHandler is the primary handler for development and interactive sessions, and for containerized environments where stdout/stderr is captured by orchestration systems (for example, Kubernetes, Docker, or serverless platforms). It usually goes hand in hand with human-readable formats. It delivers logs to file-like streams such as sys.stdout or sys.stderr (the default when no stream is given).

FileHandler writes log entries to a specified file path. It's best suited for simple, persistent logging in applications that can rely on local disk storage. Because it never rotates, log volume has to be managed separately.

These foundational handlers introduce the core mechanics of level filtering, message formatting, and basic emission behavior.

RotatingFileHandler and TimedRotatingFileHandler

RotatingFileHandler rotates files once they reach a configured maximum size, which prevents unbounded disk usage, and keeps a configured number of archived log files. It's common in always-on services such as API servers or back-end daemons.

TimedRotatingFileHandler rotates files at fixed time intervals, producing time-stamped log sets (ideal for archival and batch analysis), and suits workloads that generate predictable daily or hourly log volumes.
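Both rotation policies can be configured side by side, as in the sketch below. The file names, the 1,024-byte limit, and the backup counts are illustrative assumptions, and a temporary directory keeps the demo self-contained.

```python
import logging
import os
import tempfile
from logging.handlers import RotatingFileHandler, TimedRotatingFileHandler

log_dir = tempfile.mkdtemp()

# Size-based: roll over when the next record would push the file to 1,024 bytes
# or more, keeping at most 3 archives (service.log.1 ... service.log.3).
size_handler = RotatingFileHandler(
    os.path.join(log_dir, "service.log"), maxBytes=1024, backupCount=3, encoding="utf-8"
)

# Time-based: roll over at midnight and keep 7 dated archives.
time_handler = TimedRotatingFileHandler(
    os.path.join(log_dir, "daily.log"), when="midnight", backupCount=7, encoding="utf-8"
)

logger = logging.getLogger("rotation_demo")
logger.setLevel(logging.INFO)
logger.addHandler(size_handler)
logger.addHandler(time_handler)

for i in range(100):               # about 2,000 bytes in total, so one size-based rollover
    logger.info("request %03d handled", i)

size_handler.close()
time_handler.close()
print(sorted(os.listdir(log_dir)))
```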

Python's standard library doesn't include more specialized filesystem handlers, such as compression handlers or OS-integrated rotation daemons, but RotatingFileHandler and TimedRotatingFileHandler are considered the "advanced" options among the built-ins (their namer and rotator hooks can be used to add behavior such as compressing archives).

Community Handlers (SocketHandler, DatagramHandler)

Network handlers (SocketHandler for TCP and DatagramHandler for UDP) transmit serialized log records to remote endpoints. TCP is valued for reliability, while UDP is used for low latency. These handlers are a good fit for distributed systems and microservices with centralized ingestion.

System and E-mail Handlers (SysLogHandler, NTEventLogHandler, SMTPHandler)

SysLogHandler delivers messages to Unix/Linux syslog daemons. It's used on servers where /var/log and syslog are managed by standard operational pipelines.

NTEventLogHandler sends logs to the Windows Event Log (it requires the pywin32 extensions). It works well in enterprise Windows environments.

SMTPHandler sends log messages by email, primarily for alerting purposes.
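A typical alerting setup restricts SMTPHandler to high-severity records. In this sketch the mail server, addresses, credentials, and logger name are placeholders, not working values. Constructing the handler doesn't open a connection, so nothing is sent until a qualifying record is logged.

```python
import logging
from logging.handlers import SMTPHandler

# Hypothetical mail server, addresses, and credentials: replace with real values.
alert_handler = SMTPHandler(
    mailhost=("smtp.example.com", 587),
    fromaddr="alerts@example.com",
    toaddrs=["oncall@example.com"],
    subject="[billing-service] error alert",
    credentials=("alerts@example.com", "app-password"),
    secure=(),          # an empty tuple means "use STARTTLS" with default settings
    timeout=10.0,
)
alert_handler.setLevel(logging.ERROR)   # only ERROR and CRITICAL records send email

logger = logging.getLogger("billing")
logger.addHandler(alert_handler)

# No connection is made until a record is emitted, e.g.:
# logger.critical("Ledger out of sync")  -> one email per record
```

SMTPHandler opens a new SMTP connection for every record it emits, which is one more reason to reserve it for rare, high-severity events.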

MemoryHandler, QueueHandler, QueueListener

MemoryHandler buffers records in memory and flushes them to a target handler conditionally, either when a size threshold is reached or when a severe log event occurs. It can be helpful in performance-sensitive or embedded environments.

QueueHandler pushes log records into a queue (such as queue.Queue or a multiprocessing queue).

QueueListener consumes queued records and processes them with one or more actual handlers in a background thread. It's usually paired with QueueHandler and is well suited to web servers, concurrent applications, or systems with high log volume.
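MemoryHandler's flush-on-severity behavior is useful for capturing the context that led up to an error. The sketch below assumes a capacity of 100 records and uses an io.StringIO-backed StreamHandler as the target. The logger name memory_demo is arbitrary.

```python
import io
import logging
from logging.handlers import MemoryHandler

out = io.StringIO()
target = logging.StreamHandler(out)      # the "real" destination

# Buffer up to 100 records; flush early as soon as a record at ERROR or above arrives.
buffered = MemoryHandler(capacity=100, flushLevel=logging.ERROR, target=target)

logger = logging.getLogger("memory_demo")
logger.setLevel(logging.DEBUG)
logger.addHandler(buffered)

logger.debug("step 1 ok")
logger.debug("step 2 ok")
before_error = out.getvalue()            # still empty: records sit in the buffer
logger.error("step 3 failed")            # triggers a flush of all three records
after_error = out.getvalue()

buffered.close()                          # flushes anything left (flushOnClose defaults to True)
```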

Superior Handlers (NullHandler, WatchedFileHandler, BaseRotatingHandler)

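NullHandler prevents unwanted output from packaged libraries. Library authors attach it to their top-level logger so that, when an importing application hasn't configured logging, the library's records don't fall through to Python's last-resort handler, which prints WARNING and higher messages to stderr (older Python versions printed a "No handlers could be found" warning instead).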
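The library-side pattern is a single line. In this sketch, mylib is a hypothetical package name, and redirecting stderr simulates an application that never configured logging.

```python
import contextlib
import io
import logging

# --- inside a library package (hypothetical name "mylib") ---
lib_logger = logging.getLogger("mylib")
lib_logger.addHandler(logging.NullHandler())   # the library configures nothing else

def fetch():
    lib_logger.warning("retrying request")

# --- an application that never configured logging ---
captured = io.StringIO()
with contextlib.redirect_stderr(captured):
    fetch()                                     # nothing is printed to stderr
```

An application that does want the library's records just configures logging as usual (for example, with logging.basicConfig()). The records then propagate from mylib to the root handlers.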

WatchedFileHandler is designed for Unix environments where external rotation tools (such as logrotate) manage the underlying log files. Rather than assuming Python controls rotation, it checks the log file's device and inode on each write. If an external rotation has moved or replaced the file, the handler automatically closes the old file and opens a new one at the original path.
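External rotation can be simulated by renaming the file between two writes. This sketch assumes a POSIX system, because renaming an open file generally fails on Windows. The temporary path and the logger name watched_demo are illustrative.

```python
import logging
import os
import tempfile
from logging.handlers import WatchedFileHandler

log_path = os.path.join(tempfile.mkdtemp(), "app.log")
handler = WatchedFileHandler(log_path, encoding="utf-8")

logger = logging.getLogger("watched_demo")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

logger.info("before rotation")

# Simulate an external tool such as logrotate moving the file aside.
os.rename(log_path, log_path + ".1")

logger.info("after rotation")   # the handler sees the path changed and reopens it
handler.close()

with open(log_path, encoding="utf-8") as f:
    current = f.read()
with open(log_path + ".1", encoding="utf-8") as f:
    rotated = f.read()
print(repr(rotated), repr(current))
```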

BaseRotatingHandler is the base class behind Python's rotation-related handlers. It implements the shared mechanics for deciding when rotation should occur, managing file handles, and performing rollover. Although it's rarely used directly, it clarifies how rotation triggers, file handles, and rollover mechanisms are inherited by the rotating handlers.

Logging to Multiple Destinations

Any logger can have multiple handlers attached at the same time, and each handler can have its own formatter and severity level. This means a single log event can appear in several places at once (for example, human-readable text in the console, structured JSON in a file for ingestion, and a critical alert sent by email) without modifying the application's logging calls.

Configuring Logging for Real Applications

Logging to Multiple Destinations

As we saw, a single logger can have multiple handlers attached. Each handler applies its own severity threshold and formatter, producing different output representations of the same underlying log event. The handlers then emit that event to files, consoles, remote collectors, and buffers, avoiding code duplication entirely.

Handlers can target different destinations simultaneously. Examples include:

- Console output for developers.

- Files for auditing or debugging.

- Network endpoints for aggregation.

- Buffers for deferred processing.

Python's ability to log to multiple destinations shows how cleanly it fans out logs across a mix of targets. It also isolates delivery problems and failures: the standard handlers catch exceptions raised during emission and report them through handleError(), so a failure in one handler doesn't stop the others.

Formatter and Filter Customization

Formatters control how log records are rendered. They define which fields appear, in what order, and whether the output is plaintext or structured.

Filtering happens earlier in the pipeline than formatting: it operates directly on LogRecord data and determines whether a record proceeds to formatting at all.

Because filters see the record before it's formatted, they can also modify it. Common uses include:

- Attaching contextual data (such as request or correlation IDs, user and session information, etc.).

- Suppressing low-value or irrelevant messages.

- Routing specific categories of messages to selected handlers.

More advanced setups often rely on structured output rather than free-form text. JSON formatting, contextvars, and key-value records allow contextual data to flow through async code paths and across service boundaries without being threaded through every function call.
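A filter that reads a context variable can both enrich and suppress records. This is a minimal sketch: the request_id variable, the health-check rule, and the logger name web are illustrative assumptions. The filter sits on the handler, so it also sees records that propagate from child loggers. A filter on the logger would see only records logged directly on it.

```python
import contextvars
import io
import logging

# Hypothetical context variable holding the current request's correlation ID.
request_id = contextvars.ContextVar("request_id", default="-")

class RequestContextFilter(logging.Filter):
    """Attach request_id to each record and drop noisy health-check messages."""

    def filter(self, record):
        record.request_id = request_id.get()                 # enrich the record in place
        return "/healthcheck" not in record.getMessage()     # False suppresses the record

out = io.StringIO()
handler = logging.StreamHandler(out)
handler.addFilter(RequestContextFilter())
handler.setFormatter(logging.Formatter("[%(request_id)s] %(levelname)s %(message)s"))

logger = logging.getLogger("web")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

def handle_request(rid):
    token = request_id.set(rid)      # visible to everything this call (or asyncio task) runs
    try:
        logger.info("GET /healthcheck")
        logger.info("GET /orders")
    finally:
        request_id.reset(token)

logger.info("worker started")        # outside any request: default "-"
handle_request("req-7f3a")
print(out.getvalue(), end="")
```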

Some considerations remain purely practical rather than architectural:

- Custom timestamps align logs across multiple environments.

- Colorized output makes logs more readable during debugging.

- Multiline templates make room for stack traces and other diagnostic details.

Customized Handlers and Structured Logging

With custom handler classes, developers can extend Python's logging system to emit log records to destinations such as HTTP APIs, message queues, databases, and external storage systems.

Domain-specific delivery logic can be integrated into applications easily by subclassing logging.Handler, while still participating fully in the standard logging pipeline.
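A custom handler only needs to override emit(). The ListHandler below is a hypothetical stand-in: it appends formatted records to a list where a real handler would call an HTTP API, a queue client, or a database driver.

```python
import logging

class ListHandler(logging.Handler):
    """Hypothetical custom handler that stores formatted records in a list.

    A real handler would deliver them elsewhere inside emit(), e.g. POST to
    an HTTP API, publish to a message queue, or insert into a database.
    """

    def __init__(self, level=logging.NOTSET):
        super().__init__(level)
        self.records = []

    def emit(self, record):
        try:
            message = self.format(record)   # uses whatever formatter is set on the handler
            self.records.append(message)    # replace with the real delivery call
        except Exception:
            self.handleError(record)        # report delivery problems without crashing the app

handler = ListHandler(level=logging.WARNING)
handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))

logger = logging.getLogger("inventory")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

logger.warning("disk at %d%%", 91)
logger.info("below the handler's level, so not stored")
print(handler.records)   # ['WARNING:inventory:disk at 91%']
```

Because the class inherits from logging.Handler, it gets level filtering, filters, formatters, and the per-handler lock for free. Only the delivery step is custom.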

Structured JSON logging takes a different approach to log output: instead of free-form text, each event is emitted as structured key-value data. This makes logs easier to correlate across requests, trace through services, and index downstream. In distributed and microservice environments, this structure becomes essential rather than optional.
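A small Formatter subclass is enough for structured output with only the standard library. This is a minimal sketch, not a replacement for a dedicated library. The extra={"fields": {...}} convention for passing key-value data is an assumption made for this example. Unlike a string template, json.dumps escapes quotes and newlines, so each line is valid JSON.

```python
import io
import json
import logging

class JsonFormatter(logging.Formatter):
    """Minimal structured formatter: one JSON object per line."""

    def format(self, record):
        payload = {
            "time": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "logger": record.name,
            "level": record.levelname,
            "message": record.getMessage(),
        }
        # Assumed convention: extra key-value data arrives via extra={"fields": {...}}.
        payload.update(getattr(record, "fields", {}))
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload, default=str)   # escapes quotes; str() for odd types

out = io.StringIO()
handler = logging.StreamHandler(out)
handler.setFormatter(JsonFormatter())

logger = logging.getLogger("auth")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

logger.info('user "alice" logged in', extra={"fields": {"user_id": 42, "method": "sso"}})
print(out.getvalue(), end="")
```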

Structured-logging libraries such as structlog and Loguru integrate with (or, in Loguru's case, largely replace) Python's logging framework and provide additional pipelines for structured formatting, contextual field injection, and simplified configuration.

Avoiding Common Pitfalls

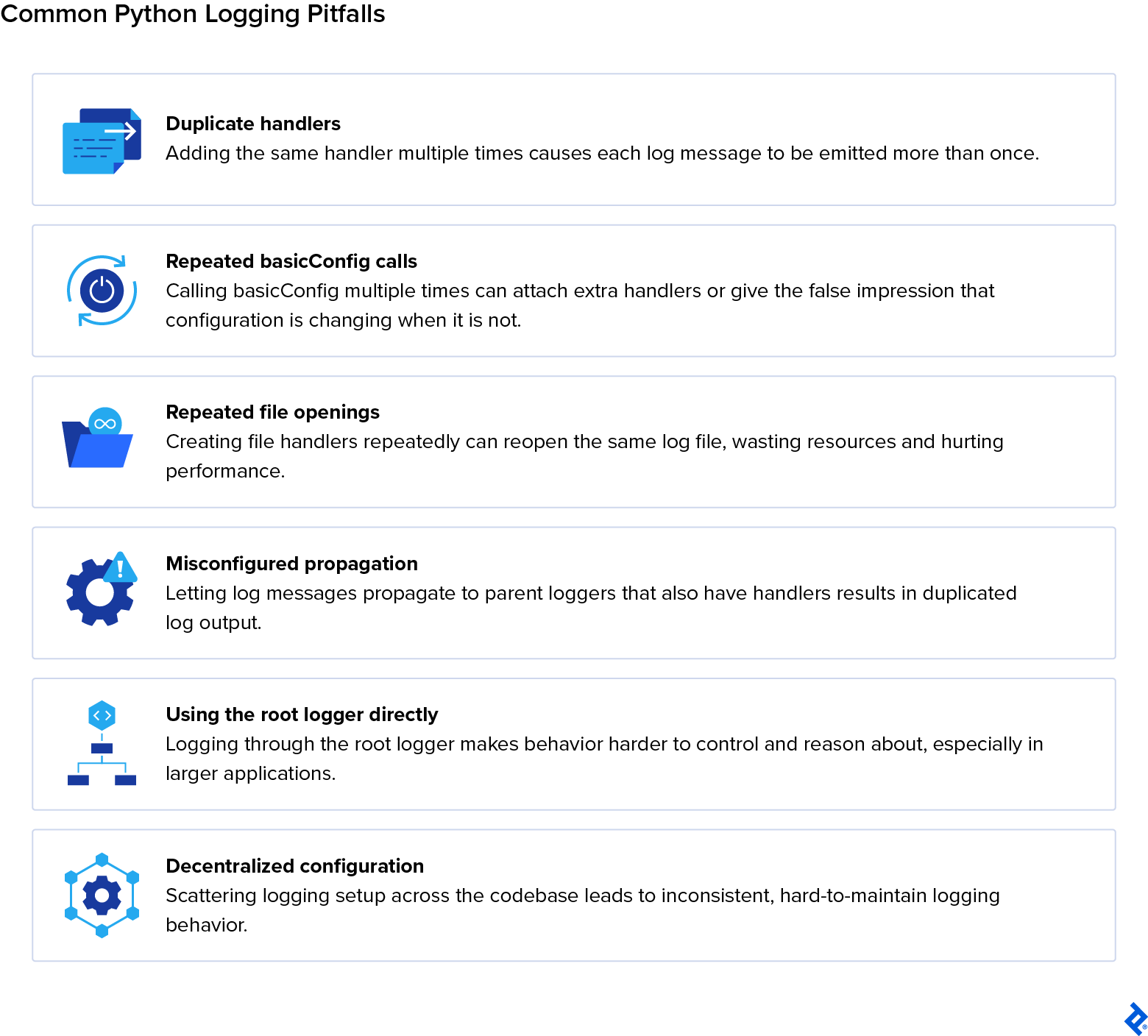

Duplicate handlers can be a source of inflated log volume. This commonly happens when handlers are attached in multiple modules or when configuration code runs more than once. Repeatedly running setup code that adds handlers or opens files can also inflate logs and/or degrade application performance. (logging.basicConfig() itself does nothing when the root logger already has handlers, unless it's called with force=True.)

Misconfigured propagation can cause log records to be forwarded to parent loggers that also have handlers attached, resulting in the same message being emitted multiple times. This typically happens when both child and parent loggers define handlers while propagation remains enabled.

These issues can be solved by avoiding direct use of the root logger and centralizing logging configuration in application-level loggers, which ultimately helps maintain predictable behavior.
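One way to make configuration safe to run more than once is to check for existing handlers first. The configure_logging() function and the myapp logger name below are hypothetical, and io.StringIO stands in for the real output.

```python
import io
import logging

def configure_logging(stream):
    """Hypothetical single entry point for application logging; safe to call twice."""
    app_logger = logging.getLogger("myapp")
    if app_logger.handlers:          # already configured: don't stack another handler
        return app_logger
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
    app_logger.addHandler(handler)
    app_logger.setLevel(logging.INFO)
    return app_logger

out = io.StringIO()
configure_logging(out)
configure_logging(out)               # e.g. invoked from two different entry points

# Modules log through children of "myapp" and never add handlers themselves.
logging.getLogger("myapp.jobs").info("job finished")
```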

The disable_existing_loggers Pitfall in dictConfig

When using logging.config.dictConfig(), one setting often causes silent production issues: "disable_existing_loggers": True

This option defaults to True. It disables every logger that already exists unless that logger (or one of its ancestors) is explicitly defined in the configuration dictionary.

Internally, this sets: logger.disabled = True

A disabled logger drops all records without warning.
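The pitfall is easy to reproduce. In this sketch, thirdparty.client is a hypothetical logger standing in for one that a library creates at import time. The configuration omits disable_existing_loggers, so the default of True applies.

```python
import logging
import logging.config

# Created at import time, e.g. by a hypothetical third-party client library.
early = logging.getLogger("thirdparty.client")

LOGGING = {
    "version": 1,
    # "disable_existing_loggers" is omitted, so it defaults to True.
    "handlers": {
        "console": {"class": "logging.StreamHandler", "stream": "ext://sys.stdout"},
    },
    "root": {"level": "INFO", "handlers": ["console"]},
}
logging.config.dictConfig(LOGGING)

early.warning("this record is silently dropped")
print(early.disabled)   # True
```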

Why This Breaks Real Applications

In modern Python systems, many loggers are created before configuration runs:

- Frameworks (Django, FastAPI, Celery)

- Servers (Gunicorn, Uvicorn)

- Libraries (SQLAlchemy, Requests)

- Your own modules at import time

If dictConfig() runs afterward with disable_existing_loggers=True, these loggers are silently disabled unless they're redefined.

The result: logs disappear. Worse, there's no error or traceback, just silence.

A Safer Method

For most real-world applications, I recommend a safer approach:

"disable_existing_loggers": False

This setting preserves existing loggers and avoids the silent loss of third-party or framework logs. You still need to manage handler duplication carefully, but duplicate logs are easier to detect than missing ones.

Here are some general guidelines:

- Applications: Use "disable_existing_loggers": False.

- Libraries: Don't configure logging; add a NullHandler.

- Fully managed systems: Use True only if you explicitly configure every logger.
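An application-level dictConfig that follows these guidelines might look like the sketch below. The logger names and formats are illustrative. Handlers are attached only to the root logger, so child loggers reach them through propagation instead of stacking their own.

```python
import logging
import logging.config

early = logging.getLogger("thirdparty.client")   # created before configuration runs

LOGGING = {
    "version": 1,
    "disable_existing_loggers": False,          # keep loggers that already exist
    "formatters": {
        "plain": {"format": "%(asctime)s %(name)s %(levelname)s %(message)s"},
    },
    "handlers": {
        "console": {
            "class": "logging.StreamHandler",
            "formatter": "plain",
            "stream": "ext://sys.stdout",
        },
    },
    "loggers": {
        "app": {"level": "DEBUG"},              # application code: more detail, no extra handlers
    },
    "root": {"level": "INFO", "handlers": ["console"]},   # handlers live in one place
}
logging.config.dictConfig(LOGGING)

early.info("still delivered after configuration")
```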

Logging in Scalable, Multimodule, and Multithreaded Systems

In a large application, scaling logging can be challenging. As systems evolve and grow, problems that are easy to dismiss in smaller codebases tend to emerge. Scaling-related blockers can show up as duplicated messages, inconsistent formatting, race conditions (caused by concurrent writes to shared handlers or files), and unexpected performance costs.

Ensuring multimodule logger consistency and following multiprocess logging best practices is key to avoiding overhead when building larger projects.

Multimodule Logger Consistency

In multimodule applications, one way to keep everything on track is naming consistency. Python's logging hierarchy is string-based, which means logger names define how configuration is inherited. A disciplined use of dotted logger names is the right way to establish uniform behavior. When modules follow the same naming prefix, they naturally fall under the same configuration and behave consistently.

With a centralized logging configuration, formatting, severity levels, routing, and filters are defined only once and applied everywhere. This way, different modules don't risk silently diverging in behavior because of ad hoc handler attachment or local configuration; a single configuration entry point becomes the one source of truth for logging behavior.

As we saw earlier, logger inheritance lets child loggers adopt parent settings and reduces granular maintenance effort.

Thread-Safe and Multiprocess Logging

In multithreaded applications, logging must ensure that concurrent log emissions don't interfere with one another. Python's built-in handlers serialize writes internally with a per-handler lock, which prevents interleaved log lines or partially written messages.

However, while thread safety ensures correctness, it doesn't eliminate performance costs. Synchronous handlers can still introduce contention when many threads log concurrently.

Multiprocess logging is more complex. When multiple processes write to the same log file, contention can corrupt output or lose messages. Standard file handlers are thread-safe but not process-safe, so shared access must be coordinated. Developers tend to approach this with strategies such as routing logs through a single process, using network-based handlers, or delegating writes to an external collector.

A good solution for both threading and multiprocessing scenarios is a queue-based logging pipeline. A QueueHandler pushes log records into a thread- or process-safe queue, while a QueueListener in a separate thread or process handles formatting and emission. This decouples log creation from I/O and reduces blocking.

In scalable systems, reliable logging comes from using thread-safe handlers, process-aware routing, and pipelines that are nonblocking by design.

QueueHandler and QueueListener Patterns

Both components of a queue-based logging pipeline play essential roles for developers who work with complex systems:

- QueueHandler: A logging handler that pushes log records into a thread-safe or process-safe queue instead of writing them directly to a file or console.

- QueueListener: A companion object that runs in a separate thread (which can itself live in a dedicated logging process), consuming records from the queue and dispatching them to the configured handlers (e.g., file, console, network).

When QueueHandler sends logs into the queue, it ensures that worker threads or processes don't block on I/O. QueueListener consumes the records it receives asynchronously and then delivers them to the appropriate destinations. This approach lets the application run smoothly without waiting for logging operations to complete.
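The pattern can be sketched with a few worker threads sharing one queue. This is a minimal illustration under stated assumptions: an unbounded queue.Queue, a single io.StringIO-backed sink handler, and the arbitrary logger name queue_demo. In production, the sink would be a file, network, or other slow handler.

```python
import io
import logging
import queue
import threading
from logging.handlers import QueueHandler, QueueListener

log_queue = queue.Queue(-1)                  # unbounded queue shared by producers

# The "real" handlers live only on the listener side and do the slow I/O.
out = io.StringIO()
sink = logging.StreamHandler(out)
sink.setFormatter(logging.Formatter("%(threadName)s %(message)s"))
listener = QueueListener(log_queue, sink, respect_handler_level=True)
listener.start()                             # background thread drains the queue

logger = logging.getLogger("queue_demo")
logger.setLevel(logging.INFO)
logger.addHandler(QueueHandler(log_queue))   # producers only enqueue records
logger.propagate = False

def worker(n):
    for i in range(3):
        logger.info("worker %d step %d", n, i)

threads = [threading.Thread(target=worker, args=(n,), name=f"worker-{n}") for n in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

listener.stop()                              # processes remaining records, then joins the thread
lines = out.getvalue().splitlines()
print(len(lines))  # 12
```

Call listener.stop() at shutdown (for example, via atexit). Otherwise, records still in the queue may never be written.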

Network and Distributed Logging

Modern systems generate vast amounts of operational data, and managing that flow is critical to reliability. Logs must move from local services into centralized collectors and pipelines, where they're indexed and analyzed. Distributed logging layers give developers better visibility into the messages that matter for their work.

TCP and UDP Logging Differences

The main difference between TCP (Transmission Control Protocol) and UDP (User Datagram Protocol) is how they handle connections and reliability. With UDP, there's no guarantee that the data actually reaches the receiver. That can work for logs that are sent repeatedly (such as every second), since messages are constantly superseded by incoming ones. But for one-off or critical log events, I consider TCP the safer choice, because the receiver must acknowledge the data before the sender discards it from its send buffer. For agent-specific cases, the best protocol ultimately depends on the nature of the information being transmitted.

Choosing the right protocol depends on system requirements: TCP is a good choice for authoritative audit logs, error reporting, and critical event capture, while UDP suits lightweight, low-latency diagnostic streams where occasional packet loss is an acceptable trade-off.

Here's how they differ at their core:

- TCP is reliable: Built for orderly, guaranteed delivery, TCP ensures that every packet is acknowledged, retransmitted if lost, and reassembled in sequence. The trade-off, however, is performance. Such precision and reliability come with some overhead, which can slow down log ingestion.

- UDP is fast: It sends packets without establishing a connection, without acknowledgments, and without guarantees of arrival. While this clearly makes it less reliable, it's fast, lightweight, and low latency.

SocketHandler Configuration

By using a SocketHandler, applications can transmit log records over TCP to a centralized collector, so logs are aggregated and processed consistently across distributed environments.

A core SocketHandler setup involves the following steps:

- Define the address and port of the remote server where logs will be sent.

- The handler opens a TCP socket to the collector and keeps it open as a persistent channel for log delivery.

- Apply appropriate formatting so that transmitted records are structured and can be parsed downstream.
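The steps above can be exercised end to end with a tiny local collector. This is a sketch for illustration, not a production receiver. SocketHandler sends each record's attributes as a pickle preceded by a 4-byte big-endian length, so the collector rebuilds the record with logging.makeLogRecord() and applies formatting on its side. Unpickling network data is only safe on trusted networks. The LogRecordReceiver class, the loopback address, and the logger name are assumptions for this demo.

```python
import logging
import logging.handlers
import pickle
import socketserver
import struct
import threading

received = []
first_record = threading.Event()

class LogRecordReceiver(socketserver.StreamRequestHandler):
    """Minimal collector: decodes the length-prefixed pickles SocketHandler sends."""

    def handle(self):
        while True:
            header = self.rfile.read(4)            # 4-byte big-endian payload length
            if len(header) < 4:
                break                               # the client closed the connection
            (length,) = struct.unpack(">L", header)
            attributes = pickle.loads(self.rfile.read(length))  # trusted networks only
            received.append(logging.makeLogRecord(attributes))
            first_record.set()

# Step 1: the collector's address; port 0 lets the OS pick a free port.
server = socketserver.ThreadingTCPServer(("127.0.0.1", 0), LogRecordReceiver)
threading.Thread(target=server.serve_forever, daemon=True).start()
host, port = server.server_address

# Step 2: SocketHandler connects on the first emitted record and reuses the socket.
handler = logging.handlers.SocketHandler(host, port)
logger = logging.getLogger("socket_demo")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

logger.warning("payment service latency %dms", 850)
first_record.wait(timeout=5)

# Step 3: formatting happens on the receiving side, where the record is rebuilt.
print(logging.Formatter("%(levelname)s %(name)s %(message)s").format(received[0]))

handler.close()
server.shutdown()
server.server_close()
```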

Retry behavior, error handling, and fallback logic help maintain stability when the remote log collector becomes temporarily unavailable. When a connection drops, SocketHandler tries to reconnect on later emits, backing off exponentially between attempts (retry behavior). Records emitted while the collector is unreachable are dropped rather than queued. Exceptions during transmission are caught and handled to prevent application crashes (error handling). If the collector stays unavailable, logs can be redirected to local files or buffers until connectivity is restored (fallback logic), but the application has to provide this, for example with an additional local handler.

SocketHandler is usually paired with a collector such as a custom TCP receiver or a relay that forwards into syslog servers or Fluentd instances. Because SocketHandler sends pickled LogRecord data, the receiving side must be able to decode that format before further processing and indexing.

DatagramHandler Configuration

The DatagramHandler gives a light-weight mechanism for transmitting logs over UDP. Not like TCP-based handlers, it doesn’t preserve a persistent connection. This makes it well-suited for diagnostic streams which might be excessive‑quantity and low‑latency, and the place occasional packet loss is appropriate.

A DatagramHandler core setup entails the next:

- Every log file is encoded right into a datagram and despatched individually.

- The handler requires a number and port definition to direct packets to the suitable collector.

- No connection handshake or acknowledgment is carried out.

Port configuration and message-size awareness are important for compatibility with downstream collectors that expect lightweight, stateless log messages. UDP datagrams have size limits (a theoretical maximum of around 65 KB, but payloads are often kept much smaller, around 1,400 bytes, to avoid fragmentation). Handlers should ensure that log messages fit within these limits so they are not truncated or fragmented.
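As a sketch of message-size awareness, the subclass below trims the rendered message so that each datagram stays within a byte budget. The 1,400-byte limit, the truncation marker, and the class name are assumptions for illustration. Like SocketHandler, the standard DatagramHandler sends a 4-byte length prefix followed by a pickled dictionary of record attributes; that is what `makePickle()` returns and what the stand-in UDP collector decodes.

```python
import logging
import logging.handlers
import pickle
import socket

MAX_DATAGRAM_BYTES = 1400  # assumed budget to stay below a typical Ethernet MTU
TRUNCATION_MARK = "...[truncated]"


class SizeLimitedDatagramHandler(logging.handlers.DatagramHandler):
    """DatagramHandler that shortens the message so each datagram fits a byte budget.

    Only the rendered message is trimmed; other large fields (such as exception
    text) can still push a datagram over the limit.
    """

    def makePickle(self, record: logging.LogRecord) -> bytes:
        data = super().makePickle(record)  # 4-byte length prefix + pickled dict
        message = record.getMessage()
        while len(data) > MAX_DATAGRAM_BYTES and message:
            excess = len(data) - MAX_DATAGRAM_BYTES
            # Every character is at least one UTF-8 byte, so cutting this many
            # characters removes at least `excess` bytes even after adding the mark.
            message = message[: max(0, len(message) - excess - len(TRUNCATION_MARK))]
            clone = logging.makeLogRecord(record.__dict__)
            clone.msg, clone.args = message + TRUNCATION_MARK, None
            data = super().makePickle(clone)
        return data


# Stand-in collector: a plain UDP socket on an ephemeral localhost port.
collector = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
collector.bind(("127.0.0.1", 0))
collector.settimeout(2)

logger = logging.getLogger("app.udp")
logger.propagate = False
logger.addHandler(SizeLimitedDatagramHandler(*collector.getsockname()))
logger.warning("payload dump: %s", "x" * 5000)

datagram, _ = collector.recvfrom(65535)
fields = pickle.loads(datagram[4:])  # skip the length prefix; only unpickle trusted data
```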

Remote Logging and Observability Pipelines

Logs rarely stay on the host where they are generated. Local handlers typically emit logs to intermediary agents such as Fluentd, Logstash, or Vector. These agents process the logs (normalizing, parsing, and enriching them) before forwarding them to centralized storage.

Distributed logging stacks then ingest these structured records into storage and observability platforms. Elasticsearch (part of the ELK stack) indexes logs for querying and visualization, Datadog combines logs and metrics in a single system, and Grafana Loki (often just called Loki) uses label-based indexing.

At a high level, the remote logging architecture follows a clear flow: application loggers emit messages → network handlers (TCP/UDP or HTTP) transmit them → log collectors or agents process and forward records → processing pipelines parse, transform, and index logs → dashboards and observability tools provide searchable access. This flow covers the full life cycle of distributed logging.

Extending Python’s Logging System

Python’s logging framework is feature-rich out of the box, but it is also designed to be extensible beyond basic configuration. More advanced extension points let developers enrich their log records with custom metadata, automatically inject contextual information, and send logs to destinations beyond the standard handler set.

Custom LogRecord Factories

A custom LogRecord factory lets developers inject additional attributes into every log record as it is created, extending the default metadata fields. Defining a record factory involves writing a function that creates a LogRecord (typically by calling the original factory returned by logging.getLogRecordFactory()) and modifies it, and then registering that function globally with logging.setLogRecordFactory(). Once registered, all loggers automatically use the new factory.
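A minimal sketch of that pattern, assuming a hypothetical `service` attribute plus the local hostname as the extra fields, wraps the factory returned by `logging.getLogRecordFactory()` rather than constructing LogRecord objects from scratch:

```python
import io
import logging
import socket

_default_factory = logging.getLogRecordFactory()  # keep the original to delegate to


def record_factory(*args, **kwargs) -> logging.LogRecord:
    """Builds a record with the previous factory, then adds extra attributes."""
    record = _default_factory(*args, **kwargs)
    record.hostname = socket.gethostname()
    record.service = "checkout"  # hypothetical service name
    return record


logging.setLogRecordFactory(record_factory)  # process-wide: every logger uses it

# Usage: the new attributes can be referenced in any format string.
stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("%(service)s %(levelname)s %(message)s"))
logger = logging.getLogger("app.factory")
logger.addHandler(handler)
logger.warning("payment declined")
print(stream.getvalue(), end="")  # checkout WARNING payment declined
```

Delegating to the previous factory keeps this composable with any library that has already installed its own factory.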

LoggerAdapter for Contextual Logging

A lightweight way to attach contextual data to log messages without changing existing logging calls is the LoggerAdapter class. It wraps a standard logger and merges a context dictionary into every emitted record, which makes contextual logging both explicit and reusable.

This pattern is commonly used for per-request, per-user, or per-task metadata. For example, request IDs, user IDs, or session identifiers can be injected once and automatically included in all related log messages.
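The per-request pattern can be sketched as follows; the `request_id` field, the logger name, and the format string are illustrative assumptions.

```python
import io
import logging

stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("[request_id=%(request_id)s] %(levelname)s %(message)s"))

base_logger = logging.getLogger("app.requests")
base_logger.setLevel(logging.INFO)
base_logger.addHandler(handler)


def handle_request(request_id: str) -> None:
    # Wrap the shared logger once per request; every call through the adapter
    # attaches request_id to the record via the `extra` mechanism.
    log = logging.LoggerAdapter(base_logger, {"request_id": request_id})
    log.info("request received")
    log.info("request completed")


handle_request("req-123")
print(stream.getvalue(), end="")
# [request_id=req-123] INFO request received
# [request_id=req-123] INFO request completed
```

By default, the adapter’s dictionary replaces any `extra` passed to an individual call; Python 3.13 added a `merge_extra` parameter to merge the two instead.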

Writing Custom Handlers

Building a custom handler class involves subclassing logging.Handler and implementing an emit() method that directs log records to nonstandard destinations such as APIs, queues, or third-party tools. Overriding this method gives developers full, fine-grained control over how log records are serialized, buffered, retried, or dropped, so the handler can be tailored to its destination.
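As an illustration of that control, here is a sketch of a handler that serializes records to JSON and delivers them in batches. The `send_batch` callable is a hypothetical transport (in practice it might POST to an ingestion API), and dropping a batch whose send fails is one assumed policy among several; retries could be added inside `flush()`.

```python
import json
import logging
from typing import Callable, List


class BatchingAPIHandler(logging.Handler):
    """Serializes records to JSON and passes them to `send_batch` in groups.

    A batch whose send fails is dropped, not retried.
    """

    def __init__(self, send_batch: Callable[[List[str]], None], batch_size: int = 10):
        super().__init__()
        self.send_batch = send_batch  # hypothetical transport
        self.batch_size = batch_size
        self.buffer: List[str] = []

    def emit(self, record: logging.LogRecord) -> None:
        try:
            self.buffer.append(json.dumps({
                "logger": record.name,
                "level": record.levelname,
                "message": record.getMessage(),
            }))
            if len(self.buffer) >= self.batch_size:
                self.flush()
        except Exception:
            self.handleError(record)  # report the problem without crashing the caller

    def flush(self) -> None:
        self.acquire()  # the handler lock is re-entrant, so emit() may call this
        try:
            if self.buffer:
                batch, self.buffer = self.buffer, []
                self.send_batch(batch)
        finally:
            self.release()

    def close(self) -> None:
        self.flush()  # deliver anything still buffered
        super().close()


# Usage with an in-memory list standing in for the remote API.
sent: List[List[str]] = []
handler = BatchingAPIHandler(sent.append, batch_size=2)
logger = logging.getLogger("app.custom")
logger.addHandler(handler)
logger.warning("first")
logger.warning("second")  # the buffer reaches batch_size, so one batch is sent
logger.warning("third")   # held in the buffer until the next flush
```

Calling `handleError()` inside `emit()` follows the convention of the built-in handlers: a failure is reported on stderr (when `logging.raiseExceptions` is true) instead of propagating into application code.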

Production environments rely on external observability platforms that aggregate, index, visualize, and alert on logs, which is why integration is such a critical part of any logging strategy.

End-to-end logging pipelines typically move records from application-level handlers into external collectors, through processing and enrichment stages, and finally into indexed storage systems.

ELK Stack Integration

As a whole, the ELK (Elasticsearch, Logstash, and Kibana) stack provides an out-of-the-box set of tools for a full overview of your logging pipeline.

Logstash serves as the primary ingestion and transformation layer. Python applications emit logs (often as structured JSON) through file, socket, or HTTP handlers, and Logstash receives these records, parses fields, enriches metadata, and normalizes formats before forwarding them downstream.
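A common way to hand Logstash structured records is one JSON object per line. The formatter below is a sketch of that idea; the field names (including `@timestamp`, which follows a common Elasticsearch convention) and the `request_id`/`user_id` context keys are assumptions, and the matching Logstash input (for example, one using a `json_lines` codec) would need to be configured separately.

```python
import io
import json
import logging
from datetime import datetime, timezone


class JsonLinesFormatter(logging.Formatter):
    """Renders each record as a single-line JSON object (one event per line)."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "@timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Copy selected context fields if a caller supplied them via `extra`.
        for key in ("request_id", "user_id"):
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


stream = io.StringIO()  # stand-in for a file or socket that Logstash reads
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonLinesFormatter())
logger = logging.getLogger("app.json")
logger.addHandler(handler)
logger.warning("cart abandoned", extra={"request_id": "req-9"})
```

`json.dumps` escapes newlines, so even multi-line tracebacks stay on a single line, which keeps line-oriented shippers from splitting one event into several.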

Elasticsearch provides the indexing and storage layer and is designed for distributed search across large volumes of log data. Structured fields such as timestamps and request IDs enable fast querying and aggregation.

Kibana sits on top of Elasticsearch, with dashboards and visualizations that surface trends, error rates, and operational signals. Developer teams can therefore move from individual log lines to system-level insight and incident analysis.

Datadog, Loki, and Better Stack

Datadog, Grafana Loki, and Better Stack collect logs through agent-based collectors. Agents such as the Datadog Agent or Promtail (for Loki) tail local log files or receive network-streamed events and forward them to managed backends.

These platforms differ in how they index and query logs. Unified tagging and correlation across logs, metrics, and traces are key features of Datadog. Loki focuses on label-based indexing, which keeps the index small and makes label-filtered queries fast, and Better Stack offers pipelines aimed at smaller teams or fast-moving projects.

Error Monitoring Tools (Sentry, Raygun, and Airbrake)

Error monitoring tools occupy a similar but distinct space. Whereas standard logging records events, error monitoring platforms capture exceptions in detail, including stack traces and the contextual metadata leading up to a failure.

Integrating Python logging with monitoring tools such as Sentry, Raygun, or Airbrake usually requires SDKs or custom logging handlers that forward high-severity log records and uncaught exceptions. This setup supports deep diagnostic analysis, real-time alerting, and error grouping, which complement a traditional log aggregation system.
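The forwarding pattern can be sketched without committing to a particular vendor. Here, `report` is a hypothetical callable standing in for a tracker client (the official SDKs, such as Sentry’s, ship their own logging integrations), the handler forwards only ERROR and above, and `sys.excepthook` is replaced so that uncaught exceptions also flow through logging.

```python
import logging
import sys
from typing import Callable, Dict, Optional

_traceback_formatter = logging.Formatter()


class ErrorForwardingHandler(logging.Handler):
    """Forwards ERROR and CRITICAL records to a hypothetical error-tracker client."""

    def __init__(self, report: Callable[[Dict[str, Optional[str]]], None]):
        super().__init__(level=logging.ERROR)  # lower severities never reach emit()
        self.report = report

    def emit(self, record: logging.LogRecord) -> None:
        try:
            self.report({
                "logger": record.name,
                "level": record.levelname,
                "message": record.getMessage(),
                "traceback": (_traceback_formatter.formatException(record.exc_info)
                              if record.exc_info else None),
            })
        except Exception:
            self.handleError(record)


logger = logging.getLogger("app.errors")


def log_uncaught(exc_type, exc_value, exc_tb) -> None:
    """sys.excepthook replacement that routes uncaught exceptions into logging."""
    if issubclass(exc_type, KeyboardInterrupt):
        sys.__excepthook__(exc_type, exc_value, exc_tb)  # keep normal Ctrl+C behavior
        return
    logger.critical("Uncaught exception", exc_info=(exc_type, exc_value, exc_tb))


sys.excepthook = log_uncaught

# Usage with a list standing in for the tracker client.
reports = []
logger.addHandler(ErrorForwardingHandler(reports.append))
logger.warning("slow response")  # below ERROR: not forwarded
try:
    1 / 0
except ZeroDivisionError:
    logger.exception("checkout failed")  # ERROR with traceback: forwarded
```

`sys.excepthook` only sees exceptions that escape the main thread; exceptions in other threads go through `threading.excepthook` (Python 3.8+), which would need the same treatment.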

Performance, Scaling, and Security

At scale, logging stops being a passive diagnostic tool and becomes an active part of system behavior. A poorly designed logging pipeline shows up as latency spikes and general operational drag. Well-designed pipelines, on the other hand, tend to fade into the background and work behind the scenes.

Log Volume Management

Instead of causing systems to fail immediately, unbounded logging makes them degrade over time. Oversights that can quietly inflate log volume include repeated error loops and debug-level verbosity left enabled. Generally speaking, controlling output means being deliberate about what is emitted by default and letting verbosity vary as needed.

In my experience, the most common problem with logging in production is managing the sheer volume of data. If you log too much information, it can quickly fill up the hard drive. To prevent this, it’s important to rotate log files and back them up to another system using a separate process or service.
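Rotation itself can be handled in-process with `RotatingFileHandler`, while shipping the rotated files to another system stays the job of a separate process, as described above. In this sketch the 1 KB limit is deliberately tiny so rollover is visible, and a temporary directory stands in for a real log path.

```python
import logging
import logging.handlers
import os
import tempfile

log_dir = tempfile.mkdtemp()  # stand-in for a real directory such as /var/log/myapp
log_path = os.path.join(log_dir, "app.log")

# Roll over before a file would exceed ~1 KB; keep three old files (app.log.1 to app.log.3).
handler = logging.handlers.RotatingFileHandler(
    log_path, maxBytes=1024, backupCount=3, encoding="utf-8"
)
handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))

logger = logging.getLogger("app.rotation")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

for i in range(200):
    logger.info("processed item %d", i)
handler.close()

# Once backupCount is reached, each rollover discards the oldest backup.
print(sorted(os.listdir(log_dir)))  # ['app.log', 'app.log.1', 'app.log.2', 'app.log.3']
```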

System hygiene is also important. This is where retention and rotation storage considerations come into play. Keeping logs longer than they are useful increases both cost and risk, while aggressive pruning may end up removing important context when incidents happen. The right balance depends on the application and often changes as traffic grows.

High-volume systems benefit from batching, sampling, and dynamic log-level adjustments to prevent excessive output, which can overwhelm storage and indexing systems. In addition, well-defined retention policies ensure that logs are handled (usually archived, rotated, or deleted) according to operational, legal, or compliance requirements.
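Sampling and dynamic levels can both be expressed with standard logging primitives. The filter below is a sketch that keeps every WARNING-or-higher record but only a fraction of lower-severity ones; the 10% rate, the seeded random generator (used only to make the demo repeatable), and the in-memory `ListHandler` are assumptions.

```python
import logging
import random
from typing import Optional


class SamplingFilter(logging.Filter):
    """Passes every WARNING+ record but only a fraction of lower-severity ones."""

    def __init__(self, rate: float, rng: Optional[random.Random] = None):
        super().__init__()
        self.rate = rate  # 0.1 keeps roughly 10% of DEBUG/INFO records
        self.rng = rng or random.Random()

    def filter(self, record: logging.LogRecord) -> bool:
        if record.levelno >= logging.WARNING:
            return True
        return self.rng.random() < self.rate


class ListHandler(logging.Handler):
    """Collects records in memory, standing in for a real destination."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record: logging.LogRecord) -> None:
        self.records.append(record)


sink = ListHandler()
sink.addFilter(SamplingFilter(rate=0.1, rng=random.Random(42)))  # seeded for a repeatable demo
logger = logging.getLogger("app.hot_path")
logger.setLevel(logging.DEBUG)
logger.addHandler(sink)

for i in range(1000):
    logger.debug("cache miss for key %d", i)  # roughly 100 of these are kept
logger.warning("cache backend slow")          # always kept

# Dynamic adjustment: raising the level at runtime (for example, on a config reload)
# makes logger.debug() return before a record is even created.
logger.setLevel(logging.WARNING)
logger.debug("this record is never created")
```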

I/O Costs and Buffering

Logging always competes with the application for I/O. When writes happen synchronously, this contention becomes noticeable. Buffering or offloading can hide it by smoothing out spikes in log activity. Most production systems rely on queues, background threads, or external agents to handle this work outside the main execution path.

The trade-off is delayed delivery and, in extreme cases, potential loss during a crash. Deciding where buffering happens (and how much loss is acceptable to your team) is a design choice rather than a purely technical one.
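The standard library’s `QueueHandler` and `QueueListener` implement this kind of offloading: the calling thread only enqueues a record, and a background thread performs formatting and I/O. In this sketch, an in-memory stream stands in for a slow destination such as a file or network handler, and the logger name is illustrative.

```python
import io
import logging
import logging.handlers
import queue

log_queue = queue.Queue(-1)  # unbounded; a bounded queue would trade loss for memory

stream = io.StringIO()  # stand-in for a slow destination such as a file or socket
slow_handler = logging.StreamHandler(stream)
slow_handler.setFormatter(logging.Formatter("%(levelname)s %(message)s"))

# The listener's background thread pulls records off the queue and runs the real handler.
listener = logging.handlers.QueueListener(log_queue, slow_handler, respect_handler_level=True)
listener.start()

logger = logging.getLogger("app.queued")
logger.setLevel(logging.INFO)
logger.addHandler(logging.handlers.QueueHandler(log_queue))  # enqueue only: cheap for the caller

logger.info("request handled in %d ms", 12)

listener.stop()  # drains records already in the queue, then stops the thread
```

Because `listener.stop()` drains what is already queued before returning, it belongs in shutdown code; records still sitting in the queue when a process is killed abruptly are lost, which is the trade-off described above.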

Async and Structured Logging (Python 3.11+)

Because blocking behavior is immediately apparent in async applications, they expose logging problems more quickly. Event loops stay responsive when records are emitted into a queue and serialization and I/O are left to another thread or process.

As concurrency increases, structured output gains value. Structured logging outputs (such as JSON, key-value pairs, and context-enriched records) make correlation and filtering predictable, whereas flat text logs are readable but brittle. With improved context propagation in newer Python versions, request- or task-level metadata can follow execution paths without being passed explicitly through every call.
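Context propagation of this kind rests on the `contextvars` module: each asyncio task runs in its own copy of the context, so a value set in one task is invisible to the others. The sketch combines that with JSON output; the `request_id_var` variable, the filter, and the formatter are illustrative assumptions rather than a standard API.

```python
import asyncio
import contextvars
import io
import json
import logging

# Hypothetical per-request context; "-" marks records logged outside a request.
request_id_var = contextvars.ContextVar("request_id", default="-")


class RequestContextFilter(logging.Filter):
    """Copies the current task's request_id onto each record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.request_id = request_id_var.get()
        return True


class JsonFormatter(logging.Formatter):
    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "request_id": record.request_id,
            "message": record.getMessage(),
        })


stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.addFilter(RequestContextFilter())
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("app.async")
logger.setLevel(logging.INFO)
logger.addHandler(handler)


async def handle(request_id: str) -> None:
    request_id_var.set(request_id)  # affects only this task's context copy
    await asyncio.sleep(0)          # yield so the two tasks interleave
    logger.info("handled")


async def main() -> None:
    await asyncio.gather(handle("req-a"), handle("req-b"))


asyncio.run(main())
lines = [json.loads(line) for line in stream.getvalue().splitlines()]
```

If records are handed to a `QueueHandler`, attach the filter to the `QueueHandler` (or the logger) rather than to the downstream handler, because the listener thread does not see the task’s context.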

Secure Logging (PII Masking, Audit Trails, and Compliance)

Security problems tend to appear after logs already exist. Sensitive data, including personally identifiable information (PII), can show up unexpectedly in exception messages, debug output, or other fields, so preventing exposure usually means filtering or redacting fields before they are written. Trying to clean logs afterward is more difficult and error-prone.
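Redaction at write time can be sketched as a `logging.Filter`. The e-mail pattern and the `password`/`card_number` field names are assumptions to adapt to your own data, and the regular expression is deliberately simple rather than a complete PII detector.

```python
import io
import logging
import re

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SENSITIVE_FIELDS = ("password", "card_number")  # hypothetical `extra` field names


class RedactingFilter(logging.Filter):
    """Masks e-mail addresses in the message and known sensitive `extra` fields."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the message first so values passed as %-style args are covered too.
        record.msg = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", record.getMessage())
        record.args = None
        for field in SENSITIVE_FIELDS:
            if hasattr(record, field):
                setattr(record, field, "[REDACTED]")
        return True  # never drop the record, only scrub it


stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.addFilter(RedactingFilter())
handler.setFormatter(logging.Formatter("%(levelname)s %(message)s password=%(password)s"))
logger = logging.getLogger("app.auth")
logger.addHandler(handler)
logger.warning("login failed for %s", "alice@example.com", extra={"password": "hunter2"})
```

Filters on a logger apply only to records logged directly on that logger, not to records propagated from child loggers, so attaching the filter to each handler is the safer choice. Exception tracebacks are not scrubbed by this sketch.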

Audit logs are a different class of data. They are meant to record privileged access events, configuration changes, and system-level actions. At the same time, they must keep sensitive data protected and out of plaintext.

From a compliance perspective, sensitive fields must be masked, encrypted, or omitted entirely to meet regulatory and compliance requirements such as the General Data Protection Regulation (GDPR), Service Organization Control 2 (SOC 2), and similar standards. This applies not only to application-level data but also to metadata that may indirectly identify users or systems and is easy to overlook.

Audit logging, in particular, must ensure that sensitive values never appear in plaintext. Because these logs focus on accountability and traceability (who changed what, and when), they should remain tightly controlled and isolated from regular diagnostic output to avoid accidental disclosure.
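One way to keep audit events isolated, sketched here under assumptions (a dedicated logger named `audit`, JSON entries, and masking that keeps only the last four characters), is to give the audit logger its own handler and disable propagation so records never reach the root or diagnostic handlers. Encryption and append-only storage are outside the scope of this sketch.

```python
import io
import json
import logging
from datetime import datetime, timezone


def mask(value: str, visible: int = 4) -> str:
    """Replaces all but the last `visible` characters with '*'."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


diagnostic_stream = io.StringIO()  # ordinary application logs
audit_stream = io.StringIO()       # stand-in for an append-only, access-controlled store

logging.getLogger().addHandler(logging.StreamHandler(diagnostic_stream))

audit_logger = logging.getLogger("audit")  # hypothetical dedicated logger name
audit_logger.setLevel(logging.INFO)
audit_logger.propagate = False  # keep audit events out of root/diagnostic handlers
audit_handler = logging.StreamHandler(audit_stream)
audit_handler.setFormatter(logging.Formatter("%(message)s"))
audit_logger.addHandler(audit_handler)


def audit_event(actor: str, action: str, target: str, **sensitive: str) -> None:
    """Records who did what to which resource, and when, with sensitive values masked."""
    audit_logger.info(json.dumps({
        "at": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "target": target,
        **{key: mask(value) for key, value in sensitive.items()},
    }))


audit_event("admin-7", "update_payout_account", "merchant-42",
            account_number="DE89370400440532013000")
```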

Testing and Debugging Logging Configurations

Because logging is largely configuration-driven and produces side effects, testing logging configurations is about verifying observable behavior rather than internal implementation. Small changes can silently alter essential steps along the pipeline.

Unit Testing Logging Output

Unit tests can verify that individual log messages are emitted at the right severity and under the right conditions. Tests usually capture log records in memory and inspect their attributes rather than asserting on files or external systems, as is often the case in broader application testing.

Capturing Logs in Pytest

In pytest-based test suites, tools such as the caplog fixture intercept log records during test execution. Tests can then inspect:

- Which messages were emitted.

- The severity level of each message.

- How logger names appear in the hierarchy.

- The content of any custom fields added by adapters, filters, or record factories.

This approach verifies logging intent without relying on external handlers or I/O.
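A sketch of such a test uses the caplog fixture’s `set_level()`, `record_tuples`, and `records`. The `cancel_order()` function and its `user_id` field are hypothetical; pytest discovers the `test_` function and injects `caplog` automatically.

```python
import logging

logger = logging.getLogger("app.orders")


def cancel_order(order_id: str, user_id: str) -> None:
    """Hypothetical function under test; `user_id` is attached via `extra`."""
    logger.warning("order %s cancelled", order_id, extra={"user_id": user_id})


def test_cancel_order_logging(caplog):
    caplog.set_level(logging.INFO, logger="app.orders")
    cancel_order("A-17", user_id="u-5")

    # Which messages were emitted, at which level, and from which logger.
    assert caplog.record_tuples == [("app.orders", logging.WARNING, "order A-17 cancelled")]
    # Custom fields added through `extra` (or adapters, filters, record factories).
    assert caplog.records[0].user_id == "u-5"
```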

Validating Configuration

Beyond message content, tests can also validate the logging configuration itself, for example by confirming that required handlers are attached to the correct loggers. Configuration validation tests help catch problems that, left unchecked, often surface only at the worst time, such as under load.
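A sketch of such a check applies the configuration with `logging.config.dictConfig()` and then asserts which handler types are attached to which loggers. The configuration dictionary and the `check_logging_config()` helper are illustrative assumptions. A check like this cannot reproduce load-related failures, but it catches misconfiguration before deployment.

```python
import logging
import logging.config
from typing import Dict, List

LOGGING_CONFIG = {
    "version": 1,
    "disable_existing_loggers": False,
    "formatters": {"plain": {"format": "%(asctime)s %(name)s %(levelname)s %(message)s"}},
    "handlers": {
        "console": {"class": "logging.StreamHandler", "formatter": "plain", "level": "INFO"},
    },
    "loggers": {
        "app": {"handlers": ["console"], "level": "INFO", "propagate": False},
    },
}


def check_logging_config(config: Dict, expected: Dict[str, List[type]]) -> List[str]:
    """Applies `config` and reports loggers that lack an expected handler type.

    dictConfig itself raises ValueError for broken references (for example, a
    handler that names an undefined formatter), so those fail the check early.
    """
    logging.config.dictConfig(config)
    problems = []
    for name, handler_types in expected.items():
        attached = logging.getLogger(name).handlers
        for handler_type in handler_types:
            if not any(isinstance(h, handler_type) for h in attached):
                problems.append(f"{name!r} has no {handler_type.__name__}")
    return problems


print(check_logging_config(LOGGING_CONFIG, {"app": [logging.StreamHandler]}))  # []
```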

Designing Efficient Python Logging

In Python logging, structure matters, and architectural decisions determine whether the pipeline supports development or becomes a liability. A deep understanding of Python’s logging ecosystem can guide these decisions and help you build a logging system that supports day-to-day development instead of slowing it down.

As systems evolve and grow, a well-oiled logging pipeline stops being only a debugging aid and becomes an integral part of the infrastructure: an information network that must stay predictable under load, composable, and compatible with external tooling.

Python logging handlers differ by destination and behavior: some write to consoles or persist logs to disk, while others manage file growth and rollover, forward events remotely, or enable nonblocking processing across threads or processes. Choosing the right mix gives you a logging strategy that supports your project from start to finish.

Recapping Python Logging Best Practices

Thoughtful design of Python logging pipelines turns logging from a simple debugging tool into an integral part of your application’s infrastructure. Some best practices to keep in mind include:

- Configure logging centrally through hierarchical loggers.

- Avoid direct calls to the root logger.

- Persist logs and enrich them with metadata for clearer diagnostics.

- Use structured formatting, rotation, and centralized configuration to build logging pipelines.