Are you incurring vital cross Availability Zone site visitors prices when working an Apache Kafka shopper in containerized environments on Amazon Elastic Kubernetes Service (Amazon EKS) that eat information from Amazon Managed Streaming for Apache Kafka (Amazon MSK) matters?

In case you’re not conversant in Apache Kafka’s rack consciousness function, we strongly suggest beginning with the weblog publish on the best way to Scale back community site visitors prices of your Amazon MSK customers with rack consciousness for an in-depth rationalization of the function and the way Amazon MSK helps it.

Though the answer described in that publish makes use of an Amazon Elastic Compute Cloud (Amazon EC2) occasion deployed in a single Availability Zone to eat messages from an Amazon MSK subject, trendy cloud-native architectures demand extra dynamic and scalable approaches. Amazon EKS has emerged as a number one platform for deploying and managing distributed functions. The dynamic nature of Kubernetes introduces distinctive implementation challenges in comparison with static shopper deployments. On this publish, we stroll you thru an answer for implementing rack consciousness in client functions which might be dynamically deployed throughout a number of Availability Zones utilizing Amazon EKS.

Right here’s a fast recap of some key Apache Kafka terminology from the referenced weblog. An Apache Kafka shopper client will register to learn in opposition to a subject. A subject is the logical information construction that Apache Kafka organizes information into. A subject is segmented right into a single or many partitions. Partitions are the unit of parallelism in Apache Kafka. Amazon MSK gives excessive availability by replicating every partition of a subject throughout brokers in several Availability Zones. As a result of there are replicas of every partition that reside throughout the completely different brokers that make up your MSK cluster, Amazon MSK additionally tracks whether or not a duplicate partition is in sync with the latest information for that partition. This implies there’s one partition that Amazon MSK acknowledges as containing probably the most up-to-date information, and this is named the chief partition. The gathering of replicated partitions is named in-sync replicas. This checklist of in-sync replicas is used internally when the cluster must elect a brand new chief partition if the present chief have been to develop into unavailable.

When client functions learn from a subject, the Apache Kafka protocol facilitates a community trade to find out which dealer presently has the chief partition that the buyer must learn from. Because of this the buyer could possibly be instructed to learn from a dealer in a special Availability Zone than itself, resulting in cross-zone site visitors cost in your AWS account. To assist optimize this value, Amazon MSK helps the rack consciousness function, utilizing which shoppers can ask an Amazon MSK cluster to offer a duplicate partition to learn from, inside the identical Availability Zone because the shopper, even when it isn’t the present chief partition. The cluster accomplishes this by checking for an in-sync duplicate on a dealer inside the identical Availability Zone as the buyer.

The problem with Kafka shoppers on Amazon EKS

In Amazon EKS, the underlying models of computes are EC2 cases which might be abstracted as Kubernetes nodes. The nodes are organized into node teams for ease of administration, scaling, and grouping of functions on sure EC2 occasion sorts. As a finest observe for resilience, the nodes in a node group are unfold throughout a number of Availability Zones. Amazon EKS makes use of the underlying Amazon EC2 metadata concerning the Availability Zone that it’s positioned in, and it injects that info into the node’s metadata throughout node configuration. Particularly, the Availability Zone (AZ ID) is injected into the node metadata.

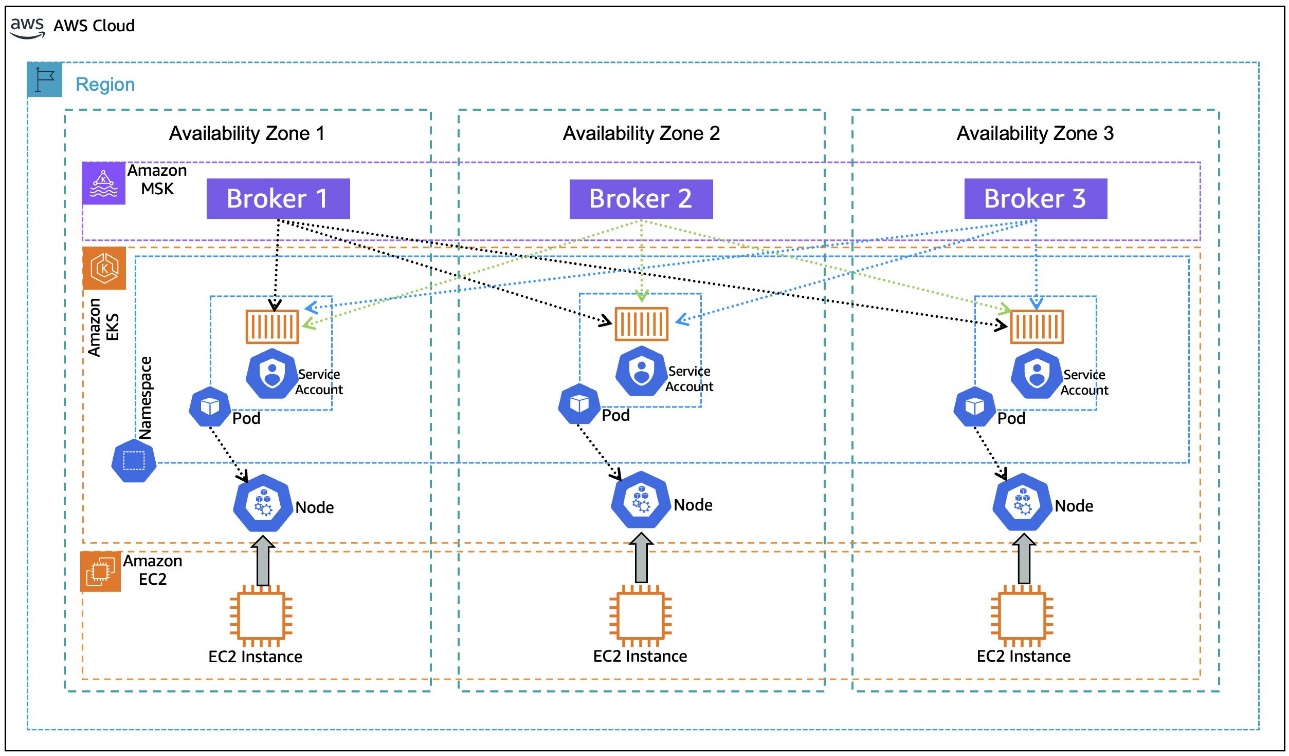

When an software is deployed in a Kubernetes Pod on Amazon EKS, it goes via a means of binding to a node that meets the pod’s necessities. As proven within the following diagram, while you deploy shopper functions on Amazon EKS, the pod for the appliance might be certain to a node with obtainable capability in any Availability Zone. Additionally, the pod doesn’t routinely inherit the Availability Zone info from the node that it’s certain to, a chunk of knowledge obligatory for rack consciousness. The next structure diagram illustrates Kafka customers working on Amazon EKS with out rack consciousness.

To set the shopper configuration for rack consciousness, the pod must know what Availability Zone it’s positioned in, dynamically, as it’s certain to a node. Throughout its lifecycle, the identical pod might be evicted from the node it was certain to beforehand and moved to a node in a special Availability Zone, if the matching standards allow that. Making the pod conscious of its Availability Zone dynamically units the rack consciousness parameter shopper.rack in the course of the initialization of the appliance container that’s encapsulated within the pod.

After rack consciousness is enabled on the MSK cluster, what occurs if the dealer in the identical Availability Zone because the shopper (hosted on Amazon EKS or elsewhere) turns into unavailable? The Apache Kafka protocol is designed to help a distributed information storage system. Assuming prospects observe the very best observe of implementing a replication issue > 1, Apache Kafka can dynamically reroute the buyer shopper to the subsequent obtainable in-sync duplicate on a special dealer. This resilience stays constant even after implementing nearest duplicate fetching, or rack consciousness. Enabling rack consciousness optimizes the networking trade to want a partition inside the identical Availability Zone, however it doesn’t compromise the buyer’s means to function if the closest duplicate is unavailable.

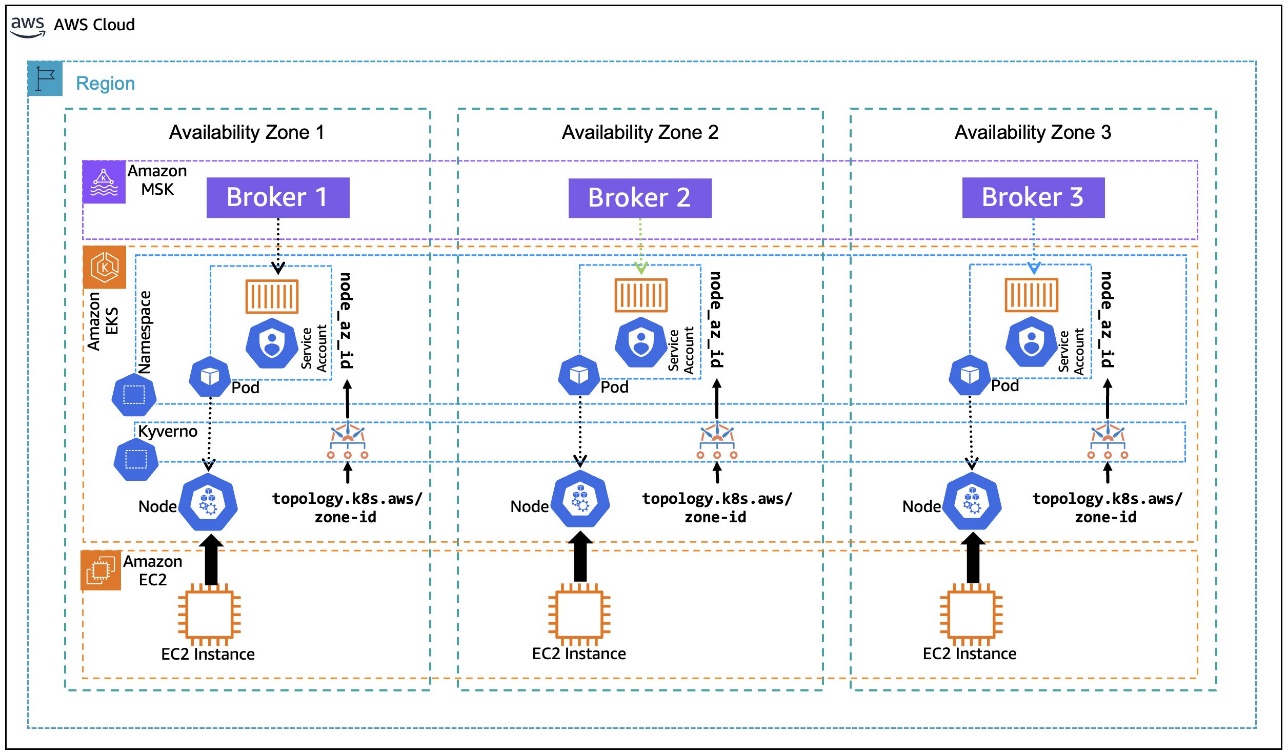

On this publish, we stroll you thru an instance of the best way to use the Kubernetes metadata label, topology.k8s.aws/zone-id, assigned to every node by Amazon EKS, and use an open supply coverage engine, Kyverno, to deploy a coverage that mutates the pods which might be within the binding state to dynamically inject the node’s AZ ID into the pod’s metadata as an annotation, as depicted within the following diagram. This annotation, in flip, is utilized by the container to create an setting variable that’s assigned the pod’s annotated AZ ID info. The setting variable is then used within the container postStart lifecycle hook to generate the Kafka shopper configuration file with rack consciousness setting. The next structure diagram illustrates Kafka customers working on Amazon EKS with rack consciousness.

Answer Walkthrough

Stipulations

For this walkthrough, we use AWS CloudShell to run the scripts which might be supplied inline as you progress. For a clean expertise, earlier than getting began, make sure that to have kubectl and eksctl put in and configured within the AWS CloudShell setting, following the set up directions for Linux (amd64). Helm can be required to be set up on AWS CloudShell, utilizing the directions for Linux.

Additionally, test if the envsubst instrument is put in in your CloudShell setting by invoking:

If the instrument isn’t put in, you may set up it utilizing the command:

We additionally assume you have already got an MSK cluster deployed in an Amazon Digital Personal Cloud (VPC) in three Availability Zones with the identify MSK-AZ-Conscious. On this walkthrough, we use AWS Identification and Entry Administration (IAM) authentication for shopper entry management to the MSK cluster. In case you’re utilizing a cluster in your account with a special identify, exchange the cases of MSK-AZ-Conscious within the directions.

We observe the identical MSK cluster configuration talked about within the Rack Consciousness weblog talked about beforehand, with some modifications. (Make sure you’ve set duplicate.selector.class = org.apache.kafka.frequent.duplicate.RackAwareReplicaSelector for the explanations mentioned there). In our configuration, we add one line: num.partitions = 6. Though not obligatory, this ensures that matters which might be routinely created may have a number of partitions to help clearer demonstrations in subsequent sections.

Lastly, we use the Amazon MSK Knowledge Generator with the next configuration:

Working the MSK Knowledge Generator with this configuration will routinely create a six-partition subject named MSK-AZ-Conscious-Matter on our cluster for us, and it’ll push information to that subject. To observe together with the walkthrough, we suggest and assume that you simply deploy the MSK Knowledge Generator to create the subject and populate it with simulated information.

Create the EKS cluster

Step one is to put in an EKS cluster in the identical Amazon VPC subnets because the MSK cluster. You possibly can modify the identify of the MSK cluster by altering that setting variable MSK_CLUSTER_NAME in case your cluster is created with a special identify than steered. You may as well change the Amazon EKS cluster identify by altering EKS_CLUSTER_NAME.

The setting variables that we outline listed below are used all through the walkthrough.

The final step is to replace the kubeconfig with an entry for the EKS cluster:

Subsequent, you have to create an IAM coverage, MSK-AZ-Conscious-Coverage, to permit entry from the Amazon EKS pods to the MSK cluster. Be aware right here that we’re utilizing MSK-AZ-Conscious because the cluster identify.

Create a file, msk-az-aware-policy.json, with the IAM coverage template:

To create the IAM coverage, use the next command. It first replaces the placeholders within the coverage file with values from related setting variables, after which creates the IAM coverage:

Configure EKS Pod Identification

Amazon EKS Pod Identification presents a simplified expertise for acquiring IAM permissions for pods on Amazon EKS. This requires putting in an add-on Amazon EKS Pod Identification Agent to the EKS cluster:

Affirm that the add-on has been put in and its standing is ACTIVE and that the standing of all of the pods related to the add-on is Working.

After you’ve put in the add-on, you have to create a pod id affiliation between a Kubernetes service account and the IAM coverage created earlier:

Set up Kyverno

Kyverno is an open supply coverage engine for Kubernetes that permits for validation, mutation, and technology of Kubernetes sources utilizing insurance policies written in YAML, thus simplifying the enforcement of safety and compliance necessities. That you must set up Kyverno to dynamically inject metadata into the Amazon EKS pods as they enter the binding state to tell them of Availability Zone ID.

In AWS CloudShell, create a file named kyverno-values.yaml. This file defines the Kubernetes RBAC permissions for Kyverno’s Admission Controller to learn Amazon EKS node metadata as a result of the default Kyverno (v. 1.13 onwards) settings don’t permit this:

After this file is created, you may set up Kyverno utilizing helm and offering the values file created within the earlier step:

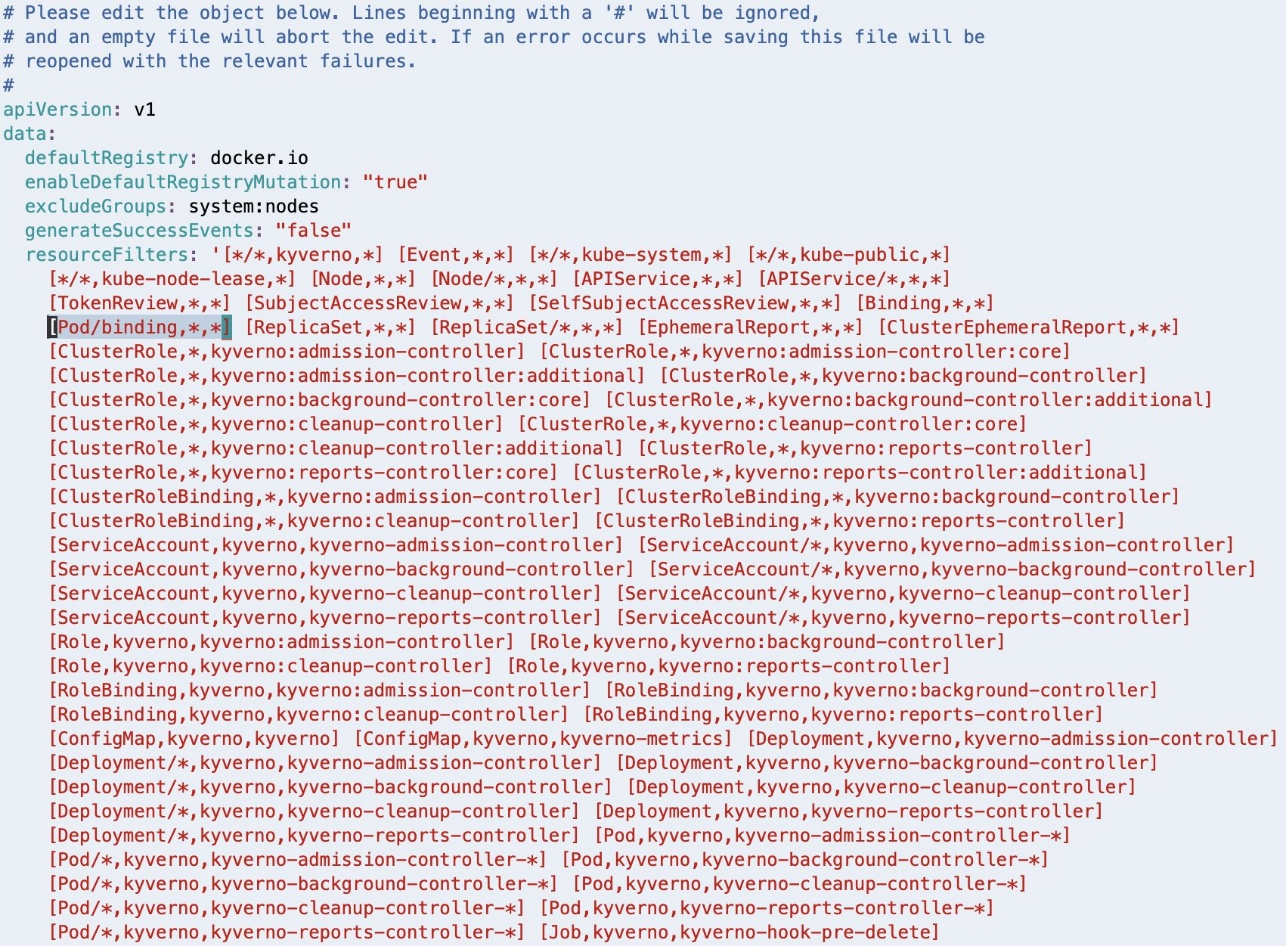

Beginning with Kyverno v 1.13, the Admission Controller is configured to disregard the AdmissionReview requests for pods in binding state. This must be modified by modifying the Kyverno ConfigMap:

The kubectl edit command makes use of the default editor configured in your setting (in our case Linux VIM).

This can open the ConfigMap in a textual content editor.

As highlighted within the following screenshot, [Pod/binding,*,*] must be faraway from the resourceFilters area for the Kyverno Admission Controller to course of AdmissionReview requests for pods in binding state.

If Linux VIM is your default editor, you may delete the entry utilizing VIM command 18x, that means delete (or reduce) 18 characters from the present cursor place. Save the modified configuration utilizing the VIM command :wq, that means write (or save) the file and give up.

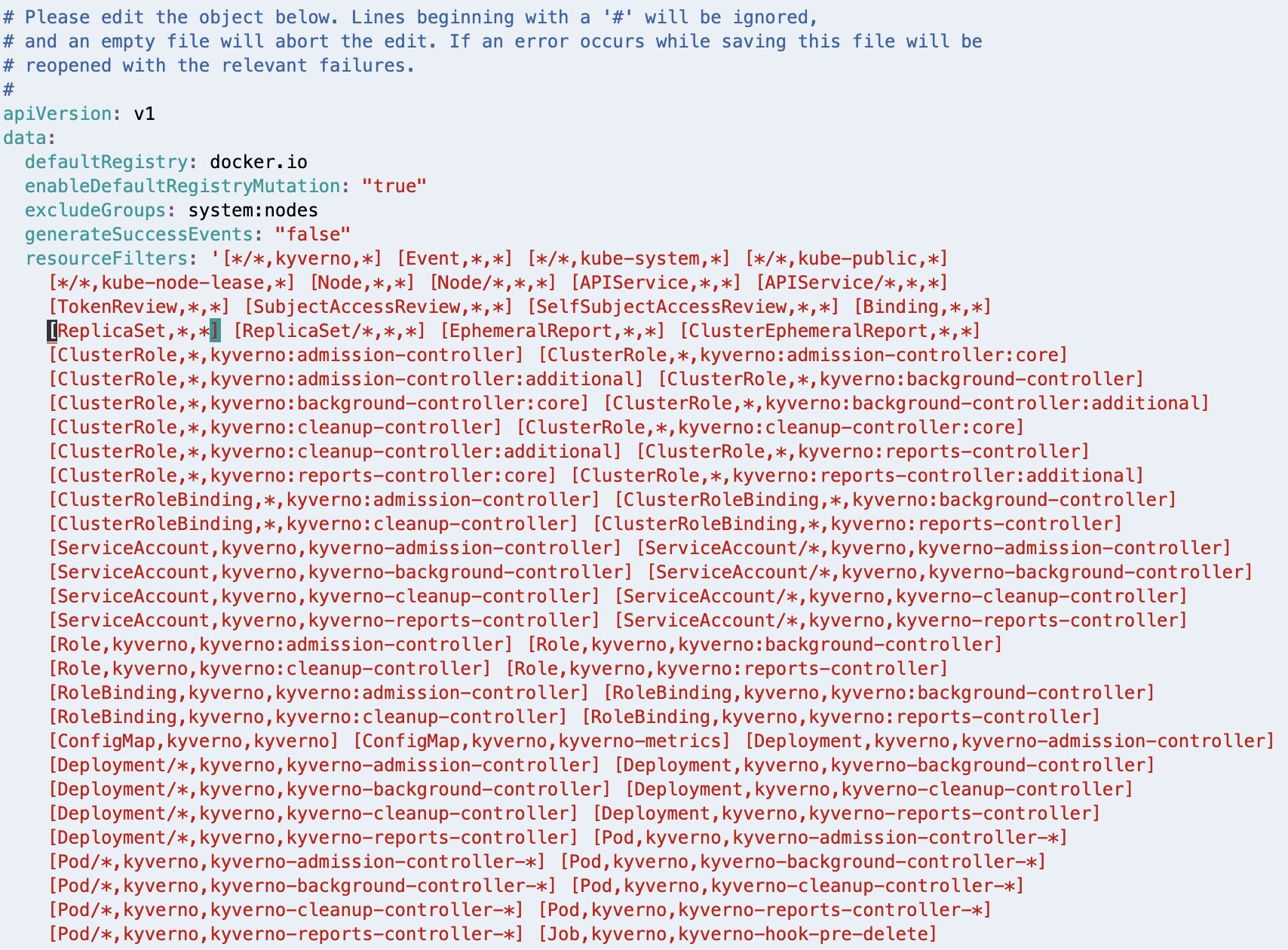

After deleting, the resourceFilters area ought to look much like the next screenshot.

When you’ve got a special editor configured in your setting, observe the suitable steps to realize the same end result.

Configure Kyverno coverage

That you must configure the coverage that can make the pods rack conscious. This coverage is tailored from the steered method within the Kyverno weblog publish, Assigning Node Metadata to Pods. Create a brand new file with the identify kyverno-inject-node-az-id.yaml:

It instructs Kyverno to look at for pods in binding state. After Kyverno receives the AdmissionReview request for a pod, it units the variable node to the identify of the node to which the pod is being certain. It additionally units one other variable node_az_id to the Availability Zone ID by calling the Kubernetes API /api/v1/nodes/node to get the node metadata label topology.k8s.aws/zone-id. Lastly, it defines a mutate rule to inject the obtained AZ ID into the pod’s metadata as an annotation node_az_id.

After you’ve created the file, apply the coverage utilizing the next command:

Deploy a pod with out rack consciousness

Now let’s visualize the issue assertion. To do that, hook up with one of many EKS pods and test the way it interacts with the MSK cluster while you run a Kafka client from the pod.

First, get the bootstrap string of the MSK cluster. Lookup the Amazon Useful resource Names (ARNs) of the MSK cluster:

Utilizing the cluster ARN, you will get the bootstrap string with the next command:

Create a brand new file named kafka-no-az.yaml:

This pod manifest doesn’t make use of the Availability Zone ID injected into the metadata annotation and therefore doesn’t add shopper.rack to the shopper.properties configuration.

Deploy the pods utilizing the next command:

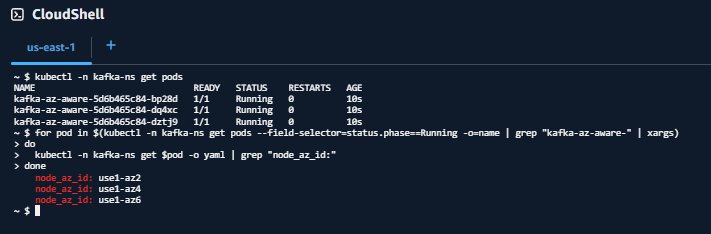

Run the next command to verify that the pods have been deployed and are within the Working state:

Choose a pod id from the output of the earlier command, and hook up with it utilizing:

Run the Kafka client:

This command will dump all of the ensuing logs into the file, non-rack-aware-consumer.log. There’s a whole lot of info in these logs, and we encourage you to open them and take a deeper look. Subsequent, look at the EKS pod in motion. To do that, run the next command to tail the file to view fetch request outcomes to the MSK cluster. You’ll discover a handful of significant logs to evaluation as the buyer entry varied partitions of the Kafka subject:

Observe your log output, which ought to look much like the next:

You’ve now linked to a particular pod within the EKS cluster and run a Kafka client to learn from the MSK subject with out rack consciousness. Do not forget that this pod is working inside a single Availability Zone.

Reviewing the log output, you discover rack: values as use1-az2, use1-az4, and use1-az6 because the pod makes calls to completely different partitions of the subject. These rack values signify the Availability Zone IDs that our brokers are working inside. Because of this our EKS pod is creating networking connections to brokers throughout three completely different Availability Zones, which might be accruing networking prices in our account.

Additionally discover that you don’t have any approach to test which node, and due to this fact Availability Zone, this EKS pod is working in. You possibly can observe within the logs that it’s calling to MSK brokers in several Availability Zones, however there isn’t any approach to know which dealer is in the identical Availability Zone because the EKS pod you’ve linked to. Delete the deployment while you’re executed:

Deploy a pod with rack consciousness

Now that you’ve skilled the buyer habits with out rack consciousness, you have to inject the Availability Zone ID to make your pods rack-aware.

Create a brand new file named kafka-az-aware.yaml:

As you may observe, the pod manifest defines an setting variable NODE_AZ_ID, assigning it the worth from the pod’s personal metadata annotation node_az_id that was injected by Kyverno. The manifest then makes use of the pod’s postStart lifecycle script so as to add shopper.rack into the shopper.properties configuration, setting it equal to the worth within the setting variable NODE_AZ_ID.

Deploy the pods utilizing the next command:

Run the next command to verify that the pods have been deployed and are within the Working state:

Confirm that Availability Zone Ids have been injected into the pods

Your output ought to look much like:

Or:

Choose a pod id from the output of the get pods command and shell-in to it.

The output of the get $pod command matches the order of outcomes from the get pods command. This matching will make it easier to perceive what Availability Zone your pod is working in so you may evaluate it to log outputs later.

After you’ve linked to your pod, run the Kafka client:

Much like earlier than, this command will dump all of the ensuing logs into the file, rack-aware-consumer.log. You create a brand new file so there’s no overlap between the Kafka customers you’ve run. There’s a whole lot of info in these logs, and we encourage you to open them and take a deeper look. If you wish to see the rack consciousness of your EKS pod in motion, run the next command to tail the file to view fetch request outcomes to the MSK cluster. You possibly can observe a handful of significant logs to evaluation right here as the buyer entry varied partitions of the Kafka subject:

Observe your log output, which ought to look much like the next:

For every log line, now you can observe two rack: values. The primary rack: worth reveals the present chief, the second rack: reveals the rack that’s getting used to fetch messages.

For instance, have a look at MSK-AZ-Conscious-Matter-5. The chief is recognized as rack: use1-az4, however the fetch request is distributed to use1-az6 as indicated by to node b-2.mskazaware.hxrzlh.c6.kafka.us-east-1.amazonaws.com:9098 (id: 2 rack: use1-az6) (org.apache.kafka.shoppers.client.internals.AbstractFetch)

You’ll discover one thing comparable in all different log traces. The fetch is all the time to the dealer in use1-az6, which maps to our expectation, given the pod we linked to was on this Availability Zone.

Congratulations! You’re consuming from the closest duplicate on Amazon EKS.

Clear Up

Delete the deployment when completed:

To delete the EKS Pod Identification affiliation:

To delete the IAM coverage:

To delete the EKS cluster:

In case you adopted together with this publish utilizing the Amazon MSK Knowledge Generator, make sure you delete your deployment so it’s not making an attempt to generate and ship information after you delete the remainder of your sources.

Clear up will rely on which deployment possibility you used. To learn extra concerning the deployment choices and the sources created for the Amazon MSK Knowledge Generator, check with Getting Began within the GitHub repository.

Creating an MSK cluster was a prerequisite of this publish, and for those who’d like to scrub up the MSK cluster as nicely, you should use the next command:

aws kafka delete-cluster --cluster-arn "${MSK_CLUSTER_ARN}"

There isn’t a further value to utilizing AWS CloudShell, however for those who’d wish to delete your shell, check with the Delete a shell session residence listing within the AWS CloudShell Consumer Information.

Conclusion

Apache Kafka nearest duplicate fetching, or rack consciousness, is a strategic cost-optimization method. By implementing it for Amazon MSK customers on Amazon EKS, you may considerably cut back cross-zone site visitors prices whereas sustaining strong, distributed streaming architectures. Open supply instruments resembling Kyverno can simplify advanced configuration challenges and drive significant financial savings.The answer we’ve demonstrated gives a robust, repeatable method to dynamically injecting Availability Zone info into Kubernetes pods, optimize Kafka client routing, and decrease cut back switch prices.

Extra sources

To be taught extra about rack consciousness with Amazon MSK, check with Scale back community site visitors prices of your Amazon MSK customers with rack consciousness.

Concerning the authors

Austin Groeneveld is a Streaming Specialist Options Architect at Amazon Net Providers (AWS), based mostly within the San Francisco Bay Space. On this position, Austin is captivated with serving to prospects speed up insights from their information utilizing the AWS platform. He’s significantly fascinated by the rising position that information streaming performs in driving innovation within the information analytics house. Outdoors of his work at AWS, Austin enjoys watching and taking part in soccer, touring, and spending high quality time together with his household.

Austin Groeneveld is a Streaming Specialist Options Architect at Amazon Net Providers (AWS), based mostly within the San Francisco Bay Space. On this position, Austin is captivated with serving to prospects speed up insights from their information utilizing the AWS platform. He’s significantly fascinated by the rising position that information streaming performs in driving innovation within the information analytics house. Outdoors of his work at AWS, Austin enjoys watching and taking part in soccer, touring, and spending high quality time together with his household.

Farooq Ashraf is a Senior Options Architect at AWS, specializing in SaaS, Generative AI, and MLOps. He’s captivated with mixing multi-tenant SaaS ideas with Cloud companies to innovate scalable options for the digital enterprise, and has a number of weblog posts, and workshops to his credit score.

Farooq Ashraf is a Senior Options Architect at AWS, specializing in SaaS, Generative AI, and MLOps. He’s captivated with mixing multi-tenant SaaS ideas with Cloud companies to innovate scalable options for the digital enterprise, and has a number of weblog posts, and workshops to his credit score.