Machine learning models require labeled data in order to learn and make reliable predictions. Advancements in artificial intelligence (AI) and large language models (LLMs) are driven more by data quality than by data quantity or model architecture. This means high-quality data labeling is more important than ever, and despite the rise in automated data labeling tools, human expertise remains irreplaceable. Humans are good at understanding context, emotions, and subtle nuances that algorithms may overlook or misinterpret because of their reliance on predefined patterns and statistical models. For example, in tasks like sentiment analysis or image labeling, human annotators can recognize irony, sarcasm, cultural references, and emotional undertones that may be difficult for machines to detect accurately. Moreover, humans can provide valuable feedback to improve algorithmic approaches over time. By keeping humans in the loop, organizations can mitigate risks associated with biases and errors that automated tools on their own might introduce.

In my four years of leading AI development projects and scaling teams, I’ve explored a wide array of approaches to building a data labeling team. In this article, I break down the different types of labeling teams, recommend use cases, and provide specific guidance on how to structure, recruit, and train your team.

Types of Data Labeling Teams

When it comes to data labeling for machine learning, there’s no one-size-fits-all solution. Different projects demand different strategies based on their data types, complexity, and intended use cases. The spectrum of data labeling teams typically spans three main types: human-powered (or manual), fully automated, and hybrid. Each approach brings unique strengths to the table, along with certain limitations.

Manual Annotation Teams

Composed primarily of annotators who label the data by hand, manual annotation teams rely entirely on human cognitive abilities to apply context, culture, and linguistic subtleties that machines often struggle to grasp. This approach suits projects requiring detailed understanding and interpretation of complex or nuanced data. Manual annotation has scalability and cost challenges: It is inherently time-consuming and labor-intensive. Despite this, subject matter experts remain indispensable for projects where high-quality labels are critical, such as medical diagnostics or complex legal texts.

One of the most well-known cases of manual annotation is the original iteration of reCAPTCHA. Designed by Guatemalan computer scientist Luis von Ahn, the system was made to protect websites from bots, but it also contributed significantly to the creation of labeled datasets. When users interacted with reCAPTCHA challenges, like identifying all images with traffic lights or typing distorted text, they also created input-output pairs that were used for training machine learning models in object recognition. (The service has since pivoted to using behavior analysis to detect bots.)

Automated Annotation Teams

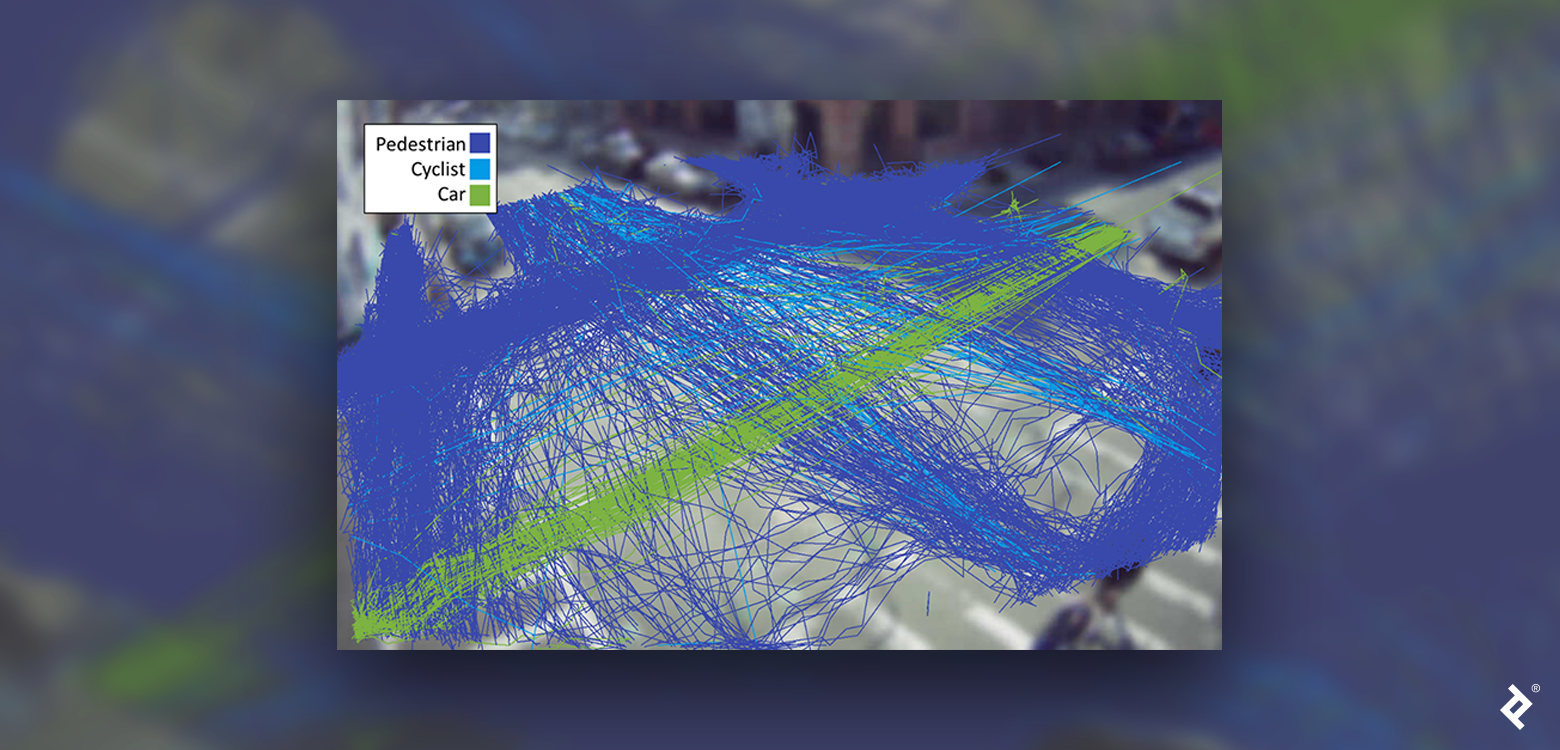

Automated annotation teams rely on algorithms and machine learning models to annotate data with minimal human intervention. Software engineers, data scientists, and machine learning specialists form the backbone of this approach, developing, training, and maintaining the programmatic labeling models that operate in the background. Automated annotation excels in projects such as optical character recognition, which scans documents or images and quickly converts them into searchable text. It is also highly effective in video frame labeling, automatically annotating thousands of frames to identify objects within video streams.

Despite advantages in speed and scalability, this approach isn’t used on its own, because if you already have a model that can predict the labels, then there’s little reason to train another model from scratch using those same labels. What’s more, automated annotation is not ideal for data that requires intricate contextual understanding or subjective interpretation. It relies heavily on well-defined statistical patterns, making it prone to biases or misclassifications when trained on incomplete or skewed datasets. This inherent limitation emphasizes the need for quality control measures and human oversight.

Hybrid Annotation Teams

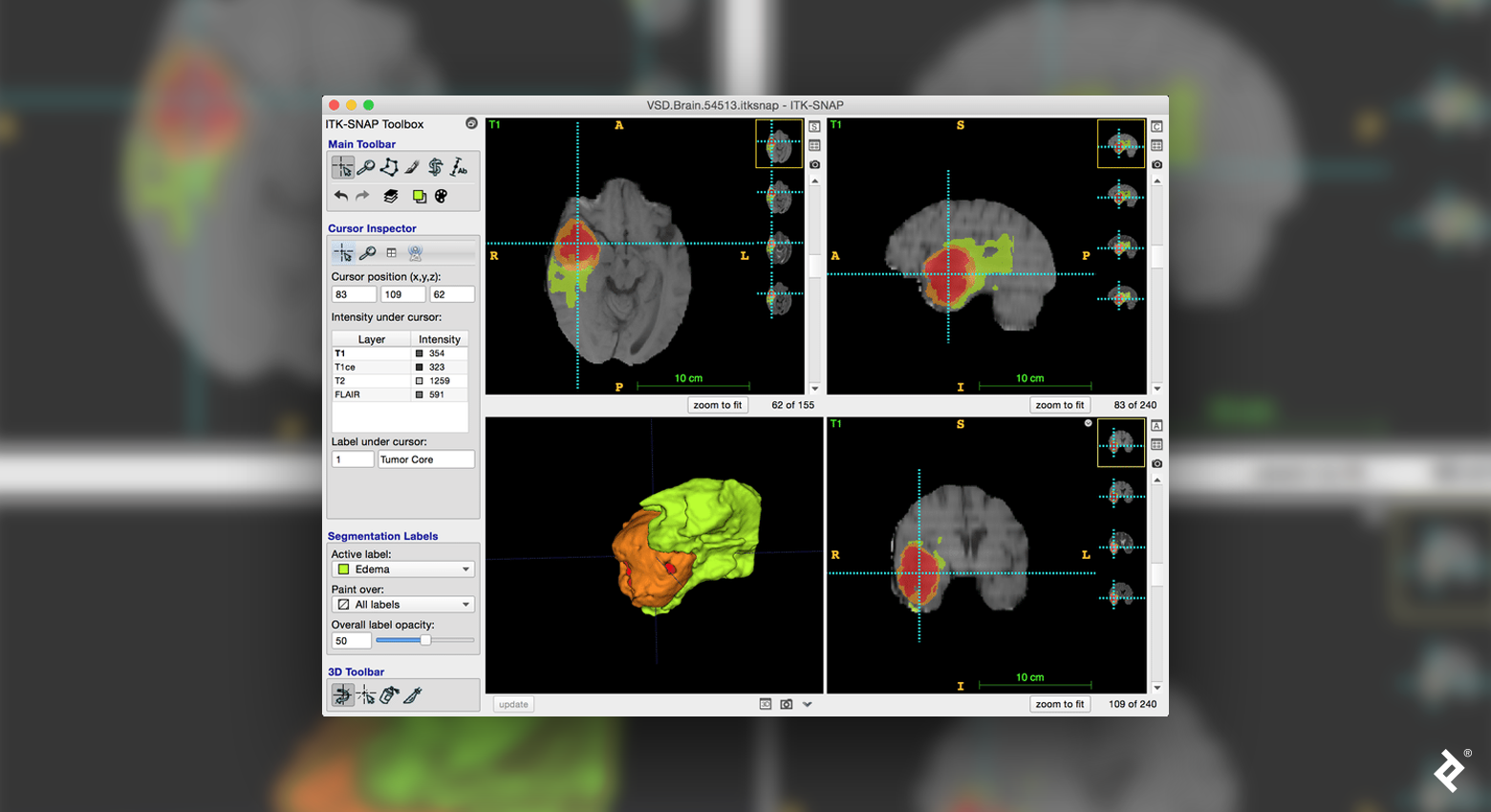

The hybrid semi-supervised approach blends the speed of automated labeling with the precision of human oversight to strike a balance between efficiency and accuracy. This approach typically involves leveraging machine learning models for large-scale labeling tasks, while human labelers handle quality control, edge cases, and ambiguous data. In projects like medical image classification, for example, automated algorithms or models first identify potential abnormalities in MRI scans, and then doctors verify the accuracy of the results.

A key advantage of hybrid teams is their flexibility. Automated models handle repetitive, high-volume tasks that don’t require nuanced judgment, allowing human experts to focus on more challenging cases. This workflow reduces annotation time while maintaining data quality, but integrating machine and human efforts also requires robust workflows and clear communication. Developing guidelines ensures consistent labeling across the team, and continuous feedback loops help refine automated models based on human insights.
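One common way to split work between a model and human labelers is to route predictions by model confidence. The sketch below is illustrative only: the `Prediction` class, the `route_predictions` function, the item IDs, and the 0.9 threshold are all assumptions made for the example, not part of any particular labeling platform.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model's probability for the predicted label (0.0 to 1.0)


def route_predictions(predictions, threshold=0.9):
    """Split predictions into auto-accepted labels and a human review queue.

    The threshold is a hypothetical starting point; in practice it would be
    tuned against audited samples for the project at hand.
    """
    auto_accepted, needs_review = [], []
    for pred in predictions:
        if pred.confidence >= threshold:
            auto_accepted.append(pred)
        else:
            needs_review.append(pred)
    return auto_accepted, needs_review


# Made-up predictions for illustration
predictions = [
    Prediction("scan-001", "normal", 0.98),
    Prediction("scan-002", "abnormal", 0.62),
    Prediction("scan-003", "normal", 0.91),
    Prediction("scan-004", "abnormal", 0.55),
]
accepted, review_queue = route_predictions(predictions, threshold=0.9)
print("Auto-accepted:", [p.item_id for p in accepted])
print("Sent to human review:", [p.item_id for p in review_queue])
```

In high-stakes domains such as medical imaging, a team might choose to send every prediction to an expert and use the confidence score only to prioritize the queue.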

Structuring Your Data Labeling Team

While the roles may vary depending on the specific project, the type of data labeling you choose will determine what kind of specialists you need. Precise definitions of roles and responsibilities are essential to establish efficient workflows. Here are some of the most relevant team members and how they might contribute to a data labeling project:

Team lead/Project manager: The team lead coordinates the team’s activities, establishing annotation guidelines, deadlines, and key metrics to ensure everyone is aligned. For instance, if the project involves annotating videos for a dataset supporting autonomous driving, the lead defines specific parameters like frame rate, object categories, and boundary tolerances. They maintain communication between stakeholders and the annotation team, making sure that client feedback (e.g., requiring more precise pedestrian identification) gets incorporated into updated guidelines. In the case of hybrid teams, they ensure models are regularly updated with manual corrections and that timelines for both teams align.

QA specialist: As the gatekeeper for quality, the QA specialist routinely audits annotations to confirm that they meet the project’s accuracy standards. For example, if an annotator consistently mislabels cancerous tumors in MRI scans in a medical image labeling project, the job of the QA specialist is to catch the discrepancy, work with the team lead to adjust the guidelines, and provide tailored feedback to the annotator. They may run spot-checks or sampling reviews to verify the consistency of the team’s output, which directly impacts the reliability of data models.

Data labelers: Labelers are the primary contributors to the actual task of labeling data. If the project involves annotating e-commerce images for object detection, for example, they might meticulously outline objects like shoes, bags, and clothing. They adhere to guidelines for uniform labeling while seeking clarification on ambiguous cases. For instance, if a new product category like smartwatches appears, they consult the team lead or QA specialist to ensure consistent labeling.

Domain expert/Consultant: When taking a hybrid approach to labeling, domain experts work alongside annotators and engineers to refine models for specific challenges. They might advise on edge cases where automated models struggle, ensuring the system’s rules incorporate expert knowledge. For instance, in an e-commerce image categorization project, they might outline distinctions in fashion styles that manual annotators must identify.

Data scientist: The data scientist defines the strategies for preprocessing and training datasets to optimize the annotation models. Suppose the automated annotation project involves categorizing sentiment in customer emails. In that case, the data scientist designs data pipelines that filter, clean, and balance the dataset for accurate sentiment detection. They analyze annotated outputs to identify biases, gaps, or error patterns, providing insights to machine learning engineers for improving the models.

For hybrid and automated data labeling projects, you will need to bring engineers on board who can handle development tasks:

Software developer: Developers build and maintain the infrastructure that integrates the annotation models into the broader workflow. For instance, in an autonomous driving project where videos are analyzed for lane detection, they would develop a tool to feed real-time video into the models, capture the annotations, and store them in a structured database. Developers also implement APIs that enable annotators to query and validate automated results efficiently.

Machine learning engineer: The machine learning engineer designs and trains the models used for automated annotation. If the project involves labeling images for facial recognition in security systems, the engineer would develop a convolutional neural network (CNN) capable of recognizing various facial features. The engineer also refines the model based on annotated data to reduce false positives and negatives. The system’s accuracy is improved through continuous testing and retraining, especially when new facial patterns or angles are introduced.

Centralized vs. Decentralized Data Labeling Teams

The best model for your data labeling team hinges on factors like project scope, data complexity, security requirements, and budget.

In-house Centralized Team

This model involves building a dedicated team of labelers or annotators within the organization. With in-house staff, management oversees quality standards and processes to ensure that annotations align with internal team guidelines. But this level of control requires significant investment, as training, managing, and scaling the team are inherently resource-intensive tasks. Nevertheless, this approach is particularly valuable when dealing with sensitive data that can’t be outsourced or where consistent labeling quality is paramount.

This type of team is usually composed of annotators, quality assurance specialists, project managers, and platform engineers who set up annotation tools and workflows. Data scientists and machine learning engineers also support the team by providing labeling guidelines and refining labeling processes. They are all directly managed by a central data team, often under the chief data officer (CDO) or chief technology officer (CTO). Project managers work closely with upper management to align labeling priorities with organizational goals.

Outsourced Centralized Team

Outsourcing to third-party vendors or service providers offers immediate access to trained annotators. This model enables scalability, tapping into a much larger workforce than an in-house team could provide alone. While quality control and communication can present challenges, reputable data labeling companies typically have well-established processes and specialized expertise to deliver reliable results. Outsourcing is often beneficial for projects where flexibility and scalability are crucial but controlling sensitive data is less of a concern. With outsourcing, the annotators, as well as the quality control specialists, are supplied by a service provider. A project manager or data team head supervises the vendor relationship and works under the CDO or CTO to ensure that quality standards and expectations are met.

Crowdsourcing

Crowdsourcing distributes annotation tasks to a diverse, decentralized workforce using platforms like Amazon Mechanical Turk or Clickworker. This model’s key advantage is rapid scalability, leveraging a vast pool of workers from various backgrounds and time zones. However, maintaining quality control across such a varied workforce requires careful management. Techniques like consensus-based voting help verify label quality and accuracy, while clear guidelines provide consistent expectations.
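As a rough illustration of consensus-based voting, the sketch below accepts the most common label for an item only when enough workers agree and otherwise leaves the item unresolved for expert review. The function name `consensus_label`, the 60% agreement threshold, and the sample votes are assumptions made for this example.

```python
from collections import Counter


def consensus_label(votes, min_agreement=0.6):
    """Return (label, agreement) when the top label reaches min_agreement.

    If agreement is too low, return (None, agreement) so the item can be
    escalated instead of silently accepted.
    """
    if not votes:
        return None, 0.0
    label, top_count = Counter(votes).most_common(1)[0]
    agreement = top_count / len(votes)
    if agreement >= min_agreement:
        return label, agreement
    return None, agreement


# Hypothetical votes from several crowd workers per image
votes_by_item = {
    "img-1": ["cat", "cat", "cat"],
    "img-2": ["cat", "dog", "cat", "cat"],
    "img-3": ["dog", "cat", "bird"],
}
results = {item: consensus_label(votes) for item, votes in votes_by_item.items()}
for item, (label, agreement) in results.items():
    status = label if label is not None else "needs expert review"
    print(f"{item}: {status} (agreement {agreement:.2f})")
```

Simple majority voting treats every worker as equally reliable; weighting votes by each worker’s past accuracy is a common refinement when that history is available.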

A crowdsourced team could potentially involve thousands of distributed workers with varied skill levels. The team is typically supported by platform engineers and QA specialists who set up quality control systems. The work is managed by the crowdsourcing platform, often under the supervision of a data project manager or data operations manager, who coordinates between platform workers and the organization. Oversight is the responsibility of the data team, which falls under the CDO or CTO.

Community-based Labeling

Harnessing dedicated volunteers’ enthusiasm and collective expertise, community-based labeling incentivizes contributors through gamification or shared interests. This approach relies on people who are passionate enough about the subject matter to annotate data accurately and consistently. Although quality control can be tricky, establishing community guidelines and moderation mechanisms can help.

These teams usually feature volunteers, moderators, community managers, and QA specialists, as well as platform engineers who help configure the tools and workflow. From a structural standpoint, community managers can report to the project manager or head of the data labeling team.

Recruiting and Training Data Labelers

Ideal data labeling candidates demonstrate attention to detail, an ability to interpret nuanced information, and a willingness to follow guidelines closely. For manual labeling projects, human annotators can come from diverse fields, but they need a keen eye for detail and the ability to work comfortably with large volumes of data. Domain expertise is also desirable to provide accurate and contextually relevant annotations for the specific project at hand. Familiarity with specialized tools like Labelbox or CVAT is advantageous, as it streamlines the annotation process. Additionally, annotators should be able to handle quality control tasks to ensure uniform standards are met across the dataset.

Automated labeling teams can be the most challenging to recruit for because of the highly technical skills required. Data scientists and machine learning engineers are among the most sought-after specialists now and for the foreseeable future. According to the World Economic Forum, the demand for these professionals is expected to grow 40% by 2027. As they are the backbone of automated data labeling models, they should have experience with the algorithms and frameworks that underpin automated annotation pipelines, such as CNNs, natural language processing (NLP), and time series analysis. Knowledge of data preprocessing, as well as model training and validation, is crucial to ensure that automated models remain accurate across varied datasets. Additionally, proficiency in programming languages (e.g., Python, R, or SQL) and familiarity with cloud platforms are highly valuable.

If you are building a hybrid team, look for strong collaborative skills that can help you connect automated labeling with manual oversight. Annotators should offer insights that improve automated algorithms, while data scientists must be attentive to the annotators’ feedback. These teams benefit significantly from members who can think critically across different domains and proactively share knowledge to enhance workflow efficiency.

Upskilling Your Team

Training programs are an excellent way to ensure your data labeling team operates efficiently and at a high level. You should take a multifaceted approach, in which annotators learn to navigate the complexities of tools, data types, and project guidelines. This goes beyond the basics: They must be proficient with each tool’s advanced features to improve accuracy and productivity.

Each dataset demands a unique approach, so training programs should immerse your staff in the specific labeling techniques needed for different data types. For image data, they might practice placing bounding boxes around distinct objects or applying segmentation techniques that outline object edges accurately. For text, annotators must master entity recognition, categorization, or sentiment tagging. Effective training will help the team create accurate and reliable annotations.

Awareness of quality control will also speed up the process. Annotators should be trained in self-review techniques to identify errors or inconsistencies before data reaches the QA stage. This proactive quality control helps maintain dataset accuracy and adherence to consistent labeling guidelines. Understanding the common error patterns in their particular domain will be crucial to anticipating and addressing challenges early.

In hybrid teams, best practices involve training annotators and engineers to foster collaboration. Annotators should grasp how machine learning models will use their labels, while engineers need a practical understanding of manual annotation challenges. This cross-training ensures all team members appreciate the project’s goals, leading to a cohesive workflow in which manual and automated efforts complement each other.

Scaling a Successful Data Labeling Team

With your team in place, it’s time to establish robust documentation practices and well-defined standard operating procedures. These help with consistency and scalability by providing annotators and data scientists with precise, repeatable guidelines to follow. Create a shared repository that documents key workflows for each data type or annotation task. This repository should include guidelines for edge cases, examples of common annotation errors, and instructions on addressing them. Regularly review these guidelines to adapt to emerging project needs or shifts in annotation standards.

To streamline annotation efforts and minimize downtime, incorporate tools that enhance team collaboration and data management. Tools like GitHub, the open-source OpenProject, and Jira Cloud can help centralize communication and keep project tasks organized while ensuring annotators can easily access necessary guidelines. Use labeling platforms that allow annotations to be stored systematically and help manage workflow processes efficiently. This will make assigning, reviewing, and approving labeling tasks easier while maintaining high-quality data.

Best practices in this regard include aligning your team on performance metrics and quality benchmarks by clearly communicating labeling goals, expected accuracy rates, and timelines. Establish periodic audits and QA review points where annotated datasets are sampled and verified for consistency. Build a feedback loop where QA specialists provide actionable insights to annotators, helping them refine their skills and follow guidelines more effectively. Automated reporting tools can also highlight individual and team trends in accuracy or productivity, identifying areas that need attention.
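A periodic audit of this kind can be sketched in a few lines: draw a random sample of annotations for review, then compare each annotator’s labels with the reviewer’s. The record fields (`annotator`, `label`, `reviewed_label`), the 10% sampling rate, and the 95% accuracy target below are hypothetical values chosen for illustration.

```python
import random
from collections import defaultdict


def sample_for_audit(annotations, rate=0.1, seed=0):
    """Draw a reproducible random sample of annotations for QA review."""
    if not annotations:
        return []
    rng = random.Random(seed)  # fixed seed so the same audit batch can be re-created
    k = max(1, round(len(annotations) * rate))
    return rng.sample(annotations, k)


def accuracy_by_annotator(audited):
    """Share of each annotator's audited labels that match the reviewer's label."""
    correct, total = defaultdict(int), defaultdict(int)
    for row in audited:
        total[row["annotator"]] += 1
        if row["label"] == row["reviewed_label"]:
            correct[row["annotator"]] += 1
    return {name: correct[name] / total[name] for name in total}


# 50 made-up annotations split between two annotators
annotations = [
    {"id": i, "annotator": "ann_a" if i % 2 else "ann_b", "label": "shoe"}
    for i in range(50)
]
audit_batch = sample_for_audit(annotations, rate=0.1)
print(f"{len(audit_batch)} of {len(annotations)} annotations selected for review")

# After a QA specialist reviews a batch, each record carries a reviewed_label
audited = [
    {"annotator": "ann_a", "label": "shoe", "reviewed_label": "shoe"},
    {"annotator": "ann_a", "label": "bag", "reviewed_label": "shoe"},
    {"annotator": "ann_b", "label": "bag", "reviewed_label": "bag"},
    {"annotator": "ann_b", "label": "shoe", "reviewed_label": "shoe"},
]
scores = accuracy_by_annotator(audited)
flagged = [name for name, acc in scores.items() if acc < 0.95]
print("Accuracy by annotator:", scores)
print("Below target, schedule feedback:", flagged)
```

With samples this small the accuracy figures are noisy, so a real audit would want a larger batch per annotator before drawing conclusions.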

Finally, emphasize a culture of continuous improvement. Use insights from quality reviews to refine annotation guidelines and update standard operating procedures. Conduct regular training sessions where annotators and data scientists can learn new techniques, address recurring challenges, and share their experiences. By iterating on your processes and investing in team growth, you’ll foster a flexible, high-performing data labeling workflow to handle current and future projects.

As machine learning and AI keep evolving and being integrated into different industries, the demand for high-quality training data has skyrocketed. Accurate data labeling isn’t just a technical box to tick; it’s a strategic asset that can make or break the usefulness and efficiency of your machine learning models. Teams that can quickly adapt to new data types, handle massive datasets smoothly, and maintain high labeling standards will give their companies a competitive edge in the fast-paced AI world.