Google Cloud today unveiled a slew of database enhancements designed to boost customers’ generative AI initiatives, including the general availability of the ScaNN index, which can support up to 1 billion vectors in AlloyDB, and support for vector search in Memorystore for Valkey 7.2.

As companies build out their GenAI products and strategies, they’re looking to databases that can bring it all together. The ability to create, store, and serve vector embeddings that connect to large language models (LLMs) is a critical piece of these initiatives. To that end, Google Cloud rolled out several enhancements to its database offerings that can help companies move their GenAI projects forward.

First up is the launch of Google’s ScaNN index with AlloyDB, the company’s Postgres-based hosted database service. First introduced in April for AlloyDB Omni, the downloadable version of AlloyDB, the ScaNN index is now generally available with the hosted AlloyDB for PostgreSQL offering, Google Cloud said.

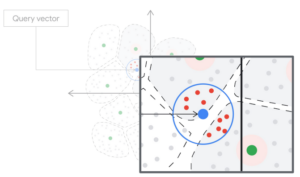

ScaNN is built on the approximate nearest-neighbor technology that Google Research developed for its own search engine, for Google Ads, and for YouTube. That will give Google Cloud customers plenty of headroom for their neural search and GenAI applications, says Andi Gutmans, Google Cloud GM and VP of Engineering, Databases.

“The ScaNN index is the first PostgreSQL-compatible index that can scale to support more than one billion vectors while maintaining state-of-the-art query performance, enabling high-scale workloads for every enterprise,” Gutmans said in a blog post today.

ScaNN is compatible with pgvector, the popular vector plug-in for Postgres, but exceeds it in several ways, according to a Google white paper on ScaNN. Compared to pgvector, ScaNN can create vector indexes up to 8x faster, offers 4x the query performance, uses 3-4x less memory, and delivers up to 10x the write throughput. You can download the Google white paper here.

Another GenAI enhancement can be found with the addition of vector search in the 7.2 versions of Memorystore for Redis and Memorystore for Valkey, a new key-value store offering Google Cloud launched last month. Valkey is an open-source fork of Redis that’s managed by the Linux Foundation, and in which Google Cloud has taken an interest.

“A single Memorystore for Valkey or Memorystore for Redis Cluster instance can perform vector search at single-digit millisecond latency on over a billion vectors with greater than 99% recall,” Gutmans writes in his blog post.
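
For readers unfamiliar with the recall figure Gutmans cites, the following is a minimal Python sketch of how recall is commonly measured for approximate vector search: the approximate result is compared against an exact brute-force search, and recall is the fraction of true nearest neighbors that were returned. It does not use Memorystore, Valkey, or ScaNN; the function names, the random data, and the simulated "approximate" result are all assumptions made for illustration.

```python
import random

def exact_top_k(query, vectors, k):
    """Brute-force search: indices of the k vectors closest to the query
    by squared Euclidean distance."""
    def dist(v):
        return sum((a - b) ** 2 for a, b in zip(query, v))
    return sorted(range(len(vectors)), key=lambda i: dist(vectors[i]))[:k]

def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true top-k neighbors that the approximate result
    also returned."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)

# Illustrative random data (not from the article)
rng = random.Random(0)
vectors = [[rng.random() for _ in range(8)] for _ in range(200)]
query = [rng.random() for _ in range(8)]

truth = exact_top_k(query, vectors, k=10)

# Hypothetical approximate result: pretend an index returned nine of the
# ten true neighbors plus one vector that is not a true neighbor.
wrong = next(i for i in range(len(vectors)) if i not in truth)
approx = truth[:9] + [wrong]

print(recall_at_k(approx, truth))
```

Under this definition, a claim of greater than 99% recall means that, on average, fewer than one in a hundred of the true nearest neighbors is missing from the results the index returns.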

The company also announced the public preview of Memorystore for Valkey 8.0, which will bring major performance and reliability improvements, a new replication scheme, networking enhancements, and detailed visibility into performance and resource utilization, the database GM says. Memorystore for Valkey 8.0 delivers up to twice the queries per second compared to Memorystore for Redis Cluster, at microsecond latency, Gutmans says.

Google Cloud announced updates to several other products, including Firebase, Spanner, and Gemini. You can read more about them here.
