Introduction

Indexes are a crucial part of proper data modeling for all databases, and DynamoDB is no exception. DynamoDB’s secondary indexes are a powerful tool for enabling new access patterns for your data.

In this post, we’ll look at DynamoDB secondary indexes. First, we’ll start with some conceptual points about how to think about DynamoDB and the problems that secondary indexes solve. Then, we’ll look at some practical tips for using secondary indexes effectively. Finally, we’ll close with some thoughts on when you should use secondary indexes and when you should look for other solutions.

Let’s get started.

What’s DynamoDB, and what are DynamoDB secondary indexes?

Before we get into use cases and best practices for secondary indexes, we should first understand what DynamoDB secondary indexes are. And to do that, we should understand a bit about how DynamoDB works.

This assumes some basic understanding of DynamoDB. We’ll cover the basic points you need to know to understand secondary indexes, but if you’re new to DynamoDB, you may want to start with a more basic introduction.

The Bare Minimum You Need to Know About DynamoDB

DynamoDB is a unique database. It’s designed for OLTP workloads, meaning it’s great for handling a high volume of small operations: think of things like adding an item to a shopping cart, liking a video, or adding a comment on Reddit. In that way, it can handle similar applications as other databases you might have used, like MySQL, PostgreSQL, MongoDB, or Cassandra.

DynamoDB’s key promise is its guarantee of consistent performance at any scale. Whether your table has 1 megabyte of data or 1 petabyte of data, DynamoDB wants to have the same latency for your OLTP-like requests. This is a big deal, as many databases will see reduced performance as you increase the amount of data or the number of concurrent requests. However, providing these guarantees requires some tradeoffs, and DynamoDB has some unique characteristics that you need to understand to use it effectively.

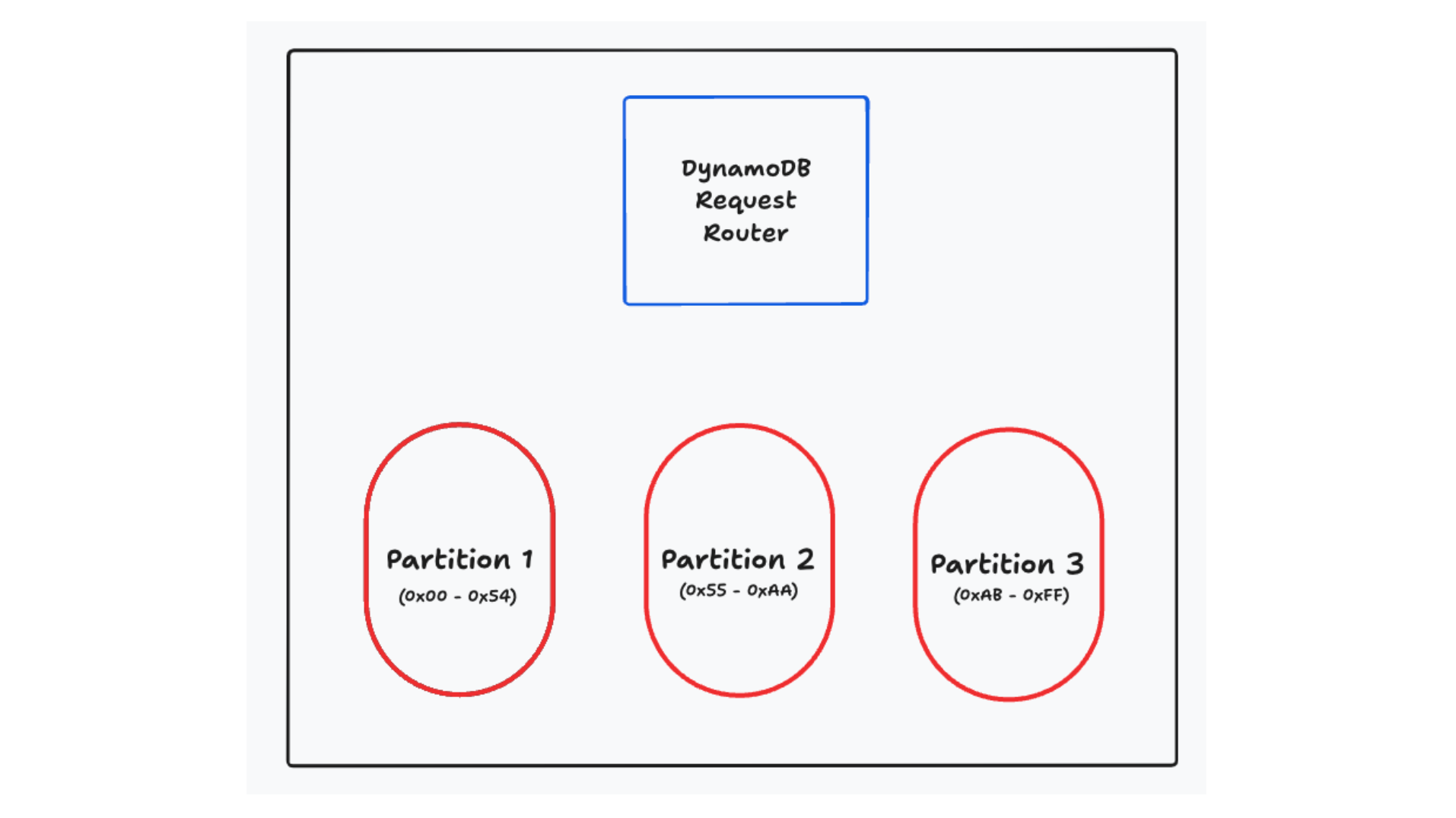

First, DynamoDB horizontally scales your databases by spreading your data across multiple partitions under the hood. These partitions are not visible to you as a user, but they are at the core of how DynamoDB works. You will specify a primary key for your table (either a single element, called a ‘partition key’, or a combination of a partition key and a sort key), and DynamoDB will use that primary key to determine which partition your data lives on. Any request you make will go through a request router that will determine which partition should handle the request. These partitions are small, generally 10GB or less, so they can be moved, split, replicated, and otherwise managed independently.
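To make the routing idea concrete, here is a minimal sketch of hash-based placement. DynamoDB’s actual hash function and partition layout are internal, so the MD5 hash, the fixed partition count, and the `route_to_partition` name are all assumptions chosen purely for illustration.

```python
import hashlib


def route_to_partition(partition_key: str, num_partitions: int) -> int:
    """Map a partition key to a partition number using a stable hash.

    Illustrative only: DynamoDB's real hashing scheme is not public.
    """
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


# Items that share a partition key always land on the same partition.
keys = ["acme", "acme", "globex", "initech"]
placements = [route_to_partition(k, num_partitions=4) for k in keys]
```

The point of the sketch is that placement depends only on the partition key, which is why every request needs to supply it.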

Horizontal scalability via sharding is interesting but is by no means unique to DynamoDB. Many other databases, both relational and non-relational, use sharding to horizontally scale. However, what is unique to DynamoDB is how it forces you to use your primary key to access your data. There is no query planner that translates your requests into a series of queries. You are essentially getting a directly addressable index for your data.

The API for DynamoDB reflects this. There are a series of operations on individual items (GetItem, PutItem, UpdateItem, DeleteItem) that allow you to read, write, and delete individual items. Additionally, there is a Query operation that allows you to retrieve multiple items with the same partition key. If you have a table with a composite primary key, items with the same partition key will be grouped together on the same partition. They will be ordered according to the sort key, allowing you to handle patterns like “Fetch the most recent Orders for a User” or “Fetch the last 10 Sensor Readings for an IoT Device”.

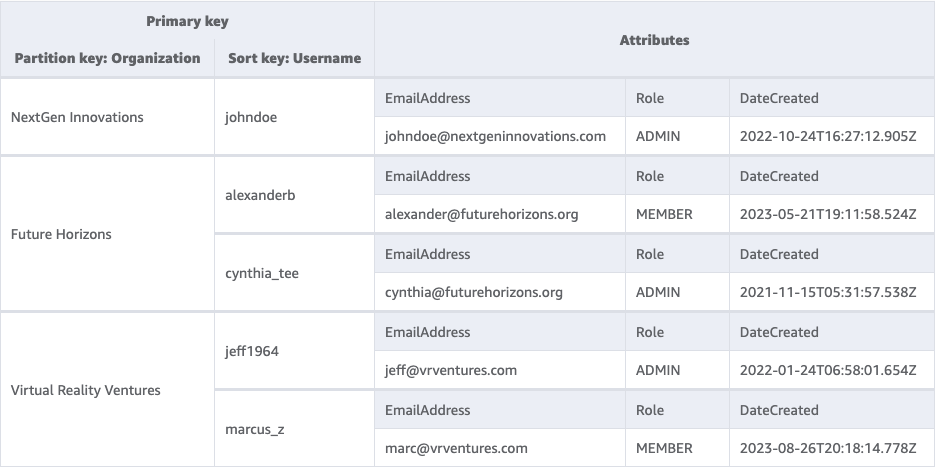

For example, let’s imagine a SaaS application that has a table of Users. All Users belong to a single Group. We might have a table that looks as follows:

We’re using a composite primary key with a partition key of ‘Group’ and a sort key of ‘Username’. This allows us to do operations to fetch or update an individual User by providing their Group and Username. We can also fetch all of the Users for a single Group by providing just the Group to a Query operation.
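As a rough sketch, the two access patterns above could be expressed as request arguments like the following. The attribute names `Group` and `Username` come from the example. The helper names and the table name are hypothetical, and the dictionaries follow the shape accepted by the low-level boto3 client’s `get_item` and `query` calls.

```python
def get_user_request(table_name, group, username):
    """Arguments for GetItem: the full primary key identifies exactly one item."""
    return {
        "TableName": table_name,
        "Key": {
            "Group": {"S": group},        # partition key
            "Username": {"S": username},  # sort key
        },
    }


def list_users_request(table_name, group):
    """Arguments for Query: only the partition key is required."""
    return {
        "TableName": table_name,
        # The attribute name is aliased so it cannot clash with a reserved word.
        "KeyConditionExpression": "#grp = :grp",
        "ExpressionAttributeNames": {"#grp": "Group"},
        "ExpressionAttributeValues": {":grp": {"S": group}},
    }


one_user = get_user_request("Users", "acme", "alice")
all_in_group = list_users_request("Users", "acme")
```

With boto3 installed and credentials configured, these could be passed along as `client.get_item(**one_user)` and `client.query(**all_in_group)`.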

What are secondary indexes, and how do they work?

With some basics in mind, let’s now look at secondary indexes. The best way to understand the need for secondary indexes is to understand the problem they solve. We’ve seen how DynamoDB partitions your data according to your primary key and how it pushes you to use the primary key to access your data. That’s all well and good for some access patterns, but what if you need to access your data in a different way?

In our example above, we had a table of users that we accessed by their group and username. However, we may also need to fetch a single user by their email address. This pattern doesn’t fit with the primary key access pattern that DynamoDB pushes us towards. Because our table is partitioned by different attributes, there’s not a clear way to access our data in the way we want. We could do a full table scan, but that’s slow and inefficient. We could duplicate our data into a separate table with a different primary key, but that adds complexity.

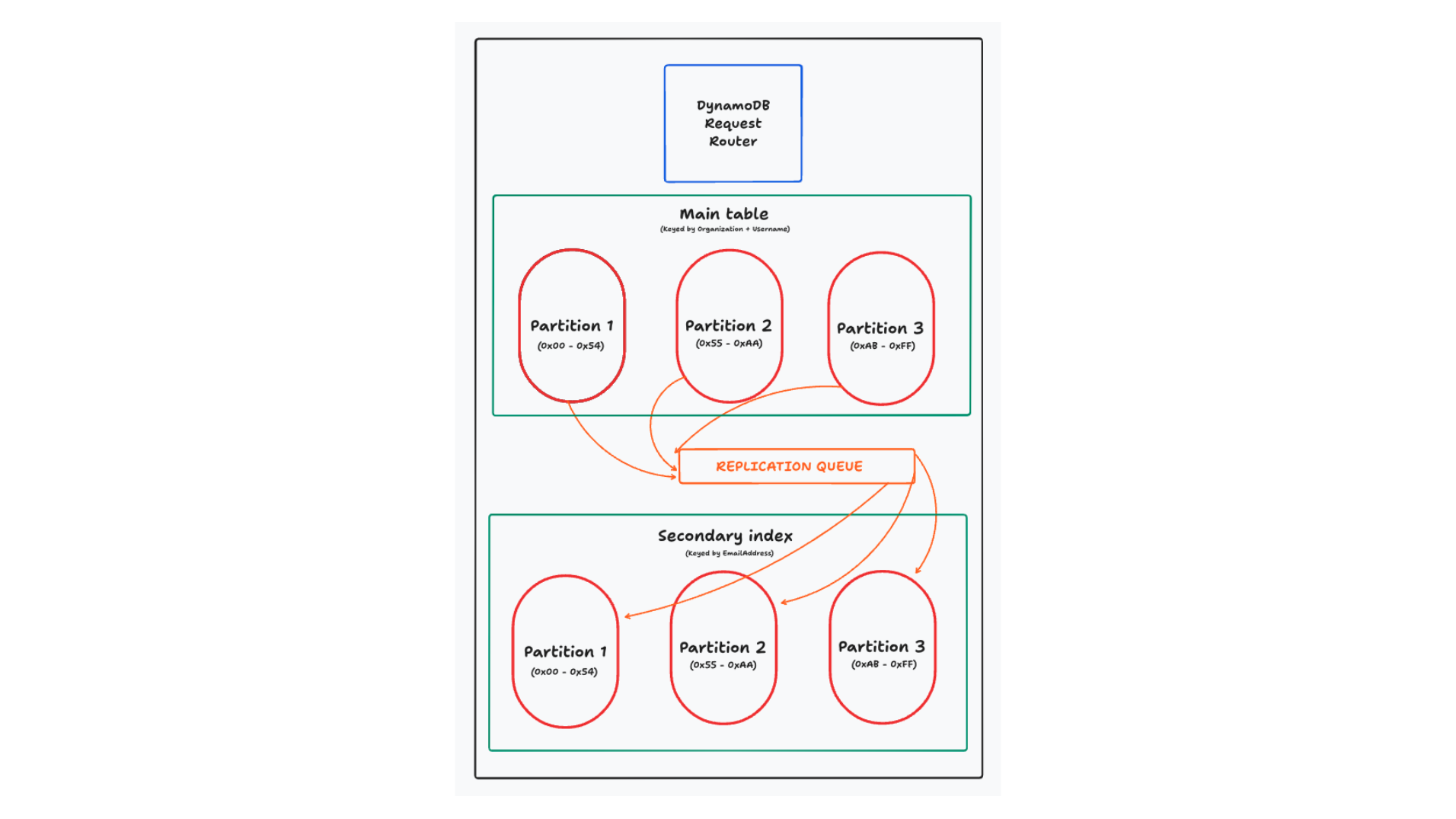

This is where secondary indexes come in. A secondary index is basically a fully managed copy of your data with a different primary key. You will specify a secondary index on your table by declaring the primary key for the index. As writes come into your table, DynamoDB will automatically replicate the data to your secondary index.

Note: Everything in this section applies to global secondary indexes. DynamoDB also provides local secondary indexes, which are a bit different. In almost all cases, you will want a global secondary index. For more details on the differences, check out this article on choosing a global or local secondary index.

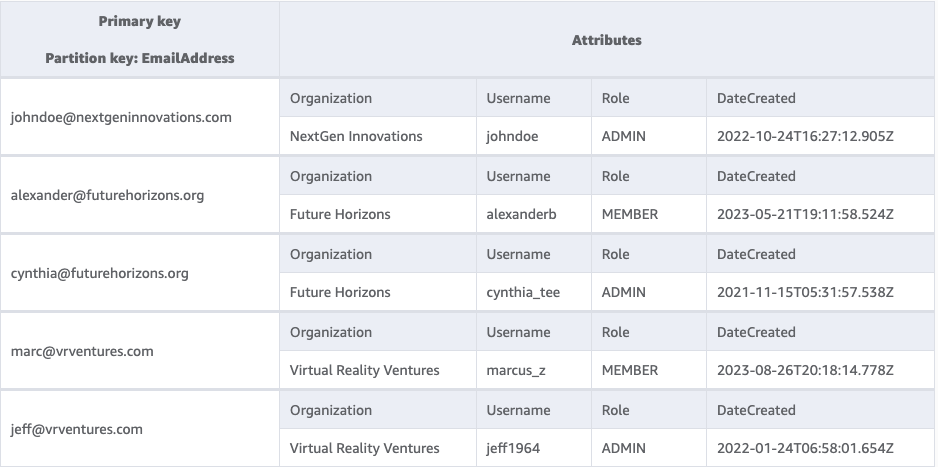

In this case, we’ll add a secondary index to our table with a partition key of “Email”. The secondary index will look as follows:

Notice that this is the same data; it has just been reorganized with a different primary key. Now, we can efficiently look up a user by their email address.
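For illustration, an index like this might be declared and queried roughly as follows. The index name `EmailIndex` and the helper name are hypothetical. The dictionary shapes follow the boto3 low-level client (`GlobalSecondaryIndexes` entries and `query` arguments), and a table using provisioned capacity would also need throughput settings on the index.

```python
# One entry for the GlobalSecondaryIndexes list when creating or updating a table.
EMAIL_INDEX = {
    "IndexName": "EmailIndex",  # hypothetical name
    "KeySchema": [{"AttributeName": "Email", "KeyType": "HASH"}],
    "Projection": {"ProjectionType": "ALL"},  # copy the entire item
}


def find_user_by_email_request(table_name, email):
    """Arguments for a Query against the index rather than the base table."""
    return {
        "TableName": table_name,
        "IndexName": EMAIL_INDEX["IndexName"],
        "KeyConditionExpression": "#email = :email",
        "ExpressionAttributeNames": {"#email": "Email"},
        "ExpressionAttributeValues": {":email": {"S": email}},
    }


lookup = find_user_by_email_request("Users", "alice@example.com")
```

The only change from a base-table Query is the `IndexName` argument; the key condition now targets the index’s key instead of the table’s.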

In some ways, this is very similar to an index in other databases. Both provide a data structure that is optimized for lookups on a particular attribute. But DynamoDB’s secondary indexes are different in a few key ways.

First, and most importantly, DynamoDB’s indexes live on entirely different partitions than your main table. DynamoDB wants every lookup to be efficient and predictable, and it wants to provide linear horizontal scaling. To do this, it needs to reshard your data by the attributes you’ll use to query it.

Other distributed databases generally don’t reshard your data for the secondary index. They’ll usually just maintain the secondary index for all data on the shard. However, if your indexes don’t use the shard key, you’re losing some of the benefits of horizontally scaling your data, as a query without the shard key will need to do a scatter-gather operation across all shards to find the data you’re looking for.

A second way that DynamoDB’s secondary indexes are different is that they (often) copy the entire item to the secondary index. For indexes on a relational database, the index will often contain a pointer to the primary key of the item being indexed. After locating a relevant record in the index, the database will then need to go fetch the full item. Because DynamoDB’s secondary indexes are on different nodes than the main table, they want to avoid a network hop back to the original item. Instead, you’ll copy as much data as you need into the secondary index to handle your read.

Secondary indexes in DynamoDB are powerful, but they have some limitations. First off, they are read-only: you can’t write directly to a secondary index. Rather, you will write to your main table, and DynamoDB will handle the replication to your secondary index. Second, you are charged for the write operations to your secondary indexes. Thus, adding a secondary index to your table will often double the total write costs for your table.

Tips for using secondary indexes

Now that we understand what secondary indexes are and how they work, let’s talk about how to use them effectively. Secondary indexes are a powerful tool, but they can be misused. Here are some tips for using secondary indexes effectively.

Try to have read-only patterns on secondary indexes

The first tip seems obvious: secondary indexes can only be used for reads, so you should aim to have read-only patterns on your secondary indexes! And yet, I see this mistake all the time. Developers will first read from a secondary index, then write to the main table. This results in extra cost and extra latency, and you can often avoid it with some upfront planning.

If you’ve read anything about DynamoDB data modeling, you probably know that you should think about your access patterns first. It’s not like a relational database where you first design normalized tables and then write queries to join them together. In DynamoDB, you should think about the actions your application will take, and then design your tables and indexes to support those actions.

When designing my table, I like to start with the write-based access patterns first. With my writes, I’m often maintaining some type of constraint, such as uniqueness on a username or a maximum number of members in a group. I want to design my table in a way that makes this straightforward, ideally without using DynamoDB Transactions or using a read-modify-write pattern that could be subject to race conditions.

As you work through these, you’ll often find that there’s a ‘primary’ way to identify your item that matches up with your write patterns. This will end up being your primary key. Then, adding in additional, secondary read patterns is easy with secondary indexes.

In our Users example before, every User request will likely include the Group and the Username. This will allow me to look up the individual User record as well as authorize specific actions by the User. The email address lookup may be for less prominent access patterns, like a ‘forgot password’ flow or a ‘search for a user’ flow. These are read-only patterns, and they fit well with a secondary index.

Use secondary indexes when your keys are mutable

A second tip for using secondary indexes is to use them for mutable values in your access patterns. Let’s first understand the reasoning behind it, and then look at situations where it applies.

DynamoDB allows you to update an existing item with the UpdateItem operation. However, you cannot change the primary key of an item in an update. The primary key is the unique identifier for an item, and changing the primary key is basically creating a new item. If you want to change the primary key of an existing item, you’ll need to delete the old item and create a new one. This two-step process is slower and more costly. Often you’ll need to read the original item first, then use a transaction to delete the original item and create a new one in the same request.

On the other hand, if you have this mutable value in the primary key of a secondary index, then DynamoDB will handle this delete + create process for you during replication. You can issue a simple UpdateItem request to change the value, and DynamoDB will handle the rest.
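A toy model can show why this is convenient. The class below is an in-memory stand-in, not DynamoDB code: `ToyTable` and its methods are hypothetical, and the index is simply recomputed on read to mimic managed replication. It shows a score changing through a plain attribute update while the index ordering follows along.

```python
class ToyTable:
    """In-memory stand-in for a table with one secondary index."""

    def __init__(self, index_pk, index_sk):
        self.items = {}  # primary key -> item attributes
        self.index_pk = index_pk
        self.index_sk = index_sk

    def put_item(self, key, item):
        self.items[key] = dict(item)

    def update_item(self, key, **changes):
        # The primary key stays fixed; only attributes change.
        self.items[key].update(changes)

    def query_index(self, pk_value, descending=False):
        # Recomputing on read models replication: the index always reflects
        # the current values of each item's index key attributes.
        matches = [i for i in self.items.values() if i.get(self.index_pk) == pk_value]
        return sorted(matches, key=lambda i: i[self.index_sk], reverse=descending)


board = ToyTable(index_pk="Game", index_sk="Score")
board.put_item("player#1", {"Player": "ana", "Game": "chess", "Score": 120})
board.put_item("player#2", {"Player": "bo", "Game": "chess", "Score": 150})

# One attribute update; no delete-and-recreate of the base item.
board.update_item("player#1", Score=200)
leaders = [i["Player"] for i in board.query_index("chess", descending=True)]
```

After the update, `leaders` lists ana ahead of bo, even though the write only addressed the item by its stable primary key.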

I see this pattern come up in two main situations. The first, and most common, is when you have a mutable attribute that you want to sort on. The canonical examples here are a leaderboard for a game where people are continually racking up points, or a continually updating list of items where you want to display the most recently updated items first. Think of something like Google Drive, where you can sort your files by ‘last modified’.

A second pattern where this comes up is when you have a mutable attribute that you want to filter on. Here, you can think of an ecommerce store with a history of orders for a user. You may want to allow the user to filter their orders by status: show me all my orders that are ‘shipped’ or ‘delivered’. You can build this into your partition key or the beginning of your sort key to allow exact-match filtering. As the item changes status, you can update the status attribute and lean on DynamoDB to group the items correctly in your secondary index.

In both of these situations, moving this mutable attribute to your secondary index will save you time and money. You’ll save time by avoiding the read-modify-write pattern, and you’ll save money by avoiding the extra write costs of the transaction.

Additionally, note that this pattern fits well with the previous tip. It’s unlikely you will identify an item for writing based on a mutable attribute like its previous score, its previous status, or the last time it was updated. Rather, you’ll update by a more persistent value, like the user’s ID, the order ID, or the file’s ID. Then, you’ll use the secondary index to sort and filter based on the mutable attribute.

Avoid the ‘fat’ partition

We saw above that DynamoDB divides your data into partitions based on the primary key. DynamoDB aims to keep these partitions small, 10GB or less, and you should aim to spread requests across your partitions to get the benefits of DynamoDB’s scalability.

This generally means you should use a high-cardinality value in your partition key. Think of something like a username, an order ID, or a sensor ID. There are large numbers of values for these attributes, and DynamoDB can spread the traffic across your partitions.

Often, I see people understand this principle in their main table, but then completely forget about it in their secondary indexes. Typically, they want ordering across the entire table for a type of item. If they want to retrieve users alphabetically, they’ll use a secondary index where all users have USERS as the partition key and the username as the sort key. Or, if they want ordering of the most recent orders in an ecommerce store, they’ll use a secondary index where all orders have ORDERS as the partition key and the timestamp as the sort key.

This pattern can work for low-traffic applications where you won’t come close to the DynamoDB partition throughput limits, but it’s a dangerous pattern for a high-traffic application. All of your traffic may be funneled to a single physical partition, and you can quickly hit the write throughput limits for that partition.

Further, and most dangerously, this can cause problems for your main table. If your secondary index is getting write throttled during replication, the replication queue will back up. If this queue backs up too much, DynamoDB will start rejecting writes on your main table.

This is designed to help you: DynamoDB wants to limit the staleness of your secondary index, so it will prevent you from having a secondary index with a large amount of lag. However, it can be a surprising situation that pops up when you’re least expecting it.

Use sparse indexes as a global filter

People often think of secondary indexes as a way to replicate all of their data with a new primary key. However, you don’t need all of your data to end up in a secondary index. If you have an item that doesn’t match the index’s key schema, it won’t be replicated to the index.

This can be really useful for providing a global filter on your data. The canonical example I use for this is a message inbox. In your main table, you might store all the messages for a particular user ordered by the time they were created.

But if you’re like me, you have a lot of messages in your inbox. Further, you might treat unread messages as a ‘todo’ list, like little reminders to get back to someone. Accordingly, I usually only want to see the unread messages in my inbox.

You could use your secondary index to provide this global filter where unread == true. Perhaps your secondary index partition key is something like ${userId}#UNREAD, and the sort key is the timestamp of the message. When you create the message initially, it will include the secondary index partition key value and thus will be replicated to the unread messages secondary index. Later, when a user reads the message, you can change the status to READ and delete the secondary index partition key value. DynamoDB will then remove it from your secondary index.

I use this trick all the time, and it’s remarkably effective. Further, a sparse index will save you money. Any updates to read messages will not be replicated to the secondary index, and you’ll save on write costs.
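The sparse-index behavior can be modeled in a few lines of plain Python. This is a simulation under assumed names, not DynamoDB code: `UnreadPK`, `CreatedAt`, `build_sparse_index`, and `mark_read` are all hypothetical. An item appears in the index only while it carries both index key attributes.

```python
def build_sparse_index(items, pk_attr, sk_attr):
    """Return index entries for items that carry both index key attributes."""
    indexed = [i for i in items if pk_attr in i and sk_attr in i]
    return sorted(indexed, key=lambda i: (i[pk_attr], i[sk_attr]))


def mark_read(message):
    """Flip the status and drop the index partition key attribute."""
    message["Status"] = "READ"
    message.pop("UnreadPK", None)


messages = [
    {"MessageId": "m1", "UserId": "u1", "CreatedAt": "2024-01-01T09:00:00Z",
     "Status": "UNREAD", "UnreadPK": "u1#UNREAD"},
    {"MessageId": "m2", "UserId": "u1", "CreatedAt": "2024-01-02T09:00:00Z",
     "Status": "UNREAD", "UnreadPK": "u1#UNREAD"},
]

mark_read(messages[0])  # m1 drops out of the unread index
unread_ids = [m["MessageId"] for m in build_sparse_index(messages, "UnreadPK", "CreatedAt")]
```

In a real table, the equivalent of `mark_read` would be a single UpdateItem whose update expression sets the status and removes the index key attribute.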

Narrow your secondary index projections to reduce index size and/or writes

For our last tip, let’s take the previous point a little further. We just saw that DynamoDB won’t include an item in your secondary index if the item doesn’t have the primary key elements for the index. This trick can be used for not only primary key elements but also for non-key attributes in the data!

When you create a secondary index, you can specify which attributes from the main table you want to include in the secondary index. This is called the projection of the index. You can choose to include all attributes from the main table, only the primary key attributes, or a subset of the attributes.

While it’s tempting to include all attributes in your secondary index, this can be a costly mistake. Remember that every write to your main table that changes the value of a projected attribute will be replicated to your secondary index. A single secondary index with full projection effectively doubles the write costs for your table. Each additional secondary index increases your write costs by 1/(N + 1), where N is the number of secondary indexes before the new one. Going from one index to two, for example, raises write costs by one half, from twice the base table cost to three times.

Additionally, your write costs are calculated based on the size of your item. Each 1KB of data written to your table uses a WCU. If you’re copying a 4KB item to your secondary index, you’ll be paying the full 4 WCUs on both your main table and your secondary index.

Thus, there are two ways that you can save money by narrowing your secondary index projections. First, you can avoid certain writes altogether. If you have an update operation that doesn’t touch any attributes in your secondary index projection, DynamoDB will skip the write to your secondary index. Second, for those writes that do replicate to your secondary index, you can save money by reducing the size of the item that is replicated.
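The arithmetic is easy to sketch. The helper below assumes standard writes at one WCU per 1KB, rounded up, and treats each index write as a single write of the projected size. The function names and the 300-byte projection are hypothetical, and the model ignores cases where changing an index key attribute costs more than one index write.

```python
import math


def wcus(size_bytes):
    """Standard write cost: one WCU per 1 KB, rounded up."""
    return math.ceil(size_bytes / 1024)


def write_cost(item_bytes, projected_bytes_per_index):
    """Base-table WCUs plus WCUs for each index this write replicates to."""
    return wcus(item_bytes) + sum(wcus(b) for b in projected_bytes_per_index)


full_projection = write_cost(4096, [4096])  # 4 WCUs on the table + 4 on the index
narrow_projection = write_cost(4096, [300])  # 4 WCUs on the table + 1 on the index
index_skipped = write_cost(4096, [])         # update touches no projected attribute
```

Under these assumptions the same 4KB write costs 8, 5, or 4 WCUs depending on the projection, which is the saving the two strategies above are after.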

This can be a tricky balance to get right. Secondary index projections are not alterable after the index is created. If you find that you need additional attributes in your secondary index, you’ll need to create a new index with the new projection and then delete the old index.

Should you use a secondary index?

Now that we’ve explored some practical advice around secondary indexes, let’s take a step back and ask a more fundamental question: should you use a secondary index at all?

As we’ve seen, secondary indexes help you access your data in a different way. However, this comes at the cost of the additional writes. Thus, my rule of thumb for secondary indexes is:

Use secondary indexes when the reduced read costs outweigh the increased write costs.

This seems obvious when you say it, but it can be counterintuitive as you’re modeling. It seems so easy to say “Throw it in a secondary index” without thinking about other approaches.

To bring this home, let’s look at two situations where secondary indexes might not make sense.

Lots of filterable attributes in small item collections

With DynamoDB, you generally want your primary keys to do your filtering for you. It irks me a little whenever I use a Query in DynamoDB but then perform my own filtering in my application. Why couldn’t I just build that into the primary key?

Despite my visceral reaction, there are some situations where you might want to over-read your data and then filter in your application.

The most common place you’ll see this is when you want to provide a lot of different filters on your data for your users, but the relevant data set is bounded.

Think of a workout tracker. You might want to allow users to filter on a lot of attributes, such as type of workout, intensity, duration, date, and so on. However, the number of workouts a user has is going to be manageable; even a power user will take a while to exceed 1000 workouts. Rather than putting indexes on all of these attributes, you can just fetch all the user’s workouts and then filter in your application.

This is where I recommend doing the math. DynamoDB makes it easy to calculate these two options and get a sense of which one will work better for your application.
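Here is one way that math might look for the over-read option. The sketch assumes the usual Query read pricing (total bytes read rounded up to 4KB units, with an eventually consistent read costing half a unit). The 200-byte workout size, the attribute names, and both helper names are hypothetical.

```python
import math


def query_rcus(total_bytes, strongly_consistent=False):
    """Read cost for one Query: bytes read rounded up to 4 KB units."""
    units = math.ceil(total_bytes / 4096)
    return units if strongly_consistent else units / 2


def filter_workouts(workouts, **criteria):
    """Application-side filtering on any combination of attributes."""
    return [w for w in workouts if all(w.get(k) == v for k, v in criteria.items())]


# Hypothetical numbers: 1,000 workouts of about 200 bytes each.
over_read_cost = query_rcus(1000 * 200)

workouts = [
    {"Type": "run", "Intensity": "high", "Minutes": 30},
    {"Type": "run", "Intensity": "low", "Minutes": 45},
    {"Type": "swim", "Intensity": "high", "Minutes": 20},
]
hard_runs = filter_workouts(workouts, Type="run", Intensity="high")
```

Reading the whole collection costs 24.5 RCUs under these assumptions. That figure can then be weighed against the extra WCUs every workout write would pay for each additional index.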

Lots of filterable attributes in large item collections

Let’s change our situation a bit: what if our item collection is large? What if we’re building a workout tracker for a gym, and we want to allow the gym owner to filter on all of the attributes we mentioned above for all the users in the gym?

This changes the situation. Now we’re talking about hundreds or even thousands of users, each with hundreds or thousands of workouts. It won’t make sense to over-read the entire item collection and do post-hoc filtering on the results.

But secondary indexes don’t really make sense here either. Secondary indexes are good for known access patterns where you can count on the relevant filters being present. If we want our gym owner to be able to filter on a variety of attributes, all of which are optional, we’d need to create a large number of indexes to make this work.

We talked about the potential downsides of query planners before, but query planners have an upside too. In addition to allowing for more flexible queries, they can also do things like index intersections to look at partial results from multiple indexes in composing these queries. You can do the same thing with DynamoDB, but it’s going to result in a lot of back and forth with your application, along with some complex application logic to figure it out.
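To give a sense of that application logic, here is a minimal sketch of a do-it-yourself index intersection. It assumes each set of primary keys came back from a separate Query against a different secondary index. The function name and the sample keys are hypothetical.

```python
def intersect_index_results(*result_sets):
    """Keep only the primary keys that every index query returned."""
    if not result_sets:
        return set()
    common = set(result_sets[0])
    for keys in result_sets[1:]:
        common &= set(keys)
    return common


# Each set stands in for the keys returned by one index Query.
by_type = {"w1", "w2", "w5"}
by_intensity = {"w2", "w3", "w5"}
by_date = {"w2", "w4"}

matching = intersect_index_results(by_type, by_intensity, by_date)
```

Even in this simplified form, every optional filter adds another round trip before the intersection can run, and the surviving keys may still need to be fetched from the main table.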

When I have these types of problems, I generally look for a tool better suited for this use case. Rockset and Elasticsearch are my go-to recommendations here for providing flexible, secondary-index-like filtering across your dataset.

Conclusion

In this post, we learned about DynamoDB secondary indexes. First, we looked at some conceptual bits to understand how DynamoDB works and why secondary indexes are needed. Then, we reviewed some practical tips to understand how to use secondary indexes effectively and to learn their specific quirks. Finally, we looked at how to think about secondary indexes to see when you should use other approaches.

Secondary indexes are a powerful tool in your DynamoDB toolbox, but they are not a silver bullet. As with all DynamoDB data modeling, make sure you carefully consider your access patterns and count the costs before you jump in.

Learn more about how you can use Rockset for secondary-index-like filtering in Alex DeBrie’s blog DynamoDB Filtering and Aggregation Queries Using SQL on Rockset.