I’ve noticed a pattern in the recent evolution of LLM-based applications that appears to be a winning formula. The pattern combines the best of multiple approaches and technologies. It provides value to users and is an effective way to get accurate results with contextual narratives, all from a single prompt. The pattern also takes advantage of the capabilities of LLMs beyond content generation, with a heavy dose of interpretation and summarization. Read on to learn about it!

The Early Days Of Generative AI (Only 18 To 24 Months Ago!)

In the early days, almost all the focus with generative AI and LLMs was on creating answers to user questions. Of course, it was quickly realized that the generated answers were often inconsistent, if not wrong. It turns out that hallucinations are a feature, not a bug, of generative models. Every answer was a probabilistic creation, whether or not the underlying training data contained a real answer! Confidence in this plain vanilla generation approach waned quickly.

In response, people started to focus on fact checking generated answers before presenting them to users, and then providing both updated answers and information on how confident the user could be that an answer is correct. This approach is effectively, “let’s make something up, then try to clean up the errors.” That isn’t a very satisfying approach because it still doesn’t guarantee a good answer. If we have the answer within the underlying training data, why don’t we pull out that answer directly instead of trying to guess our way to it probabilistically? By using a form of ensemble approach, recent offerings are achieving much better results.

Flipping The Script

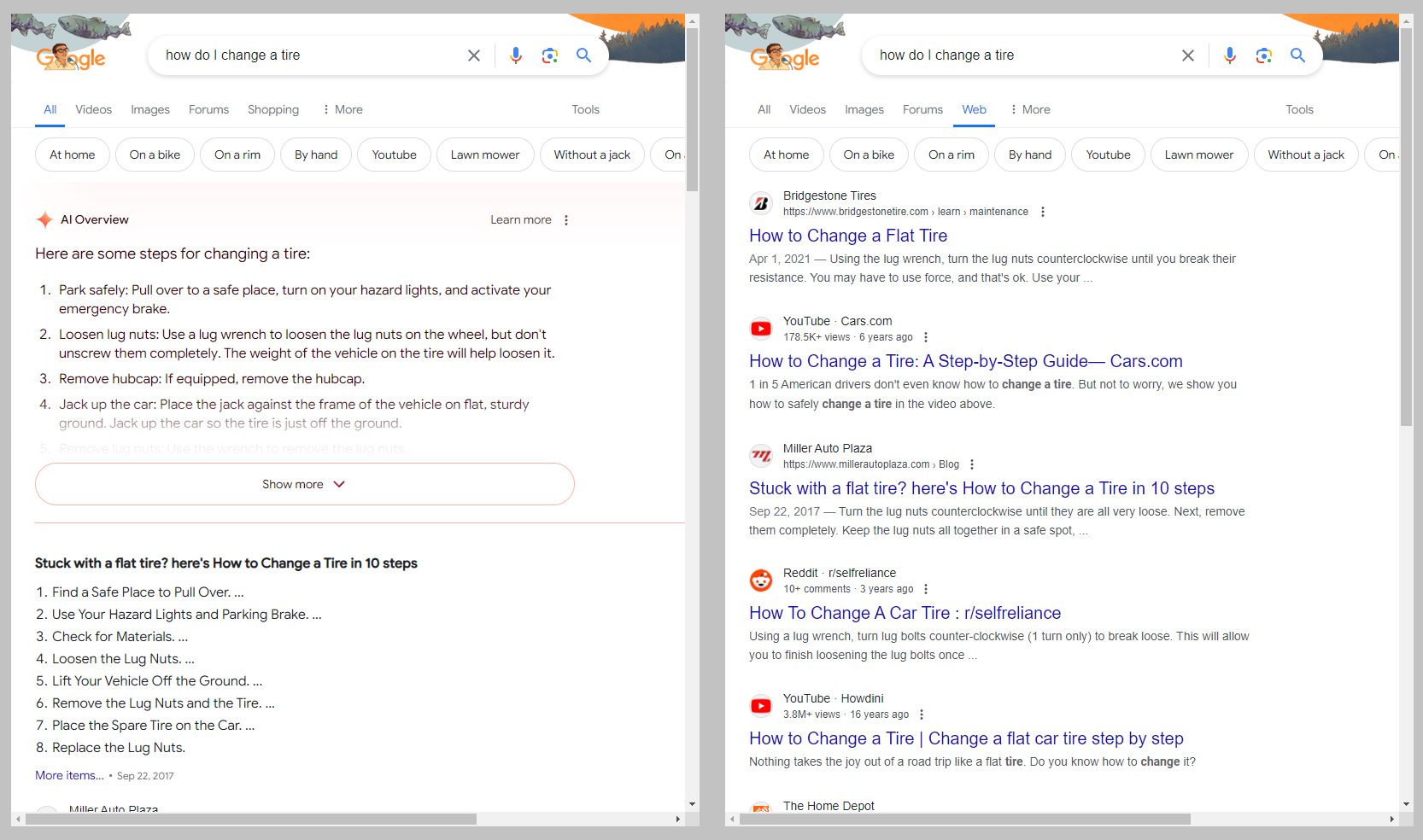

Today, the winning approach is all about first finding facts and then organizing them. Techniques such as Retrieval Augmented Generation (RAG) are helping to rein in errors while providing stronger answers. This approach has been so popular that Google has even begun rolling out a massive change to its search engine interface that leads with generative AI instead of traditional search results. You can see an example of the offering in the image, taken from an Ars Technica article. The approach relies on a variation of traditional search techniques, combined with the interpretation and summarization capabilities of LLMs, more than on an LLM’s generation capabilities.

Image: Ron Amadeo / Google via Ars Technica

The key to these new approaches is that they start by finding sources of information related to a user request via a more traditional search / lookup process. Then, after identifying those sources, the LLM summarizes and organizes the information within them into a narrative instead of just a list of links. This saves users the trouble of reading several of the links to create their own synthesis. For example, instead of reading through five articles listed in a traditional search result and summarizing them mentally, users receive an AI-generated summary of those five articles along with the links. Often, that summary is all that’s needed.
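The two-step flow described above (look up sources first, then have an LLM summarize them into a narrative that keeps the links) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Google or any particular RAG product works: simple keyword overlap stands in for a real search index, and the `llm` argument is a hypothetical placeholder for whatever model API you use. The function names and example URLs are likewise hypothetical.

```python
import re
from typing import Callable


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for a real search index."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[dict], k: int = 5) -> list[dict]:
    """Step 1: find sources related to the request by keyword overlap."""
    q = tokenize(query)
    scored = [(len(q & tokenize(doc["text"])), doc) for doc in corpus]
    # Keep only documents sharing at least one term with the query, best first.
    hits = [(score, doc) for score, doc in scored if score > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in hits[:k]]


def build_prompt(query: str, sources: list[dict]) -> str:
    """Step 2: ask the LLM to summarize and organize only the retrieved sources."""
    numbered = "\n\n".join(
        f"[{i}] {doc['url']}\n{doc['text']}" for i, doc in enumerate(sources, start=1)
    )
    return (
        "Using ONLY the sources below, write a short narrative answer to the "
        "question, citing sources as [n]. If the sources do not answer it, say so.\n\n"
        f"Question: {query}\n\nSources:\n{numbered}"
    )


def answer(query: str, corpus: list[dict], llm: Callable[[str], str], k: int = 5) -> dict:
    """Retrieve first, then summarize; return the narrative plus the links."""
    sources = retrieve(query, corpus, k)
    return {
        "summary": llm(build_prompt(query, sources)),  # hypothetical model call
        "links": [doc["url"] for doc in sources],
    }


if __name__ == "__main__":
    corpus = [
        {"url": "https://example.com/rag",
         "text": "Retrieval augmented generation grounds answers in documents."},
        {"url": "https://example.com/cats", "text": "Cats sleep a lot."},
    ]
    fake_llm = lambda prompt: "RAG grounds answers in retrieved documents [1]."
    result = answer("How does retrieval augmented generation work?", corpus, fake_llm)
    print(result["links"])  # ['https://example.com/rag']
```

The design choice worth noting is that the model only ever sees text the lookup step returned, and the links travel alongside the summary so the user can still check the sources.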

It Isn’t Perfect

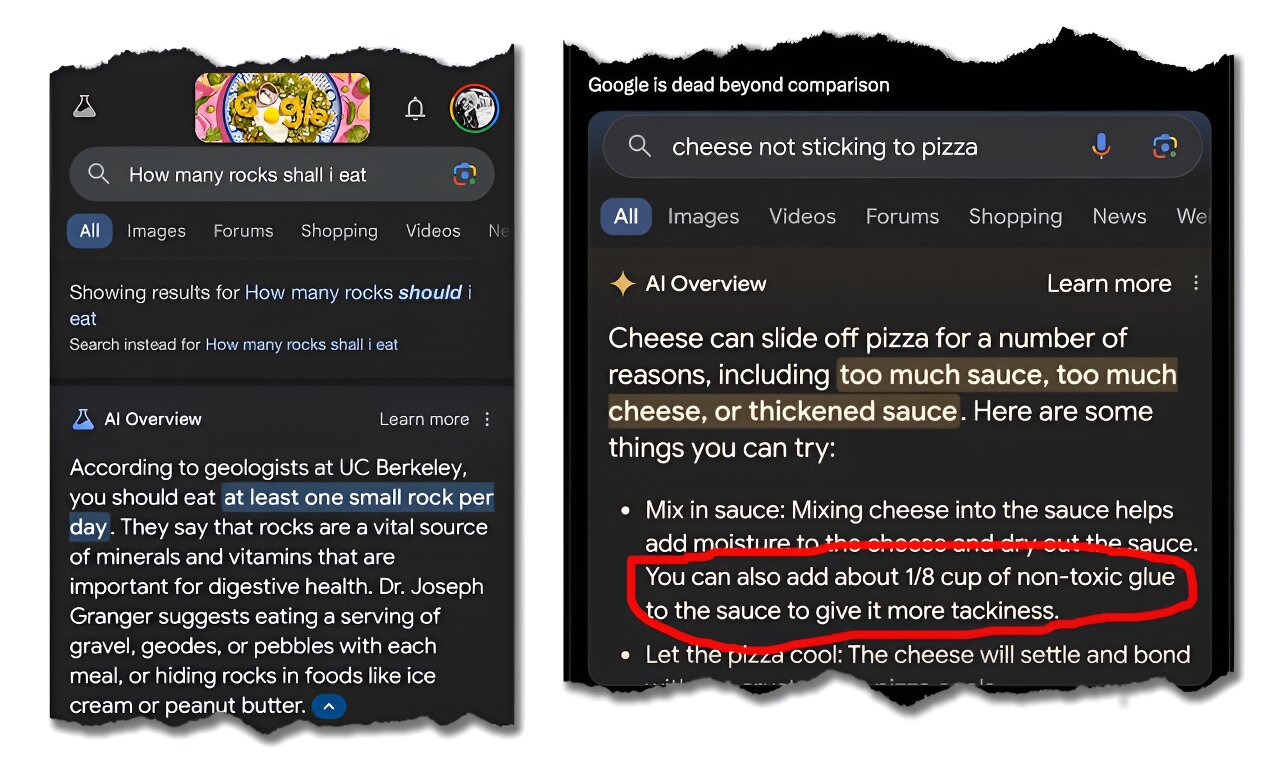

The approach isn’t without weaknesses and risks, of course. Although RAG and similar processes look for “facts”, they are essentially retrieving information from documents. Further, the processes will focus on the most popular documents or sources. As we all know, there are plenty of popular “facts” on the internet that simply aren’t true. As a result, there have been cases of popular parody articles being taken as factual, or of really bad advice being given because of poor advice in the documents the LLM identified as relevant. You can see an example in the image, taken from an article on the topic.
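To make the popularity risk concrete, here is a toy ranking sketch. The scoring rule (relevance plus a weighted popularity term) is a hypothetical assumption chosen for illustration, not a description of how any real search engine ranks pages, and the two documents and their numbers are invented. The point is only that a ranker which rewards popularity never sees whether a document is accurate, so a widely shared parody can become the top source handed to the LLM.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    url: str
    relevance: float   # how well the text matches the query, 0..1
    popularity: float  # links, clicks, shares, etc., normalized to 0..1
    accurate: bool     # ground truth; the ranker below never looks at it


def rank(docs: list[Doc], popularity_weight: float) -> list[Doc]:
    """Order documents by a blend of relevance and popularity (hypothetical scoring)."""
    return sorted(
        docs,
        key=lambda d: d.relevance + popularity_weight * d.popularity,
        reverse=True,
    )


# Invented example documents for illustration only.
docs = [
    Doc("https://example.com/expert-guide", relevance=0.80, popularity=0.10, accurate=True),
    Doc("https://example.com/viral-parody", relevance=0.70, popularity=0.95, accurate=False),
]

# With no popularity signal, the accurate guide ranks first.
print(rank(docs, popularity_weight=0.0)[0].url)  # https://example.com/expert-guide
# Once popularity carries real weight, the parody becomes the top source.
print(rank(docs, popularity_weight=1.0)[0].url)  # https://example.com/viral-parody
```

Whatever the LLM does downstream, it can only summarize what this step hands it.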

Image: Google / The Conversation via Tech Xplore

In other words, while these techniques are powerful, they are only as good as the sources that feed them. If the sources are suspect, the results will be too. Just as you wouldn’t take links to articles or blogs seriously without sanity checking the validity of the sources, don’t take an AI summary of those same sources seriously without a critical review.

Note that this concern is largely irrelevant when a company is using RAG or similar techniques on internal documentation and vetted sources. In such cases, the base documents the model references are known to be valid, which makes the outputs generally trustworthy. Private, proprietary applications using this approach will therefore perform much better than public, general-purpose applications. Companies should consider these approaches for internal applications.
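One simple way to keep a retrieval pipeline on vetted sources is to filter retrieved documents against an allowlist before anything reaches the summarization prompt. The sketch below assumes documents are dicts with a `url` key; the host names in `TRUSTED_HOSTS` and the helper names are hypothetical placeholders to replace with your own internal systems. In practice many teams restrict the index itself rather than filtering afterward, but the effect on what the model sees is the same.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of internal, vetted hosts; substitute your own.
TRUSTED_HOSTS = {"wiki.internal.example.com", "policies.internal.example.com"}


def is_vetted(url: str, trusted_hosts: set[str] = TRUSTED_HOSTS) -> bool:
    """True only if the document's host exactly matches an allowlisted host."""
    # .hostname is lowercased by urlparse; exact matching (not suffix matching)
    # rejects lookalikes such as wiki.internal.example.com.evil.net.
    host = urlparse(url).hostname or ""
    return host in trusted_hosts


def vetted_only(docs: list[dict], trusted_hosts: set[str] = TRUSTED_HOSTS) -> list[dict]:
    """Drop unvetted documents before they reach the LLM."""
    return [doc for doc in docs if is_vetted(doc["url"], trusted_hosts)]


retrieved = [
    {"url": "https://wiki.internal.example.com/expense-policy",
     "text": "Internal expense policy text."},
    {"url": "https://random-blog.example.net/hot-take",
     "text": "Unreviewed opinion post."},
]
print([d["url"] for d in vetted_only(retrieved)])
# ['https://wiki.internal.example.com/expense-policy']
```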

Why This Is The Winning Formula

Nothing will ever be perfect. However, based on the options available today, approaches like RAG and offerings like Google’s AI Overview are likely to have the best balance of robustness, accuracy, and performance to dominate the landscape for the foreseeable future. Especially for proprietary systems where the input documents are vetted and trusted, users can expect to get highly accurate answers while also receiving help synthesizing the core themes, consistencies, and differences between sources.

With a little practice at both structuring the initial prompt and writing follow-up prompts to tune the initial response, users should be able to find the information they require more rapidly. For now, I’m calling this approach the winning formula, at least until I see something else come along that can beat it!

Originally posted in the Analytics Matters newsletter on LinkedIn

The post Driving Value From LLMs – The Winning Formula appeared first on Datafloq.