Architecture diagrams lie, somewhat. Not on purpose. They show boxes and arrows in clean arrangements and make everything look sequential and tidy. What they can’t show is what fails first, what surprised you, and which decisions you’d fight hardest to keep if someone wanted to simplify things.

This is about those decisions.

The goal was to move reference data from an on-premises MongoDB instance, the registered golden source for enterprise reference data, into a governed cloud pipeline, with Athena as the query surface and an enterprise Data Marketplace as the publication layer. Simple enough in theory. The problems were in the details, as they always are.

Why Three Layers and Not One

The obvious path is: extract from MongoDB, put it somewhere in the cloud, let people query it. You can make that work, technically. What you end up with is a storage location that everyone gradually stops trusting, because it’s never clear whether what’s in it reflects the current state of the source or a snapshot from two weeks ago, and the schema is whatever the last person who ran the extraction thought was sensible.

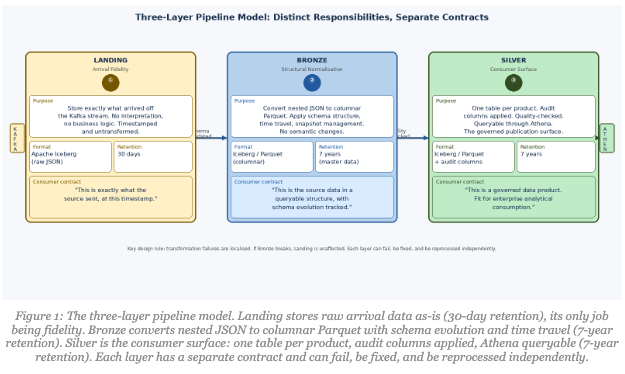

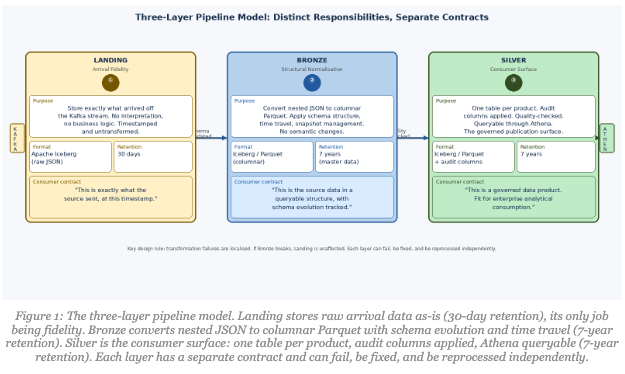

Three explicit layers, Landing, Bronze, and Silver, were a direct answer to that. Each has a distinct responsibility, a different file format, a different retention policy, and a different contract with the data.

Landing stores exactly what came off the Kafka stream: raw JSON, timestamped, untransformed, held in Apache Iceberg tables with a 30-day archive policy. No business logic, no interpretation. When something goes wrong downstream, you can go back to Landing and know with confidence it reflects what was in the source at that point in time. Thirty days covers any incident investigation cycle while keeping storage costs reasonable.

Bronze takes Landing’s raw data and establishes actual table structure, converting nested JSON to columnar Parquet format in Iceberg tables, with proper snapshots, schema evolution, and time travel capability. The archive policy steps up to seven years for master data, reflecting the regulatory context we operate in. Bronze is its own stage rather than being collapsed into Landing because you want transformation failures to be visible and localised. If Bronze breaks, Landing is unaffected. You can fix the issue and reprocess without touching the arrival checkpoint.

Silver is what consumers see. Shaped for analytical use, mandatory audit columns applied, quality-checked, queryable through Athena, stored as Parquet in Iceberg with seven-year retention. This is the product surface, and it should be held to a different standard than the intermediate layers. Blurring Bronze and Silver into one layer is a shortcut that makes debugging a nightmare.
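One way to keep the three contracts from drifting is to write them down as data that tooling can check. What follows is a minimal sketch, not our actual configuration: the class, field, and function names are hypothetical, and treating seven years as 7 * 365 days is a simplification I have made for the example. The formats and retention periods are the ones described above.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class LayerContract:
    """One layer's contract; field names are illustrative, not real config."""
    payload_format: str    # how the data is held inside the Iceberg table
    retention_days: int    # archive policy for the layer
    consumer_facing: bool  # only Silver is exposed to consumers


SEVEN_YEARS = 7 * 365  # simplification: ignores leap days

LAYERS = {
    "landing": LayerContract("raw JSON", 30, False),
    "bronze": LayerContract("Parquet", SEVEN_YEARS, False),
    "silver": LayerContract("Parquet", SEVEN_YEARS, True),
}


def is_past_retention(layer: str, written_on: date, today: date) -> bool:
    """True when data written on `written_on` is older than the layer's policy."""
    return today - written_on > timedelta(days=LAYERS[layer].retention_days)
```

A check like `is_past_retention("landing", written_on, today)` makes the 30-day window an explicit, testable rule rather than something remembered by whoever set up the bucket.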

What the Kafka Layer Actually Does

People describe Kafka as “the streaming layer” and move on. The decisions inside the Kafka Connect configuration were where a lot of the pipeline’s trustworthiness was actually built.

Two mechanisms ran in parallel inside Kafka Connect, and both were essential.

Dead Letter Queue for operational visibility. When a message failed, whether due to a malformed payload, type mismatch, or unexpected nesting, it went to a DLQ with a configurable retention period rather than being silently dropped or blocking the stream. The DLQ was what turned “we noticed something was wrong three days later” into “we got alerted within twenty minutes and had the bad events right there to inspect.” The difference between those two outcomes is significant in any environment, but especially so when downstream teams treat the data as authoritative.

Schema validation via a Schema Registry. Every event went through schema validation before reaching the S3 sink. If a source-side change altered field names or types, the pipeline rejected the event at Kafka rather than writing garbage into Landing. Quiet corruption is the worst kind of data problem, because you often don’t find out until a consumer’s job breaks in production on a Friday afternoon. Early rejection swaps a hidden failure discovered much later for a visible failure in a controlled place.

Together, these two things meant Landing could be treated as a trustworthy checkpoint rather than a dump of whatever came down the stream.
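To make the combined behaviour concrete, here is a small sketch in plain Python. In the real pipeline this work is done by Kafka Connect and the Schema Registry, not by code like this; the schema, field names, and functions below are hypothetical and only imitate the rule "validate first, quarantine failures with their reason, never drop silently".

```python
from typing import Any

# Hypothetical expected shape of one reference-data event: field name -> type.
EXPECTED_SCHEMA: dict[str, type] = {"code": str, "description": str, "version": int}


def validate(event: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return a list of problems; an empty list means the event conforms."""
    problems = []
    for field, expected in schema.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], expected):
            problems.append(f"wrong type for {field}: {type(event[field]).__name__}")
    for field in sorted(event.keys() - schema.keys()):
        problems.append(f"unexpected field: {field}")
    return problems


def route(events: list[dict[str, Any]], schema: dict[str, type]):
    """Split events into (landing, dlq); bad events keep their error context."""
    landing, dlq = [], []
    for event in events:
        problems = validate(event, schema)
        if problems:
            # Quarantine rather than drop, so the failure can be inspected later.
            dlq.append({"event": event, "errors": problems})
        else:
            landing.append(event)
    return landing, dlq


events = [
    {"code": "GB", "description": "United Kingdom", "version": 1},
    {"code": "FR", "desc": "France", "version": 1},         # renamed field
    {"code": "DE", "description": "Germany", "version": "2"},  # type changed
]
landing, dlq = route(events, EXPECTED_SCHEMA)
```

The point of keeping the error list next to the rejected event is that whoever picks up the alert can see why it failed without replaying anything.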

Two Transformation Stages, Two Different Jobs

It is worth being precise about something here, because it’s easy to give the wrong impression. We are working with reference data from an authoritative golden source. The business requirement explicitly stated that no business-logic transformation would be applied. This is a one-to-one mapping from source to destination. We are not enriching, aggregating, or deriving anything. The value proposition is faithful preservation.

But “no transformation” doesn’t mean “no work.” MongoDB stores nested JSON documents. Analytical consumers need flat columns in Parquet. Getting from one to the other is structural conversion, not semantic transformation, but it is still a non-trivial pipeline stage that can fail.

Stage 1: Landing to Bronze. The job takes raw JSON from the landing path, flattens nested sub-documents into a columnar structure, deduplicates by key, and writes the result as Parquet into an Iceberg table. A checksum validation confirms everything that left MongoDB arrived. No business semantics touched, no values changed. Structural conversion only.
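The three structural steps in Stage 1 can be sketched with the standard library alone. This is an illustration under assumptions, not the production job: the underscore naming for flattened columns, the `_id` key, and the SHA-256 digest are choices I made for the example, lists are left untouched, and the Parquet and Iceberg write is omitted entirely.

```python
import hashlib
import json
from typing import Any


def flatten(doc: dict[str, Any], prefix: str = "") -> dict[str, Any]:
    """Turn nested sub-documents into flat columns named parent_child."""
    flat: dict[str, Any] = {}
    for key, value in doc.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{column}_"))
        else:
            flat[column] = value  # values are copied as-is, never altered
    return flat


def dedupe_by_key(rows: list[dict[str, Any]], key: str) -> list[dict[str, Any]]:
    """Keep the last row seen for each key value."""
    latest: dict[Any, dict[str, Any]] = {}
    for row in rows:
        latest[row[key]] = row
    return list(latest.values())


def checksum(rows: list[dict[str, Any]]) -> str:
    """Order-independent digest over the canonical JSON form of each row."""
    digests = sorted(
        hashlib.sha256(json.dumps(row, sort_keys=True).encode("utf-8")).hexdigest()
        for row in rows
    )
    return hashlib.sha256("".join(digests).encode("utf-8")).hexdigest()


raw = [
    {"_id": "1", "code": "GB", "names": {"short": "UK", "long": "United Kingdom"}},
    {"_id": "2", "code": "FR", "names": {"short": "FR", "long": "France"}},
    {"_id": "1", "code": "GB", "names": {"short": "UK", "long": "United Kingdom"}},
]
bronze_rows = dedupe_by_key([flatten(doc) for doc in raw], key="_id")
```

Comparing `checksum(documents_sent)` with `checksum(documents_landed)` gives a yes-or-no answer to "did everything arrive", regardless of the order in which events were delivered.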

Stage 2: Bronze to Silver. A single MongoDB collection often holds multiple logical entity types: country codes, currency codes, organisational role types, all in one collection because that’s convenient for the operational system. For consumers, that is a mess. The Bronze-to-Silver stage splits each collection by data class into its own table. One product, one table. Governance becomes tractable because you can draw a boundary around each product.

Every Silver table gets a standard set of audit columns at this stage: CREATE_DATE_TIME, UPDATE_DATE_TIME, VALID_FROM and VALID_TO (distinguishing current from historical values), DELETE_FLAG (soft delete from the source system), CREATED_BY, UPDATED_BY, SOURCE_SYSTEM, JOB_NAME, JOB_RUN_ID, JOB_START_DTTM, and JOB_END_DTTM. More on why these matter shortly.

Keeping these as separate pipeline stages means each one can fail, be fixed, and be rerun independently. That matters more at 2am than any architectural elegance argument.
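Here is a rough sketch of the split and the audit stamping together. The column names are the ones listed above; everything else is an assumption for illustration, including the `data_class` discriminator field, the `JobRun` type, and the way each column is populated on first insert (an open-ended `VALID_TO` of `None` standing for "current").

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any

AUDIT_COLUMNS = (
    "CREATE_DATE_TIME", "UPDATE_DATE_TIME", "VALID_FROM", "VALID_TO",
    "DELETE_FLAG", "CREATED_BY", "UPDATED_BY", "SOURCE_SYSTEM",
    "JOB_NAME", "JOB_RUN_ID", "JOB_START_DTTM", "JOB_END_DTTM",
)


@dataclass(frozen=True)
class JobRun:
    """Hypothetical description of one pipeline run."""
    name: str
    run_id: str
    started: datetime
    ended: datetime
    user: str


def split_by_class(rows: list[dict[str, Any]], class_field: str = "data_class"):
    """Group Bronze rows into one list per data class (one product, one table)."""
    tables: dict[str, list[dict[str, Any]]] = {}
    for row in rows:
        tables.setdefault(row[class_field], []).append(row)
    return tables


def with_audit_columns(row: dict[str, Any], run: JobRun, source_system: str):
    """Return a copy of `row` stamped as a newly inserted, current record."""
    return {
        **row,
        "CREATE_DATE_TIME": run.started,
        "UPDATE_DATE_TIME": run.started,
        "VALID_FROM": run.started,
        "VALID_TO": None,        # assumption: None marks the current version
        "DELETE_FLAG": False,
        "CREATED_BY": run.user,
        "UPDATED_BY": run.user,
        "SOURCE_SYSTEM": source_system,
        "JOB_NAME": run.name,
        "JOB_RUN_ID": run.run_id,
        "JOB_START_DTTM": run.started,
        "JOB_END_DTTM": run.ended,
    }


bronze = [
    {"data_class": "country", "code": "GB"},
    {"data_class": "currency", "code": "GBP"},
    {"data_class": "country", "code": "FR"},
]
run = JobRun(
    name="bronze_to_silver",
    run_id="run-42",
    started=datetime(2024, 6, 5, 7, 0, tzinfo=timezone.utc),
    ended=datetime(2024, 6, 5, 7, 12, tzinfo=timezone.utc),
    user="pipeline",
)
silver = {
    name: [with_audit_columns(r, run, "mongodb") for r in rows]
    for name, rows in split_by_class(bronze).items()
}
```

The source values pass through untouched; the only thing added is metadata about how and when the row got there.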

CDC: Not the Easy Part

Change data capture gets described like a solved problem. Extract the changes, apply them downstream, done. What it actually gives you is events. The tricky parts are what you do with them: deduplication when events arrive out of order, applying deletes correctly via soft-delete flags rather than hard deletes, making sure a record that changed five times in an hour arrives downstream in the right final state.

The pipeline captures inserts, updates, and deletes from MongoDB and applies them accurately to the target, validating the change order to make sure events are consumed in the correct sequence. After the initial full data load, all subsequent synchronization runs through CDC only, with no reprocessing of the full dataset. The pipeline runs on a monthly batch cadence: the fifth of every month at 07:00 UTC, fully automated, no dependency on working days or holiday calendars.

The issue that generated the most support tickets, somewhat embarrassingly, was the absence of events. If nothing changed in MongoDB, nothing flows through the pipeline. That’s correct behaviour, perfectly aligned with how CDC works. But teams expecting a daily file drop as confirmation the pipeline was alive read “no new file” as “something is broken.” We built an explicit no-change signal: a small indicator that the pipeline ran, checked, found nothing new, and is healthy. Not glamorous engineering. It closed a significant number of unnecessary incidents.
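The ordering and soft-delete rules are easier to see in code than in prose. The sketch below assumes each change event carries a monotonically increasing sequence number; the `seq`, `op`, `key`, and `doc` field names are hypothetical, and the real pipeline's event format is not shown here.

```python
from typing import Any


def apply_cdc(target: dict[str, dict[str, Any]], events: list[dict[str, Any]]):
    """Apply change events to a copy of `target` (key -> row) in sequence order."""
    state = {key: dict(row) for key, row in target.items()}
    last_applied: dict[str, int] = {}  # key -> highest sequence already applied

    # Sorting restores the source order even if delivery was out of order.
    for event in sorted(events, key=lambda e: e["seq"]):
        key, seq, op = event["key"], event["seq"], event["op"]
        if seq <= last_applied.get(key, -1):
            continue  # duplicate delivery of a change we already applied
        if op in ("insert", "update"):
            state[key] = {**event["doc"], "DELETE_FLAG": False}
        elif op == "delete":
            if key in state:
                # Soft delete: keep the row and flag it instead of removing it.
                state[key]["DELETE_FLAG"] = True
        else:
            raise ValueError(f"unknown operation: {op}")
        last_applied[key] = seq
    return state


events = [
    {"seq": 3, "op": "update", "key": "GB", "doc": {"name": "United Kingdom"}},
    {"seq": 1, "op": "insert", "key": "GB", "doc": {"name": "Britain"}},
    {"seq": 2, "op": "insert", "key": "XX", "doc": {"name": "Test"}},
    {"seq": 4, "op": "delete", "key": "XX"},
    {"seq": 3, "op": "update", "key": "GB", "doc": {"name": "United Kingdom"}},
]
result = apply_cdc({}, events)
```

However many times a record changes between runs, the target ends up holding its final state, and a deleted record stays visible with its flag set.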
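The no-change signal amounts to writing a run summary on every run, including the empty ones. The following is a minimal sketch of that idea; the field names and status values are hypothetical and not the format we actually published.

```python
from datetime import datetime, timezone
from typing import Any


def run_summary(run_id: str, change_count: int, finished_at: datetime) -> dict[str, Any]:
    """Build the marker emitted at the end of every run, changes or not."""
    if change_count < 0:
        raise ValueError("change_count cannot be negative")
    return {
        "run_id": run_id,
        # An empty run is reported explicitly instead of producing nothing.
        "status": "CHANGES_APPLIED" if change_count else "NO_CHANGE",
        "change_count": change_count,
        "finished_at": finished_at.isoformat(),
    }


quiet_run = run_summary("run-43", 0, datetime(2024, 7, 5, 7, 4, tzinfo=timezone.utc))
busy_run = run_summary("run-42", 17, datetime(2024, 6, 5, 7, 12, tzinfo=timezone.utc))
```

With a marker like this, consumers can tell "ran and found nothing" apart from "did not run", which is the distinction the tickets were really asking about.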

Minimal Transformation Is Not Minimal Accountability

Because we were publishing authoritative reference data without enrichment, some stakeholders assumed the quality bar would be lighter. The logic was: we’re not changing much, so there’s less to get wrong.

The opposite is true. When the value proposition is “we preserved the truth exactly,” validation is what proves you did that. The quality gates work in layers. Schema validation at Kafka is the first gate: a schema mismatch fails the job and alerts the reference data owner team. Basic data quality checks follow: non-null enforcement for mandatory fields, allowed value validation for reference codes. Reconciliation runs between layers (record counts, null rates, key distributions), so any drift between Landing, Bronze, and Silver surfaces quickly. Checksum logic at Landing confirms everything that left MongoDB actually arrived. When twenty-one products all make the same promise, the validation proving that promise needs to be airtight.
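Reconciliation between two layers boils down to profiling both sides the same way and reporting where the profiles differ. The sketch below is illustrative only: the function names are hypothetical, and a distinct-key count stands in as a crude proxy for a key distribution. Which metrics must match exactly depends on the stage, since deduplication legitimately lowers the record count between Landing and Bronze.

```python
from typing import Any


def profile(rows: list[dict[str, Any]], key: str, mandatory: list[str]) -> dict[str, Any]:
    """Summarise a layer: record count, distinct keys, null rate per field."""
    count = len(rows)
    return {
        "record_count": count,
        "distinct_keys": len({row[key] for row in rows}),
        "null_rates": {
            field: (sum(1 for row in rows if row.get(field) is None) / count)
            if count else 0.0
            for field in mandatory
        },
    }


def reconcile(upstream, downstream, key: str, mandatory: list[str]) -> list[str]:
    """Return the names of the metrics that differ between the two layers."""
    up = profile(upstream, key, mandatory)
    down = profile(downstream, key, mandatory)
    return [metric for metric in up if up[metric] != down[metric]]


bronze = [{"id": 1, "code": "GB"}, {"id": 2, "code": "FR"}]
silver_ok = [{"id": 1, "code": "GB"}, {"id": 2, "code": "FR"}]
silver_nulled = [{"id": 1, "code": "GB"}, {"id": 2, "code": None}]
silver_short = [{"id": 1, "code": "GB"}]

clean = reconcile(bronze, silver_ok, key="id", mandatory=["code"])
nulled = reconcile(bronze, silver_nulled, key="id", mandatory=["code"])
short = reconcile(bronze, silver_short, key="id", mandatory=["code"])
```

An empty result means the two layers agree on every profiled metric; anything else names the metric to investigate first.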

What Audit Columns Actually Do

I used to think of audit columns as compliance decoration. Then I watched a team spend three days on what turned out to be a simple question: was this Silver record stale, soft-deleted, or just unchanged since the last run?

With the audit columns in place, that is a five-minute query. VALID_FROM and VALID_TO tell you whether you are looking at a current or historical value. DELETE_FLAG tells you if the source system soft-deleted the record. JOB_RUN_ID and JOB_START_DTTM tell you exactly which pipeline run produced the record. SOURCE_SYSTEM confirms provenance.

Without them, it’s a three-day archaeology project involving Airflow logs, Kafka offsets, and escalating frustration. The pattern repeated across multiple incidents. Not dramatic data corruption, just the ordinary operational questions that come up constantly when data is shared across teams. Audit columns turn those questions from investigations into lookups.
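The lookup itself is short. In practice it would be a query in Athena; the sketch below expresses the same decision in Python so the logic is visible. It assumes an open-ended `VALID_TO` is stored as `None`, and the function name and return labels are mine, not part of the platform.

```python
from datetime import datetime, timezone
from typing import Any


def classify(record: dict[str, Any], latest_run_id: str, as_of: datetime) -> str:
    """Answer 'what state is this Silver record in?' from audit columns alone."""
    if record["DELETE_FLAG"]:
        return "soft-deleted at source"
    valid_to = record["VALID_TO"]
    if valid_to is not None and valid_to <= as_of:
        return "historical"
    if record["JOB_RUN_ID"] == latest_run_id:
        return "current, written by the latest run"
    return "current, unchanged since an earlier run"


now = datetime(2024, 6, 5, 8, 0, tzinfo=timezone.utc)
record = {"code": "GB", "DELETE_FLAG": False, "VALID_TO": None, "JOB_RUN_ID": "run-41"}
state = classify(record, latest_run_id="run-42", as_of=now)
```

Here `state` says the record is current and was simply not touched by the latest run, which is usually the answer people were looking for.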

What Made This a Platform Rather Than Just a Pipeline

A pipeline gets data from A to B. A platform is something people can build on without needing to understand all the plumbing underneath.

The difference was the Data Marketplace and what it forced. The endpoint for a finished product is not “the Silver table exists.” It is “the product is listed in the Marketplace with metadata, a Kitemark quality score, documentation, and subscription behaviour.” Compliance with all active standards at deployment time is mandatory. Consumption occurs exclusively via the Marketplace subscription model. Not a suggestion. An enforced constraint.

That enforcement is what makes naming conventions matter in practice rather than in principle. A consumer searching for a dataset finds it using enterprise-standard terminology, not the internal shorthand that made sense to the team that built it. The metadata framework transition to FDM mapping is unglamorous work. It is also what makes the catalogue actually navigable.

The pipeline earned trust by being predictable. Schema validated. Bad events quarantined in the DLQ. JSON structurally converted to Parquet. Data classes partitioned into individual tables. Audit columns consistently applied. Products published with documentation. Consumers querying through Athena and subscribing through the Marketplace. Nothing surprising.

In a large enterprise, nothing surprising is the goal.