Chatbots have become invaluable tools for businesses, helping to improve efficiency and support employees. By sifting through troves of company data and documentation, LLMs can support employees by providing informed responses to a wide range of inquiries. For experienced employees, this can help minimize time spent on redundant, less productive tasks. For newer employees, it can not only shorten the time to a correct answer but also guide them through onboarding, assess their knowledge progression, and even suggest areas for further learning and development as they come fully up to speed.

For the foreseeable future, these capabilities appear poised to augment employees more than to replace them. And with looming challenges in worker availability in many developed economies, many organizations are rewiring their internal processes to take advantage of the support these tools can provide.

Scaling LLM-Based Chatbots Can Be Expensive

As businesses prepare to deploy chatbots broadly into production, many are encountering a significant challenge: cost. High-performing models are often expensive to query, and many modern chatbot applications, known as agentic systems, may decompose individual user requests into multiple, more-targeted LLM queries in order to synthesize a response. This can make scaling across the enterprise prohibitively expensive for many applications.

But consider the breadth of questions being generated by a group of employees. How dissimilar is each question? When individual employees ask separate but similar questions, could the response to an earlier inquiry be reused to address some or all of the needs of a later one? If we could reuse some of the responses, how many calls to the LLM could be avoided, and what might the cost implications be?
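These questions can be turned into a rough estimate. The sketch below is a simple cost model under stated assumptions: each request costs a fixed number of LLM calls (more than one for an agentic chain), a cache hit avoids all of them, and every request pays for one cache lookup. The function names and all numbers are hypothetical illustrations, not measurements.

```python
def estimate_llm_calls(requests: int, calls_per_request: int, hit_rate: float) -> float:
    """Expected number of LLM calls when a fraction `hit_rate` of requests is
    answered from the cache. A hit skips every LLM call the (possibly agentic)
    chain would make for that request; a miss pays all `calls_per_request`."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return requests * (1.0 - hit_rate) * calls_per_request


def estimate_cost(requests: int, calls_per_request: int, hit_rate: float,
                  cost_per_call: float, cost_per_lookup: float = 0.0) -> float:
    """Total cost: LLM calls made on cache misses plus one cache lookup per request."""
    llm_cost = estimate_llm_calls(requests, calls_per_request, hit_rate) * cost_per_call
    return llm_cost + requests * cost_per_lookup


# Hypothetical workload: 10,000 requests, 3 LLM calls per request.
baseline_calls = estimate_llm_calls(10_000, 3, hit_rate=0.0)  # 30000.0
cached_calls = estimate_llm_calls(10_000, 3, hit_rate=0.5)    # 15000.0
```

Because a hit removes every call the chain would have made, savings grow with both the hit rate and the number of LLM calls per request, while the lookup cost is paid on every request, hit or miss.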

Reusing Responses Could Avoid Unnecessary Cost

Consider a chatbot designed to answer questions about a company’s product features and capabilities. Using this tool, employees might ask questions to support their various engagements with customers.

In a standard approach, the chatbot would send every query to an underlying LLM, generating nearly identical responses for each of these similar questions. But if we programmed the chatbot application to first search a set of previously cached questions and responses for inquiries highly similar to the one the user is asking, and to reuse an existing response whenever one is found, we could avoid redundant calls to the LLM. This technique, known as semantic caching, is becoming widely adopted by enterprises because of the cost savings it offers.
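The core of semantic caching is a similarity lookup over embedded questions. On Databricks this lookup is handled by a vector index (Vector Search); the sketch below instead does a brute-force scan in plain Python so the mechanics are visible. It assumes each cached question has already been embedded by some embedding model (not shown), uses hypothetical toy vectors, and treats the 0.9 cosine-similarity threshold as an illustrative value rather than a recommendation.

```python
import math
from typing import List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def lookup_cached_response(
    query_vec: Sequence[float],
    cache: List[Tuple[Sequence[float], str]],
    threshold: float = 0.9,  # hypothetical cut-off for "highly similar"
) -> Optional[str]:
    """Return the cached response whose question is most similar to the query,
    or None if no cached question clears the similarity threshold."""
    best_score, best_response = -1.0, None
    for cached_vec, response in cache:
        score = cosine_similarity(query_vec, cached_vec)
        if score > best_score:
            best_score, best_response = score, response
    return best_response if best_score >= threshold else None


# Toy cache of (question embedding, response) pairs; vectors are made up.
cache = [
    ([1.0, 0.0, 0.0], "Cached answer about Vector Search"),
    ([0.0, 1.0, 0.0], "Cached answer about Model Serving"),
]
print(lookup_cached_response([0.95, 0.1, 0.0], cache))  # Cached answer about Vector Search
print(lookup_cached_response([0.5, 0.5, 0.7], cache))   # None (best similarity is about 0.50)
```

The threshold is the main design knob: lowering it raises the hit rate but makes it more likely that a cached answer is served for a question that is only loosely related.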

Building a Chatbot with Semantic Caching on Databricks

At Databricks, we operate a public-facing chatbot that answers questions about our products. This chatbot is exposed in our official documentation and often receives similar user inquiries. In this blog, we evaluate Databricks’ chatbot in a series of notebooks to understand how semantic caching can improve efficiency by reducing redundant computation. For demonstration purposes, we used a synthetically generated dataset that simulates the kinds of repetitive questions the chatbot might receive.

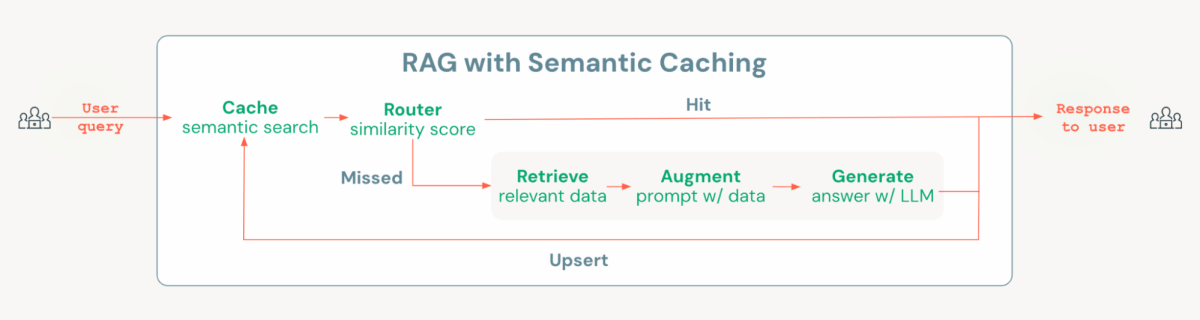

Databricks Mosaic AI provides all the components needed to build a cost-optimized chatbot solution with semantic caching, including Vector Search for creating a semantic cache, MLflow and Unity Catalog for managing models and chains, and Model Serving for deploying and monitoring, as well as tracking usage and payloads. To implement semantic caching, we add a layer at the beginning of the standard Retrieval-Augmented Generation (RAG) chain. This layer checks whether a similar question already exists in the cache; if it does, the cached response is retrieved and served. If not, the system proceeds with executing the RAG chain. This simple but powerful routing logic can be easily implemented using open source tools like LangChain or MLflow’s pyfunc.
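The routing layer can be sketched in plain Python as a callable that wraps a RAG chain. This is an illustrative sketch, not LangChain or MLflow pyfunc code: `SemanticCacheRouter`, `toy_embed`, and `fake_rag_chain` are hypothetical names, the keyword-count `toy_embed` merely stands in for a real embedding model, and the in-memory list stands in for a Vector Search index.

```python
import math
from typing import Callable, List, Sequence, Tuple


class SemanticCacheRouter:
    """Serve a cached answer for highly similar questions; otherwise run the RAG chain."""

    def __init__(self, embed: Callable[[str], Sequence[float]],
                 rag_chain: Callable[[str], str], threshold: float = 0.9):
        self.embed = embed            # hypothetical embedding function
        self.rag_chain = rag_chain    # hypothetical full RAG chain
        self.threshold = threshold    # illustrative similarity cut-off
        self.entries: List[Tuple[Sequence[float], str]] = []
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        na = math.sqrt(sum(x * x for x in a))
        nb = math.sqrt(sum(y * y for y in b))
        return dot / (na * nb) if na and nb else 0.0

    def __call__(self, question: str) -> str:
        vec = self.embed(question)
        # 1. Check whether a sufficiently similar question is already cached.
        scored = [(self._cosine(vec, v), answer) for v, answer in self.entries]
        if scored:
            score, answer = max(scored, key=lambda pair: pair[0])
            if score >= self.threshold:
                self.hits += 1
                return answer  # cache hit: serve the stored response
        # 2. Cache miss: run the full RAG chain, then store the result for reuse.
        self.misses += 1
        answer = self.rag_chain(question)
        self.entries.append((vec, answer))
        return answer


# Toy stand-ins for illustration only.
VOCAB = ["vector", "search", "serving", "endpoint", "create", "index"]

def toy_embed(text: str) -> List[float]:
    words = text.lower().replace("?", "").split()
    return [float(words.count(term)) for term in VOCAB]

calls: List[str] = []

def fake_rag_chain(question: str) -> str:
    calls.append(question)  # record each (expensive) chain invocation
    return f"LLM answer to: {question}"

router = SemanticCacheRouter(toy_embed, fake_rag_chain, threshold=0.9)
first = router("How do I create a vector search index?")    # miss: runs the chain
second = router("How can I create a Vector Search index?")  # hit: reuses the first answer
third = router("How do I create a serving endpoint?")       # miss: runs the chain
```

Writing each miss back to the cache is what lets later, similar questions hit, and the `hits` and `misses` counters are the raw inputs to a hit-ratio metric.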

In the notebooks, we demonstrate how to implement this solution on Databricks, highlighting how semantic caching can reduce both latency and cost compared to a standard RAG chain when tested with the same set of questions.

In addition to the efficiency improvement, we also show how semantic caching affects response quality using an LLM-as-a-judge approach in MLflow. While semantic caching improves efficiency, there is a slight drop in quality: evaluation results show that the standard RAG chain performed marginally better on metrics such as answer relevance. Such small declines in quality are expected when responses are retrieved from the cache. The key is to determine whether these quality differences are acceptable given the significant cost and latency reductions the caching solution provides. Ultimately, the decision should be based on how these trade-offs affect the overall business value of your use case.
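One way to make this trade-off explicit is to put the judge scores and per-request costs of both chains side by side against a tolerance you choose. The helper below is a hypothetical sketch: `compare_chains` and `max_quality_drop` are illustrative names, it measures the quality drop relative to the baseline, and the scores and costs in the example are invented inputs, not results from the notebooks.

```python
from statistics import mean
from typing import Dict, Sequence


def compare_chains(baseline_scores: Sequence[float], cached_scores: Sequence[float],
                   baseline_cost: float, cached_cost: float,
                   max_quality_drop: float = 0.05) -> Dict[str, object]:
    """Compare mean judge scores and per-request cost of two chains.

    `max_quality_drop` is a hypothetical business tolerance for the relative
    decline in mean score that you are willing to accept for the savings."""
    baseline_quality = mean(baseline_scores)
    cached_quality = mean(cached_scores)
    quality_drop = (baseline_quality - cached_quality) / baseline_quality
    cost_saving = (baseline_cost - cached_cost) / baseline_cost
    return {
        "quality_drop": quality_drop,
        "cost_saving": cost_saving,
        "acceptable": quality_drop <= max_quality_drop,
    }


# Invented per-question judge scores (for example on a 1-5 scale) and relative costs.
result = compare_chains([5, 4, 5, 4], [4, 4, 5, 4], baseline_cost=1.0, cached_cost=0.4)
```

Here the invented inputs give a relative quality drop of about 5.6% for a 60% cost saving, which fails a 5% tolerance but would pass a 10% one; the right tolerance is a business decision, not a technical constant.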

Why Databricks?

Databricks provides an optimal platform for building cost-optimized chatbots with caching capabilities. With Databricks Mosaic AI, users have native access to all the necessary components, namely a vector database, agent development and evaluation frameworks, serving, and monitoring, on a unified, highly governed platform. This ensures that key assets, including data, vector indexes, models, agents, and endpoints, are centrally managed under robust governance.

Databricks Mosaic AI also offers an open architecture, allowing users to experiment with various models for embeddings and generation. Leveraging the Databricks Mosaic AI Agent Framework and Evaluation tools, users can rapidly iterate on applications until they meet production-level standards. Once deployed, KPIs like hit ratio and latency can be monitored using MLflow traces, which are automatically logged to Inference Tables for easy tracking.
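Once per-request outcomes are logged, the KPIs mentioned here reduce to simple aggregates. The sketch below assumes hypothetical records with `cache_hit` and `latency_ms` fields; extracting those values from MLflow traces or Inference Tables depends on their actual schema, which is not reproduced here.

```python
from statistics import mean
from typing import Dict, List, Optional


def cache_kpis(records: List[Dict]) -> Dict[str, Optional[float]]:
    """Summarize cache hit ratio and latency from per-request records.

    Each record is assumed (hypothetically) to carry a boolean `cache_hit`
    and a numeric `latency_ms`."""
    if not records:
        raise ValueError("records must not be empty")
    hit_latencies = [r["latency_ms"] for r in records if r["cache_hit"]]
    miss_latencies = [r["latency_ms"] for r in records if not r["cache_hit"]]
    return {
        "hit_ratio": len(hit_latencies) / len(records),
        "mean_latency_ms": mean(hit_latencies + miss_latencies),
        "mean_hit_latency_ms": mean(hit_latencies) if hit_latencies else None,
        "mean_miss_latency_ms": mean(miss_latencies) if miss_latencies else None,
    }


# Invented example values for illustration only.
records = [
    {"cache_hit": True, "latency_ms": 100},
    {"cache_hit": True, "latency_ms": 200},
    {"cache_hit": False, "latency_ms": 2000},
    {"cache_hit": False, "latency_ms": 3000},
]
kpis = cache_kpis(records)  # hit_ratio 0.5, mean hit latency 150, mean miss latency 2500
```

Splitting latency by hit and miss, rather than reporting only the overall mean, shows how much of the end-to-end improvement comes from the cache itself.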

If you’re looking to implement semantic caching for your AI system on Databricks, check out this project, which is designed to help you get started quickly and efficiently.