Welcome to the first installment of a series of posts discussing the recently introduced Cloudera AI Inference service.

Today, Artificial Intelligence (AI) and Machine Learning (ML) are more crucial than ever for organizations that want to turn data into a competitive advantage. To unlock the full potential of AI, however, businesses need to deploy models and AI applications at scale, in real time, and with low latency and high throughput. This is where the Cloudera AI Inference service comes in. It is a powerful deployment environment that enables you to integrate and deploy generative AI (GenAI) and predictive models into your production environments, incorporating Cloudera's enterprise-grade security, privacy, and data governance.

Over the next several weeks, we'll explore the Cloudera AI Inference service in depth, providing you with a comprehensive introduction to its capabilities, benefits, and use cases.

In this series, we'll delve into topics such as:

- A Cloudera AI Inference service architecture deep dive

- Key features and benefits of the service, and how it complements Cloudera AI Workbench

- Service configuration and sizing of model deployments based on projected workloads

- How to implement a Retrieval-Augmented Generation (RAG) system using the service

- Exploring different use cases for which the service is a great choice

If you're interested in unlocking the full potential of AI and ML in your organization, stay tuned for our next posts, where we'll dig deeper into the world of Cloudera AI Inference.

What is the Cloudera AI Inference service?

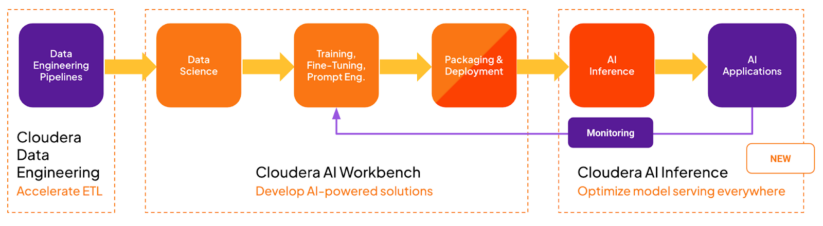

The Cloudera AI Inference service is a extremely scalable, safe, and high-performance deployment surroundings for serving manufacturing AI fashions and associated functions. The service is focused on the production-serving finish of the MLOPs/LLMOPs pipeline, as proven within the following diagram:

It complements Cloudera AI Workbench (previously known as Cloudera Machine Learning Workspace), a deployment environment that is more focused on the exploration, development, and testing phases of the MLOps workflow.

Why did we build it?

The emergence of GenAI, sparked by the release of ChatGPT, has facilitated the broad availability of high-quality, open-source large language models (LLMs). Services like Hugging Face and the ONNX Model Zoo have made it easy to access a wide range of pre-trained models. This availability highlights the need for a robust service that enables customers to seamlessly integrate and deploy pre-trained models from various sources into production environments. To meet the needs of our customers, the service must be highly:

- Secure – strong authentication and authorization, private, and protected

- Scalable – hundreds of models and applications with autoscaling capability

- Reliable – minimalist, with fast recovery from failures

- Manageable – easy to operate, with rolling updates

- Standards compliant – adopts market-leading API standards and model frameworks

- Resource efficient – fine-grained resource controls and scale to zero

- Observable – monitors system and model performance

- Performant – best-in-class latency, throughput, and concurrency

- Isolated – avoids noisy neighbors to provide strong service SLAs

These and other considerations led us to create the Cloudera AI Inference service as a new, purpose-built service for hosting all production AI models and related applications. It is ideal for deploying always-on AI models and applications that serve business-critical use cases.

High-level architecture

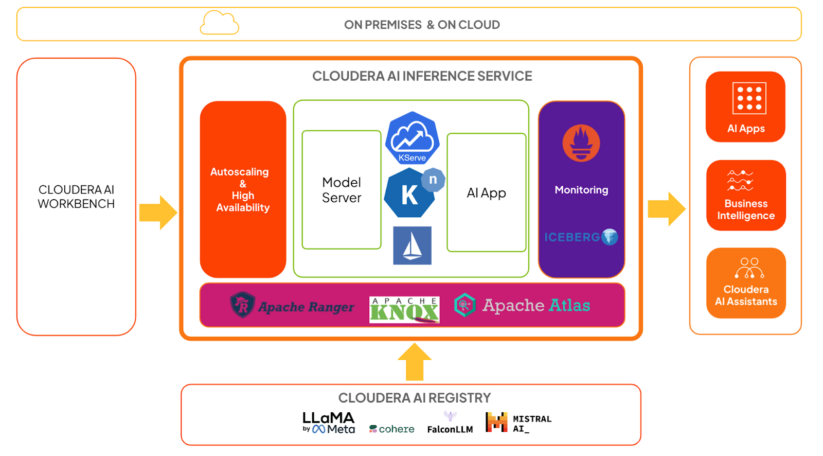

The diagram above shows a high-level architecture of the Cloudera AI Inference service in context:

- KServe and Knative handle model and application orchestration, respectively. Knative provides the framework for autoscaling, including scale to zero.

- Model servers are responsible for running models using highly optimized frameworks, which we will cover in detail in a later post.

- Istio provides the service mesh, and we take advantage of its extension capabilities to add strong authentication and authorization with Apache Knox and Apache Ranger.

- Inference request and response payloads are shipped asynchronously to Apache Iceberg tables. Teams can analyze the data using any BI tool for model monitoring and governance purposes.

- System metrics, such as inference latency and throughput, are available as Prometheus metrics. Data teams can use any metrics dashboarding tool to monitor them.

- Users can train and/or fine-tune models in AI Workbench and deploy them to the Cloudera AI Inference service for production use cases.

- Users can deploy trained models, including GenAI models or predictive deep learning models, directly to the Cloudera AI Inference service.

- Models hosted on the Cloudera AI Inference service can easily integrate with AI applications, such as chatbots, virtual assistants, RAG pipelines, real-time and batch predictions, and more, all through standard protocols like the OpenAI API and the Open Inference Protocol.

- Users can manage all of their models and applications on the Cloudera AI Inference service with common CI/CD systems using Cloudera service accounts, also known as machine users.

- The service can efficiently orchestrate hundreds of models and applications and dynamically scale each deployment to hundreds of replicas, provided compute and networking resources are available.
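Because models are exposed through standard protocols such as the OpenAI API and the Open Inference Protocol, a client can build requests without any Cloudera-specific library. The sketch below builds (but does not send) the JSON bodies for an OpenAI-style chat completion and an Open Inference Protocol (V2) REST inference call, using only the Python standard library. It rests on assumptions: the base URL, model names, and the input tensor name `input-0` are hypothetical placeholders, and the bearer-token `Authorization` header is an assumed authentication scheme. Check the exact endpoint paths and authentication for a real deployment against the service's own documentation.

```python
import json
import urllib.request

# Hypothetical base URL of a deployed model endpoint; replace with a real one.
BASE_URL = "https://inference.example.com/endpoints/my-model"


def openai_chat_request(base_url: str, model: str, prompt: str, token: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        # Assumption: the endpoint accepts a bearer token.
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {token}"},
        method="POST",
    )


def oip_infer_request(base_url: str, model_name: str, features: list, token: str) -> urllib.request.Request:
    """Build (but do not send) an Open Inference Protocol (V2) REST inference request."""
    body = {
        "inputs": [
            {
                "name": "input-0",  # hypothetical tensor name; must match the model's signature
                "shape": [len(features), len(features[0])],
                "datatype": "FP32",
                # The V2 protocol accepts tensor data flattened in row-major order.
                "data": [x for row in features for x in row],
            }
        ]
    }
    return urllib.request.Request(
        url=f"{base_url}/v2/models/{model_name}/infer",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {token}"},
        method="POST",
    )
```

To send either request, pass it to `urllib.request.urlopen`. That step needs a live endpoint and a valid token, so it is left out here. Keeping request construction separate from transport also makes it easy to swap in another HTTP client, or the official OpenAI SDK pointed at a custom base URL.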
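To make "autoscaling, including scale to zero" more concrete, here is a deliberately simplified sketch of concurrency-based scaling in the spirit of Knative's autoscaler: divide the observed number of in-flight requests by a per-replica target, round up, and clamp to configured bounds. This is not Knative's actual implementation, which averages over stable and panic windows, applies a target utilization, and waits for a grace period before scaling to zero. The function and parameter names are hypothetical.

```python
import math


def desired_replicas(observed_concurrency: float,
                     target_per_replica: float,
                     min_replicas: int = 0,
                     max_replicas: int = 100) -> int:
    """Simplified concurrency-based replica count (illustrative, not Knative's code).

    With min_replicas=0, zero observed traffic yields zero replicas (scale to zero).
    """
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    # Round up so that no replica exceeds its concurrency target.
    wanted = math.ceil(observed_concurrency / target_per_replica)
    # Clamp to the configured bounds (e.g. limited by available compute).
    return max(min_replicas, min(max_replicas, wanted))
```

For example, `desired_replicas(25, 10)` returns 3, `desired_replicas(0, 10)` returns 0, and setting `min_replicas=1` keeps one warm replica for latency-sensitive, always-on deployments at the cost of idle resources.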

Conclusion

In this first post, we introduced the Cloudera AI Inference service, explained why we built it, and took a high-level tour of its architecture. We also outlined many of its capabilities. We will dive deeper into the architecture in our next post, so please stay tuned.